Regarding dim=-1 in fastai/courses/dl1/lesson6-rnn.ipynb

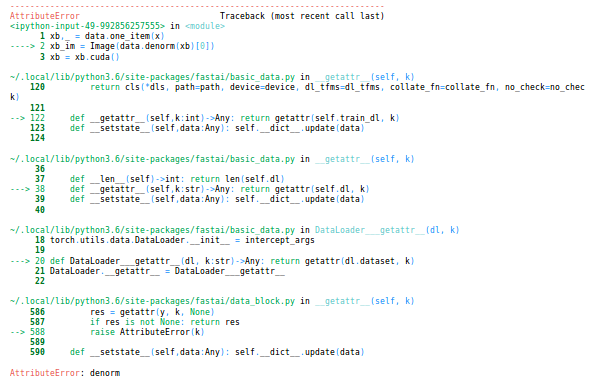

Has anybody else run into this error?

TypeError: log_softmax() got an unexpected keyword argument 'dim'

It happens on the last line of `forward` in the RNN models, where the log-softmax is called:

    def forward(self, *cs):
        bs = cs[0].size(0)
        h = V(torch.zeros(bs, n_hidden).cuda())
        for c in cs:
            inp = F.relu(self.l_in(self.e(c)))
            h = F.tanh(self.l_hidden(h+inp))
        return F.log_softmax(self.l_out(h), dim=-1)  # <===

Looks like it should be changed to:

    return F.log_softmax(self.l_out(h))  # <===

Looking at this thread:

it seems that this parameter has been changed in PyTorch and should be removed from CharLoopModel, CharLoopConcatModel and CharRnn. The most up-to-date notebook is probably not on GitHub (?). For now I have just removed it in my local copy. @jeremy do you have a more up-to-date version from the lesson that you could check in?
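As an alternative to editing each model, a small wrapper can try the `dim` keyword first and fall back to the call without it when the installed function rejects it. This is only a sketch: `call_with_optional_dim` is a made-up helper name, not part of PyTorch or fastai, and the two stand-in functions below just mimic the two signatures so the snippet runs without PyTorch installed.

```python
def call_with_optional_dim(fn, x, dim=-1):
    """Call fn(x, dim=dim); if fn does not accept `dim`, retry as fn(x)."""
    try:
        return fn(x, dim=dim)
    except TypeError as err:
        # Only swallow the specific "unexpected keyword argument 'dim'" failure;
        # any other TypeError is a real bug and should surface.
        if "unexpected keyword argument 'dim'" not in str(err):
            raise
        return fn(x)


# Hypothetical stand-ins for the two signatures (not real PyTorch functions).
def old_style(x):
    return ("old", x)


def new_style(x, dim):
    return ("new", x, dim)


print(call_with_optional_dim(old_style, 1))
print(call_with_optional_dim(new_style, 1))
```

In the models above, the last line of `forward` would then become `return call_with_optional_dim(F.log_softmax, self.l_out(h))`, assuming the error really does come from the installed `F.log_softmax` not accepting `dim`.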

Thanks

It’s clearer now, but I still feel like I’m missing some pieces, such as “what is a latent factor” and a few other details. I’ll find them by myself and come back to your explanation. That really helps, thanks a lot.
