It’s because you connect n_factors nodes to n_weights` in the next layer. The Weight Matrix doesn’t depend on the Number of input users, but only on the number of factors. It’s of shape - n_factors x n_weights

Thanks for explaining @ramesh. I was distracted/confused by the embedding size and didn’t have a sense of the size of the network layer.

Sure then just check out the source code for the class we used. It’s pretty short. Just ask if you have questions about it.

Is there a way in fastai to add Dropout on the go?

Like we do with data using learn.set_data(), is there a similar way to set dropout ps in the same learn object, thereby avoiding a new model creation and starting from scratch?

Honestly, I was thinking the same thing – all those Excel tricks would make a great Youtube lecture series.

I’ve been going back and forth like this :

??ColumnarModelData.from_data_frames

??ColumnarModelData

??ModelData

??ColumnarDataset.from_data_frames

??ColumnarDataset

??Dataset

??MixedInputModel

??fit

??EmbeddingDotBias

??CollabFilterDataset

??ColumnarModelData

??CollabFilterLearner

Couldn’t figure out how to get that iterable input tensor, which is like

[

…

LongTensor,

…

LongTensor,

…,

FloatTensor]

Ah well you definitely want to learn how to use the python debugger. Set a breakpoint in ColumnarModelData using the method shown here pdb – Interactive Debugger - Python Module of the Week , and then step through the code. Use print in the debugger to see what the values of the various parameters and attributes are. You’ll be able to watch the DataLoaders get created!

I’m happy to walk you through this BTW, but I suspect you’ll find it quite a bit more useful to learn to do this yourself.

Also, you should invest some time in learning to use your editor to jump to the definition of a method. You can use the Sourcegraph chrome extension to do that in the github repo directly, but better is to use your editor. What editor do you use?

No, you have to change the actual model to add dropout.

I was using jupyter to look at source code for fastai so far. Other than that we’ve used pycharm with in Prof. Parr’s classes. I haven’t done much source code inspection before so I don’t have any preference, I will start learning with your tips. Thanks

You can use pycharm to jump to definitions - it’s a great editor for that

Hi guys!

I’m getting an error using my own dataset when I tried execute fit from the learner from CollabFilterDataset:

TypeError: mul received an invalid combination of arguments - got (numpy.int64), but expected one of:

* (float value)

didn't match because some of the arguments have invalid types: (numpy.int64)

* (torch.cuda.FloatTensor other)

didn't match because some of the arguments have invalid types: (numpy.int64)

The stack trace: https://gist.github.com/thiagolcks/3849fcc5eb43eaaf2c0fb4032a4073d0

I’ve tried to load the dataset from CSV and from dataframe, same result.

Any ideia?

I’ve found the issue! My ratings were integers, when I changed them to float it worked like a charm:

ratings['rating'] = ratings['rating'].astype(float)

If you have the time and interested, a PR which cast the ratings to floats in that class would be a nice addition…

Created one …

But by default they were of float type…

Is it possible to extract the outputs from a fine-tuned model at some arbitrary layer i.e. the layer before the classification layer?

To be clear, I don’t want to just use a pre-trained imagenet model without the top layer (i.e. bottleneck features), but instead I want to do this with a model that I have already fine-tuned to a specific dataset.

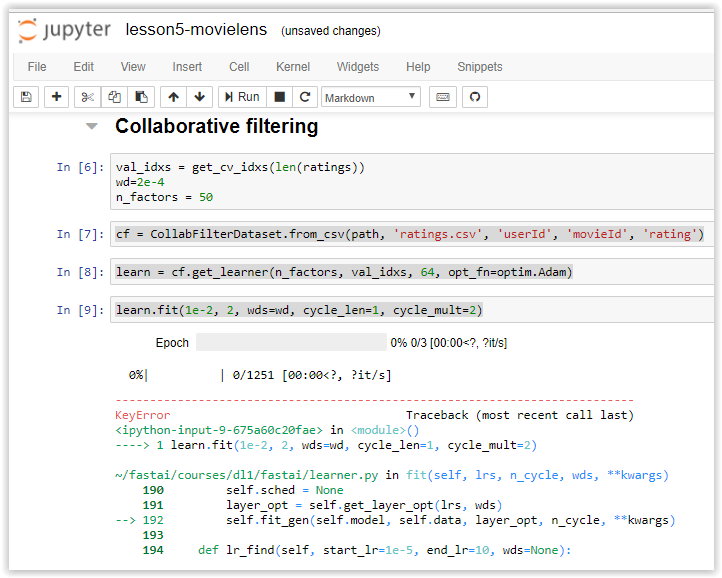

Getting an error tonight (12/03/2017 in USA) when trying to run the movelens data. I had performed a git pull and conda env update before running the notebook:

The full trace:

---------------------------------------------------------------------------

KeyError Traceback (most recent call last)

<ipython-input-9-675a60c20fae> in <module>()

----> 1 learn.fit(1e-2, 2, wds=wd, cycle_len=1, cycle_mult=2)

~/fastai/courses/dl1/fastai/learner.py in fit(self, lrs, n_cycle, wds, **kwargs)

190 self.sched = None

191 layer_opt = self.get_layer_opt(lrs, wds)

--> 192 self.fit_gen(self.model, self.data, layer_opt, n_cycle, **kwargs)

193

194 def lr_find(self, start_lr=1e-5, end_lr=10, wds=None):

~/fastai/courses/dl1/fastai/learner.py in fit_gen(self, model, data, layer_opt, n_cycle, cycle_len, cycle_mult, cycle_save_name, metrics, callbacks, use_wd_sched, **kwargs)

137 n_epoch = sum_geom(cycle_len if cycle_len else 1, cycle_mult, n_cycle)

138 fit(model, data, n_epoch, layer_opt.opt, self.crit,

--> 139 metrics=metrics, callbacks=callbacks, reg_fn=self.reg_fn, clip=self.clip, **kwargs)

140

141 def get_layer_groups(self): return self.models.get_layer_groups()

~/fastai/courses/dl1/fastai/model.py in fit(model, data, epochs, opt, crit, metrics, callbacks, **kwargs)

80 stepper.reset(True)

81 t = tqdm(iter(data.trn_dl), leave=False, total=len(data.trn_dl))

---> 82 for (*x,y) in t:

83 batch_num += 1

84 for cb in callbacks: cb.on_batch_begin()

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/tqdm/_tqdm.py in __iter__(self)

951 """, fp_write=getattr(self.fp, 'write', sys.stderr.write))

952

--> 953 for obj in iterable:

954 yield obj

955 # Update and possibly print the progressbar.

~/fastai/courses/dl1/fastai/dataloader.py in __iter__(self)

64 def __iter__(self):

65 with ThreadPoolExecutor(max_workers=self.num_workers) as e:

---> 66 for batch in e.map(self.get_batch, iter(self.batch_sampler)):

67 yield get_tensor(batch, self.pin_memory)

68

~/src/anaconda3/envs/fastai/lib/python3.6/concurrent/futures/_base.py in result_iterator()

584 # Careful not to keep a reference to the popped future

585 if timeout is None:

--> 586 yield fs.pop().result()

587 else:

588 yield fs.pop().result(end_time - time.time())

~/src/anaconda3/envs/fastai/lib/python3.6/concurrent/futures/_base.py in result(self, timeout)

423 raise CancelledError()

424 elif self._state == FINISHED:

--> 425 return self.__get_result()

426

427 self._condition.wait(timeout)

~/src/anaconda3/envs/fastai/lib/python3.6/concurrent/futures/_base.py in __get_result(self)

382 def __get_result(self):

383 if self._exception:

--> 384 raise self._exception

385 else:

386 return self._result

~/src/anaconda3/envs/fastai/lib/python3.6/concurrent/futures/thread.py in run(self)

54

55 try:

---> 56 result = self.fn(*self.args, **self.kwargs)

57 except BaseException as exc:

58 self.future.set_exception(exc)

~/fastai/courses/dl1/fastai/dataloader.py in get_batch(self, indices)

60 def __len__(self): return len(self.batch_sampler)

61

---> 62 def get_batch(self, indices): return self.collate_fn([self.dataset[i] for i in indices])

63

64 def __iter__(self):

~/fastai/courses/dl1/fastai/dataloader.py in <listcomp>(.0)

60 def __len__(self): return len(self.batch_sampler)

61

---> 62 def get_batch(self, indices): return self.collate_fn([self.dataset[i] for i in indices])

63

64 def __iter__(self):

~/fastai/courses/dl1/fastai/column_data.py in __getitem__(self, idx)

11

12 def __len__(self): return len(self.y)

---> 13 def __getitem__(self, idx): return [o[idx] for o in self.xs] + [self.y[idx]]

14

15 @classmethod

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/pandas/core/series.py in __getitem__(self, key)

621 key = com._apply_if_callable(key, self)

622 try:

--> 623 result = self.index.get_value(self, key)

624

625 if not is_scalar(result):

~/src/anaconda3/envs/fastai/lib/python3.6/site-packages/pandas/core/indexes/base.py in get_value(self, series, key)

2555 try:

2556 return self._engine.get_value(s, k,

-> 2557 tz=getattr(series.dtype, 'tz', None))

2558 except KeyError as e1:

2559 if len(self) > 0 and self.inferred_type in ['integer', 'boolean']:

pandas/_libs/index.pyx in pandas._libs.index.IndexEngine.get_value()

pandas/_libs/index.pyx in pandas._libs.index.IndexEngine.get_value()

pandas/_libs/index.pyx in pandas._libs.index.IndexEngine.get_loc()

pandas/_libs/hashtable_class_helper.pxi in pandas._libs.hashtable.Int64HashTable.get_item()

pandas/_libs/hashtable_class_helper.pxi in pandas._libs.hashtable.Int64HashTable.get_item()

KeyError: 1230

Tried Restarting the kernel?

Have a look at this notebook contributed by merajat

Hello, I do not have excel from Microsoft and tried to open the excel sheet collab_filter.xlsx of @jeremy with Open Office and Google Office without success. Is there any other possibilities ?

@pierreguillou you can open this excels with Libreoffice or Openoffice but macros will not work. (And you need Jeremy’s macros to make this notebooks work). Problem is that macro languaje of excel is propietary and different to the one used in open source programs.

So… as far as I know, unless you edit this macros and adapt them to Openoffice or Libreoffice I dont think there is much to do. A pity cause MS makes you buy all the package office360 that is an overkill if you just want to run this notebooks. An option, maybe, get the trial version?