Hello everyone,

First of all thanks for the amazing courses and library, it is a revelation!

I am currently trying to implement the language model approach, shown in Lesson 4, on a specific urban dataset that we have collected. The dataset is stored in a DataFrame and has a pretty straightforward structure: each line in the dataset represents a different geographic location and each location has a description column that contains a list of strings ‘seen’ in that location. The df is quite large, approximately 270k rows and the total token count is around 60m words . I have previously run other NLP type models (eg. doc2vec, tfidf approaches with ML and DL, etc.) without any (serious) memory issues. However those approaches have internal ways of limiting memory requirements (eg. max_vocab size, etc.).

Since this dataset is in a dataframe, I’m using the .from_dataframes option, while passing the same settings for bs(=64) and bptt(=70) as in the course. However, when I try to generate the vocabulary (md command) I get an out of memory error (changing min_freq value to 50 didn’t seem to help). Is there any way apart from preprocessing the text, which I’d like to avoid for a language model, to generate a vocabulary with less memory requirements ?

Hope this was clear enough. Thanks in advance!

Kind regards,

Theodore.

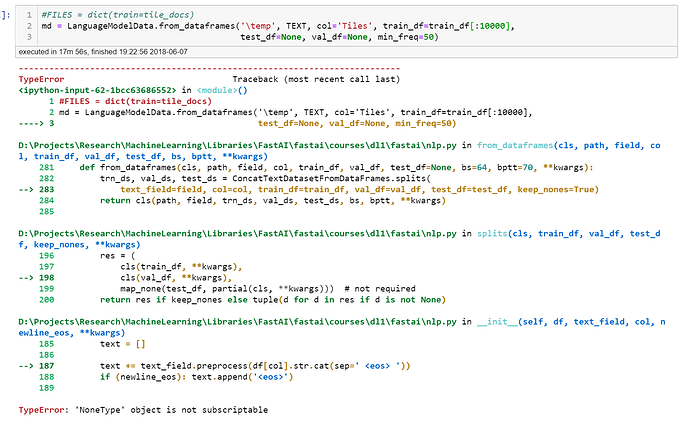

Just wanted to add that I tried on a much smaller subset of the data and I encountered a different error this time. I’m starting to think that I’m using the command in the wrong way. The image below shows what I call.

I don’t have any validation or training sets since I’m only trying to generate the language model and the word embeddings. I hope this is possible, perhaps I’ve misunderstood the whole thing?

Kind regards,

Theodore.

Try giving it val_df, same as train_df. As you said, we don’t care about validation, so it trains and validates on train set ie train_df.

val_df=train_df[…]

1 Like

Thanks @urmas.pitsi, I realized that after a while! The only error left is the memory error which is something I need to solve before running the model data command I guess.

1 Like

Aha so there is a max vocabulary! Thanks for pointing this out, I’m still new at the library and options. That should do it yes.

At the moment I am also testing the lower bs and bptt values to check. It got me further down the process but still run out of memory. Will adjust vocabulary and see what happens. Thanks!

Next steps you could try:

- Decrease model size:

- nh: 1150 to 500?

- emb sz 400 to 200 or lower. If you’re using precalculated embeddings then have to choose based on particular emb file:400,200,50 etc

- nl: 3 layers to 2?

Thanks for the advice @urmas.pitsi.

Weird thing though is that it’s not the GPU memory running out (so not training) but the actual vocabulary (which I’m guessing does not happen on GPU). I’m trying to kind of look at my RAM usage while creating the vocabulary and I can see it going pretty high at some point. Wonder if there is some way to facilitate this on bigger corpora? Is it the unique token that increases memory usage or the total number of words for example?

In any case, I’m using a max_vocabulary now of 50000 (seems to fit). I’m guessing those are the first 50000 encountered, or is it the 50000 with the highest frequency? Guessing the first, since the second would probably require to store all the information in the corpus, which would blow up memory usage. Sorry a bit of a newbie on this one.

Kind regards,

Theodore.

I would assume that max vocab keeps 50000 most frequent ones. Actually I am pretty sure it does that way.

Source reads (text.py : numericalize_tok):

freq = Counter(tokens)

int2tok = [o for o,c in freq.most_common(max_vocab) if c>min_freq]

NLP is memory killer. I just recently upgraded RAM from 16GB -> 32GB.

High RAM usage comes from big vocabulary (corpus). Especially because everything is read into dictionary (hash map). I am sure one can optimize it to make it work faster in lower RAM situations, but I quess it would just easier to add more RAM

Yeah that is so true! I just ordered my second 32gb RAM, hope it comes soon!

Thanks for the help and clarifications. I’ll check if max_vocab solves the issue then, it might not if I still need to store every token.

1 Like

actually you can modify existing loader quite easily or build your own.

Currently fast.ai loads everything into memory at once, but you don’t have to do that. You just go line-by-line or chunk-by-chunk and build your vocab-dictionary more memory efficiently that way.

‘https://www.reddit.com/r/Python/comments/3txgnj/reading_a_file_bigger_than_current_ram_memory/’

current fast.ai implementation ‘text.py’: ‘def texts_labels_from_folders(path, folders):’

texts.append(open(fname, 'r').read())

if you just replace this read() by looping then you should be fine. And this dictionary building is once-only step: after building, store it for later use.

1 Like

Thanks I should give all that a try. By the way I tried the max_vocabulary and got an error:

TypeError: init() got an unexpected keyword argument ‘max_vocabulary’

Was I not supposed to put it at the model data object?

Try this:

md = LanguageModelData.from_text_files(PATH, TEXT, **FILES, bs=bs, bptt=bptt, min_freq=???, max_size=???)

you can check the length of vocab by:

len(TEXT.vocab)

sorry, I got you a bit confused here! The thing is that lesson-4 uses ‘nlp.py’ that is deprecated and current version is ‘text.py’ (look: …fastai/courses/dl2/imdb.ipynb). I was talking with current version in mind, but it works old way also. I just double checked lesson-4 to be sure.

1 Like