If you make it a separate field in your CSV, then it’ll automatically be tagged for you when you import it.

Hi @Jeremy

Thanks, but I don’t quite follow. I was thinking along the lines that the title content should probably hold more weight than the body of the abstract as it’s the abstract of the abstract if you will…

If my goal later is to do topic modeling, would it help to mark the title content (that I currently have in a separate column and merge with the body text col for a joined col) as more important for the model?

If I do, would that be at the language model learning step, or rather at the classification trainman step…

Sorry if I ask the wrong questions - pretty new to NLP…

Put your title and doc in different fields in a CSV. Then it’ll be tagged by the dataset automatically. If the model finds that tag is more important, it’ll use it automatically.

Oh wow. That’s cool. Have to read up into the docs a bit more it seems

https://github.com/fastai/fastai/blob/master/examples/tabular.ipynb

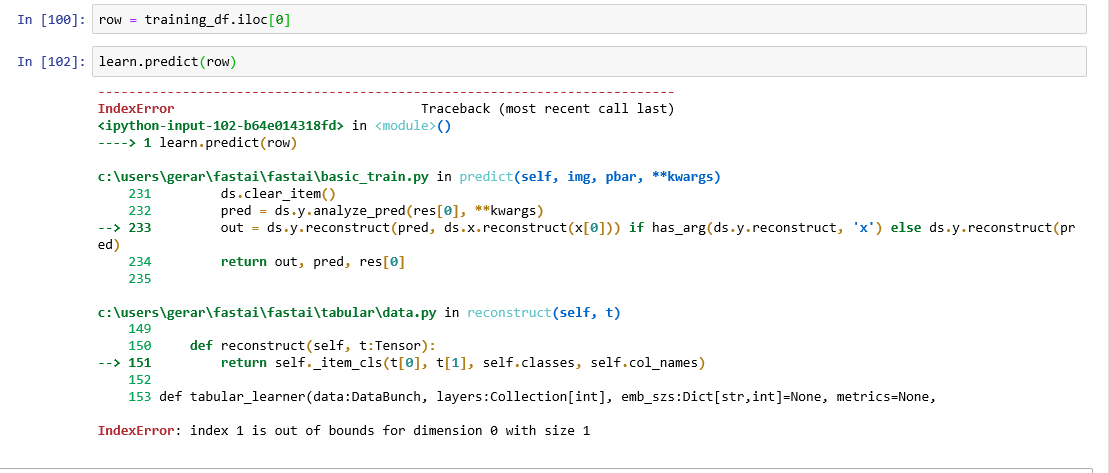

Looks like there’s something wrong with the example

On the section with the prediction of the tabular data I’m getting an error.

You can set the batch size as follows (it’s in the updated course-v3)

bs =24

data_lm = TextLMDataBunch.load(path, 'tmp_lm', bs=bs)

You can specify the settings of max_vocab and the language as follows.

txt_proc = [

TokenizeProcessor(tokenizer=Tokenizer(lang='nl') ),

NumericalizeProcessor(min_freq=1, max_vocab=10000 )

]

data_lm = (TextList.from_df(df, cols='text', processor=txt_proc)

.random_split_by_pct(0.1)

.label_for_lm()

.databunch())When we want to train the language model for our own dataset on the wiki103 LM. Why don’t we have to align the vocab of our new dataset (IMDB) with the Wiki103? For example like this.

data_lm = (TextList.from_folder(path, **vocab=data_lm.vocab**)

Like we do when we want to use the Classifier.

data_clas = (TextList.from_folder(path, vocab=data_lm.vocab)

Thanks! This is great.

@mb4310 I’m curious. Do you ever run these as an ensemble?

Hi,

Is there a way to use the collaborative filtering approach with additional metadata?

Let’s say I have the exact same dataset as discussed in the class. But additionally I have metadata for both movies (e.g genre, release date, … ) and users (eg. age, occupation …). How can I create I NN that uses a collaborative filtering approach but also takes in account metadata???

of course this works. You probably have to write your own Items and ItemLists as defined in tutorial 3 (see docs) and them maybe define forward / collate functions. Should be pretty straight forward to extend

I am having trouble understanding how this would work though. How would the Matrix Factorization process look like?

I assume you have meta data that is only based on the interactions? Like lets say the distance between the main site of the movie’s plot and the home address of the user? Then you would need to hook into the model after the factorization has taken place and concatenate your additional dimensions I would assume. But I haven’t done this before, so maybe someone more experienced could help here  But since you can basically add layers wherever you want at the pytorch level (by overriding some fastai things) I assume this shouldn’t be too hard. If your metadata is only related to one of the items, then you can just append it before factorization and run everything like you normally would.

But since you can basically add layers wherever you want at the pytorch level (by overriding some fastai things) I assume this shouldn’t be too hard. If your metadata is only related to one of the items, then you can just append it before factorization and run everything like you normally would.

I do not have metadata for the interaction. Sorry if I use the term metadata incorrectly here.

What I mean is information like age, occupation, nationality for the user and information like genre, budget, oscars won for movies.

The idea of concatenating this features with the embedding vector sounds interesting. But I cannot wrap my head around how exactly this could be done. Thanks for the response!

Hey guys, how do I use ULMFit for text similarity?

Is there a straightforward way to create a TextDataBunch for a paired text corpus? Where the model inputs are two different text strings.

yes, see 3rd tutorial on custom items and itemlists

Okay, so what you need to do (imho) is have a look at the code of collab data bunch and see how items are encoded. If each item is only encoded as a number, you need to create custom items and item lists (see 3rd tutorial) that accept vectors. These vectors describe your item in vector space. (If I understood things correctly as a by-product you sort of get latent factors that you could use to compute item similarity if you’re at all interested in that). So then it wouldn’t be: User 1 likes item number 4, but rather User(x1, x2, x3) likes item(z1, z2, z3) where x and z are features of users / items. Is this clear at all?

Thanks for following along my thought process and pointing out some interesting ideas.

But I can not see how something like this could work in a neural net with only one layer. All we do is multiply 2 vectors of latent factors (embeddings) to get a single number (the rating) as a result. The values of those 2 vectors is what the NN learns.

So is your idea to concatenate the embeddings with another vector containing weights for the metadata features?

Yes, exactly.