Hi all,

I am running the Lesson - 3 - BIWI Head Pose notebook.

I noticed that the notebook ends abruptly after creating the augmented dataset. When I try to use this dataset to train my model further, I get an error.

Here is my code:

learn2 = cnn_learner(data, models.resnet34)

learn2.lr_find()

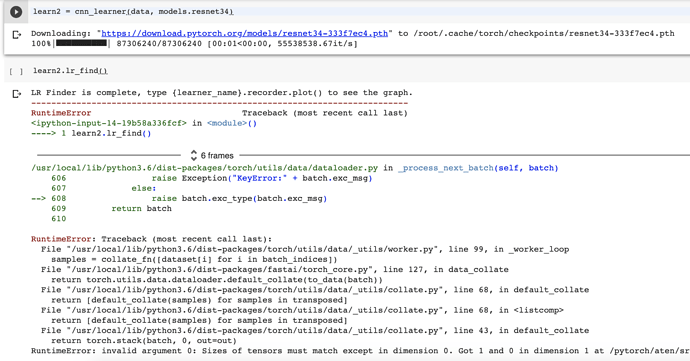

The error occurs while running lr_find():

LR Finder is complete, type {learner_name}.recorder.plot() to see the graph.

---------------------------------------------------------------------------
RuntimeError                              Traceback (most recent call last)
<ipython-input-14-19b58a336fcf> in <module>()
----> 1 learn2.lr_find()

6 frames
/usr/local/lib/python3.6/dist-packages/torch/utils/data/dataloader.py in _process_next_batch(self, batch)
    606             raise Exception("KeyError:" + batch.exc_msg)
    607         else:
--> 608             raise batch.exc_type(batch.exc_msg)
    609         return batch
    610

RuntimeError: Traceback (most recent call last):
  File "/usr/local/lib/python3.6/dist-packages/torch/utils/data/_utils/worker.py", line 99, in _worker_loop
    samples = collate_fn([dataset[i] for i in batch_indices])
  File "/usr/local/lib/python3.6/dist-packages/fastai/torch_core.py", line 127, in data_collate
    return torch.utils.data.dataloader.default_collate(to_data(batch))
  File "/usr/local/lib/python3.6/dist-packages/torch/utils/data/_utils/collate.py", line 68, in default_collate
    return [default_collate(samples) for samples in transposed]
  File "/usr/local/lib/python3.6/dist-packages/torch/utils/data/_utils/collate.py", line 68, in <listcomp>
    return [default_collate(samples) for samples in transposed]
  File "/usr/local/lib/python3.6/dist-packages/torch/utils/data/_utils/collate.py", line 43, in default_collate
    return torch.stack(batch, 0, out=out)
RuntimeError: invalid argument 0: Sizes of tensors must match except in dimension 0. Got 1 and 0 in dimension 1 at /pytorch/aten/src/TH/generic/THTensor.cpp:711

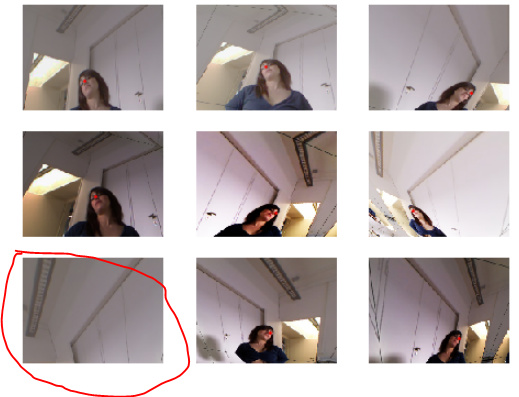
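My reading of the last line of the traceback, offered as a guess rather than a confirmed diagnosis: the collate step stacks the per-sample tensors into one batch, and that only works when every sample has the same shape. "Got 1 and 0 in dimension 1" would then mean that one sample carries 1 point while another carries 0 points. The sketch below mimics that shape check in plain Python. `stacked_shape` is a hypothetical helper written for illustration, not a PyTorch or fastai function, and the shapes `(1, 2)` and `(0, 2)` are my assumption about what the label tensors look like.

```python
from typing import Sequence, Tuple

Shape = Tuple[int, ...]


def stacked_shape(shapes: Sequence[Shape]) -> Shape:
    """Return the shape of a batch built by stacking samples along a new dim 0.

    Raises RuntimeError if the per-sample shapes differ, which is the
    condition the collate step in the traceback appears to be hitting.
    """
    first = shapes[0]
    for shape in shapes[1:]:
        if len(shape) != len(first):
            raise RuntimeError("Samples have a different number of dimensions")
        for dim, (a, b) in enumerate(zip(first, shape)):
            if a != b:
                # dim + 1 because stacking adds a leading batch dimension
                raise RuntimeError(
                    "Sizes must match except in dimension 0. "
                    f"Got {a} and {b} in dimension {dim + 1}"
                )
    return (len(shapes),) + tuple(first)


# Two samples that each hold one (y, x) point stack without trouble.
print(stacked_shape([(1, 2), (1, 2)]))

# One sample with one point and one sample with no points cannot be stacked.
try:
    stacked_shape([(1, 2), (0, 2)])
except RuntimeError as err:
    print(err)
```

If this reading is right, the thing to look for is a sample in the augmented dataset whose label ends up with no points at all.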

I have checked the dataset creation code, and the only difference from the previous version is that it uses a different, customized set of transforms.

The original dataset code snippet:

data = (PointsItemList.from_folder(path)
        .split_by_valid_func(lambda o: o.parent.name=='13')
        .label_from_func(get_ctr)
        .transform(get_transforms(), tfm_y=True, size=(120,160))
        .databunch().normalize(imagenet_stats)
       )

The later dataset creation snippet, which gives the error:

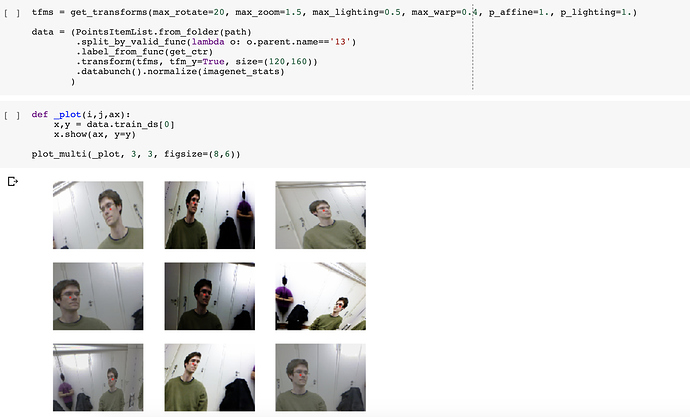

tfms = get_transforms(max_rotate=20, max_zoom=1.5, max_lighting=0.5, max_warp=0.4, p_affine=1., p_lighting=1.)

data = (PointsItemList.from_folder(path)
        .split_by_valid_func(lambda o: o.parent.name=='13')
        .label_from_func(get_ctr)
        .transform(tfms, tfm_y=True, size=(120,160))
        .databunch().normalize(imagenet_stats)
       )
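One possible explanation, which I have not verified: because tfm_y=True applies the same transforms to the label point, a strong zoom, rotation, or warp could push the head-center point outside the image frame. If out-of-frame points are then dropped, that sample would be left with zero points. The plain-Python sketch below only illustrates this geometry. The helper names `zoom` and `keep_inside`, the (y, x) coordinates scaled to [-1, 1], and the example points are all assumptions made for illustration, not fastai code.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (y, x), with the image frame spanning [-1, 1]


def zoom(point: Point, scale: float) -> Point:
    """Zoom about the image center: coordinates move away from (0, 0)."""
    y, x = point
    return (y * scale, x * scale)


def keep_inside(points: List[Point]) -> List[Point]:
    """Drop every point that falls outside the [-1, 1] frame."""
    return [(y, x) for (y, x) in points if -1 <= y <= 1 and -1 <= x <= 1]


# A head center near the middle of the frame survives a 1.5x zoom.
central = keep_inside([zoom((0.0, 0.1), 1.5)])
print(len(central), "point(s) left for the central sample")

# A head center near the right edge is pushed out of the frame and dropped.
near_edge = keep_inside([zoom((0.1, 0.8), 1.5)])
print(len(near_edge), "point(s) left for the near-edge sample")
```

If this is what happens, two things seem worth trying. One is to lower max_zoom, max_rotate, and max_warp so the point stays in frame. The other, as far as I remember the fastai v1 API and which should be checked against the docs before relying on it, is to pass remove_out=False to .transform(...) so that out-of-frame points are kept.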

If anyone has run into this issue before, please reply with your solution in this thread.