Dear All,

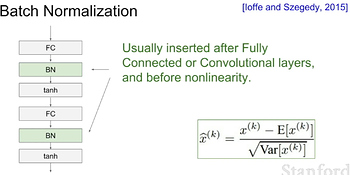

While watching the Stanford course on convolutional neural networks, I noticed that they recommend applying batch normalization after the conv or FC layers but before the nonlinearity.

When I went back to the code in vgg16bn.py, I found that FCBlock applies batch normalization after the nonlinearity:

    def FCBlock(self):
        model = self.model
        model.add(Dense(4096, activation='relu'))
        model.add(BatchNormalization())
        model.add(Dropout(0.5))

I googled a little and found that there's a debate around this point, which is why I'm asking: why did you prefer putting it after the nonlinearity, unlike what the paper says?
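To make the two orderings concrete, here is a minimal pure-Python sketch of what I mean. It is not the course code and does not use Keras: the helper names `relu` and `batch_norm` are my own, the batch values are made up, and I have left out batch norm's learnable scale and shift for simplicity.

```python
import math


def relu(xs):
    # Element-wise ReLU over one unit's values across a batch.
    return [max(0.0, x) for x in xs]


def batch_norm(xs, eps=1e-5):
    # Normalize to zero mean and unit variance over the batch.
    # Simplification: the learnable gamma/beta parameters are omitted.
    mean = sum(xs) / len(xs)
    var = sum((x - mean) ** 2 for x in xs) / len(xs)
    return [(x - mean) / math.sqrt(var + eps) for x in xs]


# Outputs of a single dense unit for a batch of 4 samples (made-up numbers).
pre_activations = [-2.0, -1.0, 1.0, 2.0]

# Order described in the lecture: dense -> batch norm -> ReLU.
bn_then_relu = relu(batch_norm(pre_activations))

# Order used in FCBlock: dense -> ReLU -> batch norm.
relu_then_bn = batch_norm(relu(pre_activations))

print("BN then ReLU:", bn_then_relu)
print("ReLU then BN:", relu_then_bn)
```

The two results differ: with batch norm first, the ReLU receives a zero-mean input and its output is non-negative, whereas with ReLU first, the next layer receives a zero-mean input that includes negative values.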

Regards,

Omar