Oh I missed requirements.txt.

What should this file contain?

I have fastai == 2.5.2 only in this file. Anything else needed?

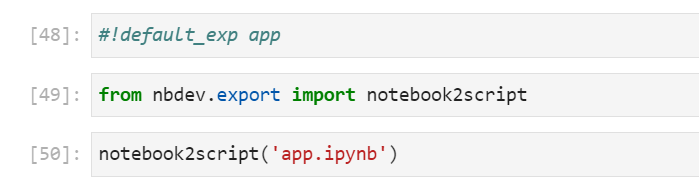

It works now. All I had to do was update JupyterLab from version 2.3 to 3.4. Starting with version 3, ipywidgets are supported in JupyterLab.

@kurianbenoy Now both the widgets and collapsible headings work from Jupyterlab itself.

Wow, that’s great. Thanks for updating.

Thanks to @kurianbenoy, @suvash, @brismith, @mike.moloch, and @tapashettisr for your guesses!!! I’m really grateful to be part of such an engaged and thoughtful community.

My own guess was probably closest to (a) originally–I imagined that the things that the learner had picked up to indicate dogs and snakes would be absent in houses, and therefore those two classes would be relatively suppressed. I find @suvash’s suggestion most compelling: that one of the easiest and most beneficial heuristics for the model to learn was effectively “Never guess ‘OTHER’!”

In the end, I am delighted to share the results of the experiment (run in Colab), which converged–but not on any of the exact options I provided!

I ran fine_tune(20), and here are the first few rows to show that the loss and error rate decrease over training.

| epoch | train_loss | valid_loss | error_rate | time |
|---|---|---|---|---|
| 0 | 1.520274 | 1.346103 | 0.547945 | 00:21 |

| epoch | train_loss | valid_loss | error_rate | time |
|---|---|---|---|---|
| 0 | 0.339380 | 0.362611 | 0.123288 | 00:21 |
| 1 | 0.287089 | 0.089451 | 0.027397 | 00:20 |
| 2 | 0.198841 | 0.015010 | 0.000000 | 00:20 |
| 3 | 0.146187 | 0.003257 | 0.000000 | 00:21 |
| 4 | 0.112802 | 0.001330 | 0.000000 | 00:21 |
| 5 | 0.090765 | 0.000901 | 0.000000 | 00:21 |

I then tested this trained model on 200 images of houses (thanks Duck Duck Go!), and took the mean of the 200 prediction tensors to see which category [“OTHER”, “Dog”, “Snake”] the predictions tended toward:

| OTHER | Dog | Snake |
|---|---|---|
| 1.14% | 80.50% | 18.35% |
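For anyone who wants to reproduce the averaging step, here is a minimal sketch in plain Python. It assumes each prediction is a list of three probabilities in the order [OTHER, Dog, Snake]; the helper name `mean_prediction` and the sample values are made up for illustration and are not the outputs of the experiment.

```python
# Class order assumed to match the post: [OTHER, Dog, Snake].
CLASSES = ["OTHER", "Dog", "Snake"]

def mean_prediction(preds):
    """Average the per-class probabilities over a list of prediction vectors."""
    if not preds:
        raise ValueError("need at least one prediction")
    n = len(preds)
    return [sum(p[i] for p in preds) / n for i in range(len(preds[0]))]

# Made-up probabilities standing in for model outputs on three test images.
sample_preds = [
    [0.02, 0.90, 0.08],
    [0.01, 0.70, 0.29],
    [0.00, 0.80, 0.20],
]

means = mean_prediction(sample_preds)
for name, m in zip(CLASSES, means):
    print(f"{name}: {m:.2%}")
```

With real fastai predictions, the same idea would apply to the stacked probability tensors, averaging over the image dimension.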

So I think that, in the end, the prize has to go to @brismith for best answer (though points to anyone who guessed something like ‘c’)!

In the spirit of his answer, I’ll note that, in retrospect, there may be significantly more overlap between dogs and houses than between snakes and houses, given where these animals are most often photographed. This seems like a great example of unexpected bias in the dataset. Perhaps it would have been most prudent to test on 200 images of grayscale static instead?

Finally, I’m curious if anyone can come up with a solution that accomplishes the goal of: dog images getting correctly labeled, snakes getting correctly labeled, but every other type of image getting labeled “other”, whether it is a picture of an airplane, a shark, a Jackson Pollock, or white noise?

Is it impossible?

Thanks again everyone!

And a quick update, fine_tune(20) on 200 ‘static noise’ images:

| OTHER | Dog | Snake |
|---|---|---|
| 0.65% | 8.88% | 90.47% |

So, you can color me confused! I did not expect to see such a drastic asymmetry between Dog and Snake. What’s your best guess? Is it that perhaps the training dataset contains a black and white picture of a snake, but none of a dog? Or is it that snakes are photographed in noisier environments? I realize this is hard for anyone to guess without access to the specific photos I downloaded from DDG, but just wanted to share.

Maybe use a multi-category classifier (which we will get to at some point) that looks for dogs and/or snakes; it should be more confident that a house (or static noise) is neither. I accept the prize, as long as it isn’t a snake.
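To make the multi-category idea concrete, here is a rough sketch of the decoding step in plain Python. It assumes the model emits one independent logit per label (Dog, Snake) and that "OTHER" is simply the case where no label clears a threshold. The logits, the threshold of 0.5, and the `decode` helper are all hypothetical, and nothing in this thread confirms how well this works in practice.

```python
import math

LABELS = ["Dog", "Snake"]  # note: no explicit "OTHER" class is trained

def sigmoid(x):
    """Map a raw logit to an independent probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def decode(logits, thresh=0.5):
    """Return every label whose probability reaches thresh, or ["OTHER"] if none does."""
    probs = [sigmoid(z) for z in logits]
    found = [label for label, p in zip(LABELS, probs) if p >= thresh]
    return found or ["OTHER"]

print(decode([3.0, -2.0]))   # made-up logits for a dog-like image
print(decode([-4.0, -3.5]))  # made-up logits for an image that is neither
```

Unlike a softmax over three classes, the per-label probabilities here do not have to sum to one, so the model is not forced to pick between Dog and Snake for a house or for static.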

This is the way.

Hi Jona, it’s interesting to see the results of actual experiments. Thanks for sharing. I’ll file this along with everything else I’m learning.

Maybe try with test images from searches like “dog in woods” or “dog in park”.

I definitely favor using Lab currently. I don’t get confused by mixing notebook tabs with the regular tabs where I look up stuff like SO or documentation. I also like some of the better-looking dark theme extensions.

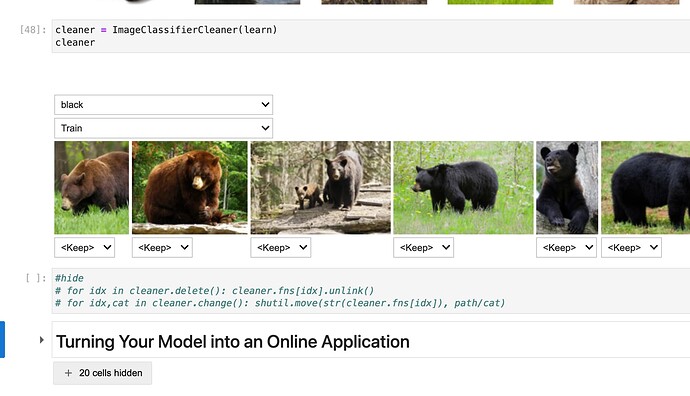

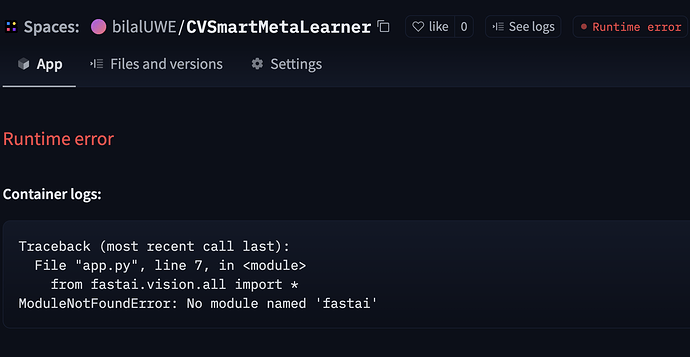

Hi, I’ve created a Gradio app that runs perfectly on my local computer. I pushed it to Hugging Face Spaces expecting the same result, but the app now gives me the following runtime error:

I was expecting fastai to be installed automatically. Any idea what might have gone wrong?

Hi @bilalUWE, you may need a requirements.txt with these lines in it:

fastai

scikit-image

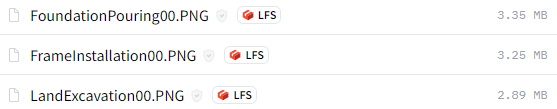

Hi @mike.moloch, thanks. It worked. My construction stage detector is now up and running at the following link: Cvsmart Meta - a Hugging Face Space by bilalUWE

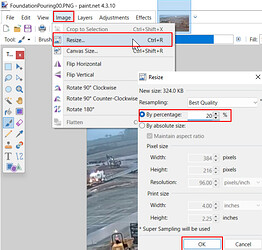

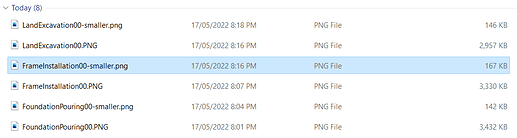

Nice application. However, each image takes about 150 seconds to classify.

I believe this is because of the large size of your 3MB sample images…

Using the desktop app Paint.net, I resized your example pics to 20% of the original pixels, down to ~150kB, and the classifier time dropped to 3 seconds while giving the same results.

Thanks @bencoman for describing the process to reduce the image size. This is much appreciated. Glad you liked the app.

I’ve updated fastai now to surface the base parameter in FastDownload, in case you need it. You can also set archive and/or data to an absolute path to download/extract into that path. I’ve also updated the docs to remove that outdated docstring.

Let me know if you hit any issues.

FYI you can also use the resize_images function in fastai to do this in one line of code:
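The snippet from this post did not survive in the thread as captured here, so the following is only a guess at what such a call could look like. `resize_images` is in `fastai.vision.utils`; the wrapper name `shrink_examples`, the folder names, and the `max_size` value are hypothetical.

```python
from pathlib import Path

def shrink_examples(src, dest, max_size=400):
    """Write resized copies of the images in src to dest, capping the longest side at max_size."""
    # Imported lazily so this sketch can be loaded without fastai installed.
    from fastai.vision.utils import resize_images
    resize_images(Path(src), max_size=max_size, dest=Path(dest))

# Hypothetical usage, assuming an "examples" folder of sample images:
# shrink_examples("examples", "examples_small", max_size=400)
```

Images already smaller than `max_size` on both sides should be left at their original dimensions.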

Awesome! I’ll test this out.

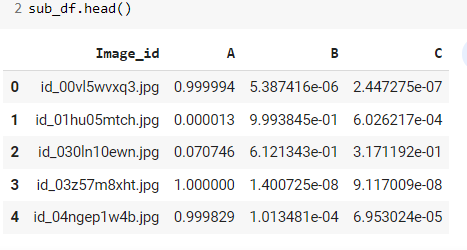

I am trying to solve a multilabel image classification problem. The evaluation metric for the challenge is Log Loss.

Any idea how to pass log loss as the metric instead of error_rate?

My final submission file is shown below, and I have to format my predictions this way.

It seems that valid_loss and the cross-entropy loss metric give the same values. I guess that for a multiclass problem the learner automatically uses cross-entropy loss as the reported validation loss.
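That match is expected if the problem is single-label multiclass: log loss over class probabilities is the same quantity as cross-entropy, namely the mean negative log of the probability assigned to the true class. Here is a small sketch in plain Python to check a value by hand. The `log_loss` helper and the sample probabilities are made up for illustration; a truly multi-label task would use binary cross-entropy per label instead.

```python
import math

def log_loss(probs, targets, eps=1e-15):
    """Mean negative log-probability of the true class (multiclass log loss)."""
    total = 0.0
    for p, t in zip(probs, targets):
        # Clip to avoid log(0) when a probability is exactly 0 or 1.
        p_true = min(max(p[t], eps), 1 - eps)
        total += -math.log(p_true)
    return total / len(targets)

# Made-up predicted probabilities for three samples over three classes.
probs = [
    [0.7, 0.2, 0.1],
    [0.1, 0.8, 0.1],
    [0.3, 0.3, 0.4],
]
targets = [0, 1, 2]  # index of the true class for each sample

loss = log_loss(probs, targets)
print(f"log loss: {loss:.6f}")
```

Only the probability given to the correct class enters the sum, which is why a confident wrong prediction is penalized so heavily.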