That makes sense! I did try printing out what zip returned, and it just told me that it was a zip object

Thank you for a great lesson yesterday!

That makes sense! I did try printing out what zip returned, and it just told me that it was a zip object

Thank you for a great lesson yesterday!

@jeremy will the Dog Breeds Walkthrough also be setup on AWS so one can step thru it?

When center cropping an image (see red boarder), we may loss the important details (head and paws in this case). Should we use image re-sizing from rectangular to square instead?

We’ve had this question a few times already - please do a ‘search’ on the forum before posting. This error means that you’re not using python 3.6.

Excellent question. This type of resizing is what keras does by default. I’ve found that it seems to generally work less well, since it has to learn how images look different depending on how they’re squeezed. But we do have the ability to use this squeezing approach in fastai - maybe @yinterian could show an example?

No, because it’s an active competition, so I’m not allowed to under kaggle rules. But replicating it yourself would be a great exercise.

This is going to depend on the problem. For many problems center cropping will be fine for other problems you may want to resize. You can do both with the fast.ai library.

Curious if anyone has tried ResNet style architecture for language models. Would be interesting to see what kind of features initial layers would capture and how starting with smaller sequences and retraining on larger ones (like how we did in class with starting with smaller size images and changing to larger ones) would affect the model.

A recent architecture called ‘Transformer’ uses a ResNet style block for NLP. It worked really well.

In the lecture, Jeremy says that we are using Adam and the fastai library is trying to find an optimal learning rate given this setting.

Would it be possible to support image sizes that aren’t squares? For now it looks like sz only accepts one integer value which it then turns into a tuple for resizing.

Statement: In the lecture, Jeremy says that precompute = True will take time 1st time and then utilize these precomputed activations in future (Assuming AWS).

In part 1 v1 , we used one hot encoding for transforming the RGB values. In this version(v2) we haven’t, is this related to Resnet architecture.

Statement: In the lecture Jeremy says that the technique SGDR to come out of local minima?

If I run learn.lr_find() after training for a bit does it affect my trained weights or its run independently?

What does ps do?

learn = ConvLearner.pretrained(arch, data, precompute=True, ps=0.5)

Checked the code and it says ps is dropout parameters?

But no idea what that means.

Thats right. Dropout is a hyper parameter to control overfilling by dropping random % of nodes in a layer.

Ok. Thanks… And how do I notice overfitting, so that I can use the ‘ps’ hyper parameter?

When your train score is better then validation score.

How does @jeremy know that he has to pass the .jpg suffix to the file names in the dog breed comp?

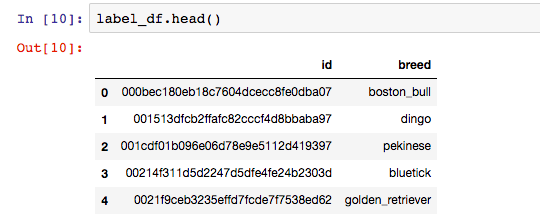

Looking at the data with label_df.head does just shows us the file name.

So wondering how he knew that .jpg was requried?