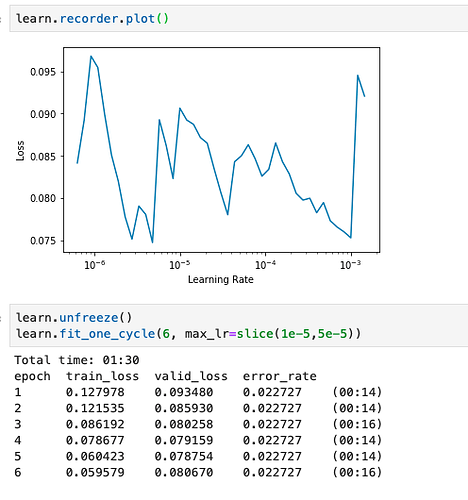

I’m getting the following plot after running learn.lr_find(). I’ve chosen the lr range that corresponds to the middle drop. I can see that the valid_loss is beginning to increase on the 6th epoch, but what can be the reason for a constant error_rate? Let me know if more context is required.

P.S. This is the case when lr is in range (1e-6,4e-6) too.

Updating the fastai library fixed it.

Answered here.

Hope this helps

Yes. All non-apple and non-orange images in the training set should be classified as Other.

No, it’s not a random direction, but the direction of the gradients. The “stochastic” refers to the fact that we draw batches randomly.
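To make that distinction concrete, here is a minimal sketch in plain Python (no fastai), assuming a toy noise-free linear regression problem. The helper names `make_data`, `batch_gradient`, and `sgd` are made up for illustration. The only random part is which samples land in each batch; the update itself always steps against the gradient computed on that batch.

```python
import random

def make_data(n=200, w_true=3.0, b_true=-1.0, seed=0):
    # Toy dataset (an assumption for illustration): y = w_true * x + b_true, no noise.
    rng = random.Random(seed)
    xs = [rng.uniform(-1, 1) for _ in range(n)]
    ys = [w_true * x + b_true for x in xs]
    return xs, ys

def batch_gradient(w, b, xs, ys):
    # Gradient of the mean squared error over the given batch.
    n = len(xs)
    gw = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
    gb = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
    return gw, gb

def sgd(xs, ys, lr=0.1, batch_size=16, epochs=50, seed=1):
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    idx = list(range(len(xs)))
    for _ in range(epochs):
        # The "stochastic" part: batches are drawn in random order.
        rng.shuffle(idx)
        for start in range(0, len(idx), batch_size):
            batch = idx[start:start + batch_size]
            bx = [xs[i] for i in batch]
            by = [ys[i] for i in batch]
            gw, gb = batch_gradient(w, b, bx, by)
            # The direction is not random: step against the batch gradient.
            w -= lr * gw
            b -= lr * gb
    return w, b

xs, ys = make_data()
w, b = sgd(xs, ys)
print(w, b)
```

Changing `seed` in `sgd` changes the order of the batches, and so the path taken, but each step still follows the gradient of whatever batch was drawn.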

Rename them with the most correct labels from a human perspective.

Michael, have you seen this?

As Andrew Ng has said, it is like worrying about overpopulation on Mars.

I removed that one since it’s not the approach we recommend.

Please read the etiquette guide in the FAQ.

I’m facing the same issue and agree that replacing valid_ds with train_ds is not OK. Looking at the code of the ClassificationInterpretation class, it seems that it only works with the validation set. It would be great if it accepted a parameter to select the dataset.

This may help.

That’s OK. Autoencoders are too noisy anyway, which means the NN learns the quirks of the autoencoder, rather than what really makes an image a member of a class.

Jeremy explains this very clearly in the video.

Thanks, I’ll be sure to check it out. I am using the same dataset, so this could be really helpful!

@miwojc has kindly shared this paper by Jeremy on another forum. It may help.

Sorry. I did not see that you had already shared the same resource. Thanks.