Then your training loss will increase, but your validation loss will decrease, which is what we’re looking for. Your validation loss will still be higher than your training loss. There are cases when your training loss is lower than your validation loss. But it’s not necessarily due to using dropout. It could be due to other factors which I’m sure someone else can explain better.

What happens is that your model becomes more generalizable to unseen data, so the validation error should decrease, but it will still be greater than the training error.

In the example of a bear classification, a teddy bear image with lots of texts was not deleted when cleaning up the data set. Say if there are many images like this in a training set but not in a test set, would you recommend to crop the images with texts to train?

I think it’s ok to randomly split data if the data has no intrinsic serial ordering. In other words, the label of a given data point does not depend on the labels of data points that come before it in the series.

If this is incorrect, perhaps someone knowledgeable on the subject such as @Rachel could weigh in with a better answer?

The y-axis in the plot is the loss function, i.e. the cost function we are trying to minimize in stochastic gradient descent.

This depends on the training data and class distribution. For example, in the kaggle carvana competition there were images of cars from multiple angles. So in this case you wouldn’t want to have the same car showing up in both the training & validation set. This can also be an issue with medical data if there are multiple images in the dataset belonging to the same patient, then the training and validation sets should (ideally) not contain images from the same patient.

The general rule of thumb is that you want your validation set to be as good a representation as possible of a test set (unlabeled data that the model hasn’t seen). So if your validation set contains images that your model has already seen then you aren’t really getting a good idea of how good your model will generalize to unseen/unlabeled data.

To be clear, though, ultimately you should run tests and empirically check the results. Whatever works best in practice isn’t always what you might have expected! Run lots of experiments and have fun with it ![]()

Here’s the paper that @jeremy mentioned that discusses methods for dealing with class imbalance in convolutional neural networks:

A systematic study of the class imbalance problem in convolutional neural networks

Wow, the FileDeleter is such a cool concept. There are still some features I would love to see, but it is a great start. It’s so cool that it runs inside of Jupyter as its own application.

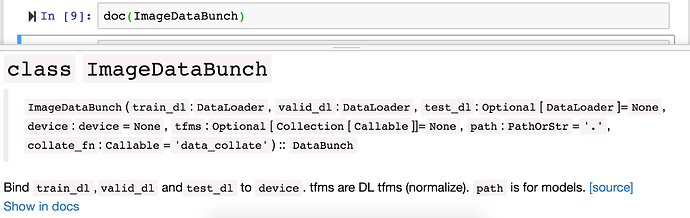

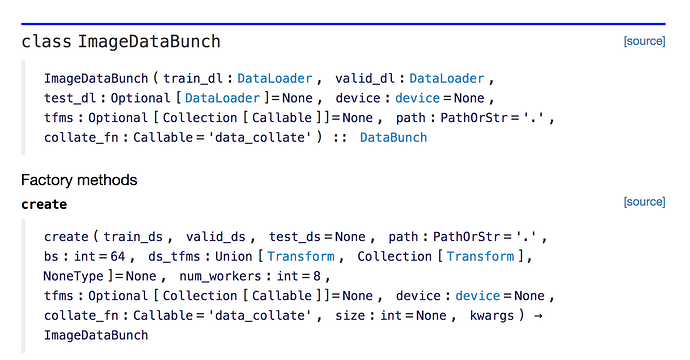

you can search using doc(ImagaeDataBunch) in the notebook.

Click on show in docs button to pop up the documentation page which looks like this.

doc() function supports all the functions and classes as long as you have imported all.

from fastai import *

The Deleter widget is really handy, however it does not show the actually or predicted labels, so that you won’t be able to immediately be sure about if you should delete the data

In whatever function is giving you the error, you want to transform the pytorch tensor into a numpy array before calling the function. But, depending on the tensor, you might need to detach() it first. For example:

instead of

plt.scatter(x[:,0, x@a)

do this

plt.scatter(x[:,0],(x@a).detach().numpy());

get_image_files(validatior_dir)

should do trick

you can decrease the percentage if you have really big dataset. As long as you have a couple of hundreds of samples/class in the validation set you should generally be ok, and increasing the training set might be more beneficial.

Quite a few of them seem to be for inference on the edge; on embedded platform. A la movidius.

Good find! Want to add it to the lesson 2 wiki topic?

Done. I found another paper that shows how a variational autoencoder can be used to generate synthetic examples from the minority class, similar to the SMOTE and ADASYN techniques used in traditional ML. Added it as well.

What’s happening here? Everytime learn.fit_once_cycle(4) is called, expectance of particular size of tensor is changing everytime. Moreover Isn’t create_cnn supposed to handle this from data ? I’m on v1.0.18 @sgugger

1

2

3

4

5

Maybe I’m wrong bit is it not truth. That SGD moving in one step in random direction?

M

Hi,

After running this in the terminal gcloud compute ssh --zone=ZONE jupyter@INSTANCE_NAME -- -L 8080:localhost:8080

I couldn’t find course-v3 folder. I think i’m doing something wrong, Can anyone please guide me.

![]()

Thanks.

Solved.