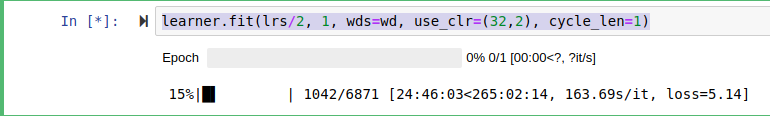

I’m working on the translate.ipynb notebook and am stuck in the first training epoch of `learner.fit(lrs/2, 1, wds=wd, use_clr=(32,2), cycle_len=1)`. It feels as if my model somehow ended up running on the CPU instead of my 1080 Ti:

Is this a normal training speed for a multi-layer LSTM language model, or should I look for bugs in my setup?
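A quick way to rule out the "stuck on CPU" case is to ask PyTorch directly whether CUDA is visible and where the model's parameters actually live. Below is a minimal sketch. `describe_devices` is a hypothetical helper, and the small `nn.LSTM` is only a stand-in for the notebook's real model. In fastai 0.7 the underlying module should be reachable as `learner.model`, but treat that as an assumption.

```python
import torch
import torch.nn as nn


def describe_devices(model: nn.Module) -> dict:
    """Hypothetical helper: report CUDA visibility and the devices holding the model's parameters."""
    cuda_ok = torch.cuda.is_available()
    # Collect the distinct devices (e.g. "cpu", "cuda:0") that the parameters sit on.
    param_devices = sorted({str(p.device) for p in model.parameters()})
    return {
        "cuda_available": cuda_ok,
        "gpu_name": torch.cuda.get_device_name(0) if cuda_ok else None,
        "param_devices": param_devices,
    }


if __name__ == "__main__":
    # Stand-in model (assumption): a small 2-layer LSTM, not the notebook's actual seq2seq model.
    model = nn.LSTM(input_size=8, hidden_size=16, num_layers=2)
    if torch.cuda.is_available():
        model = model.cuda()
    print(describe_devices(model))
```

If `cuda_available` is `False`, or `param_devices` only lists `cpu`, the slowdown is a setup problem rather than an inherent cost of the model.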

Thanks