Thanks a lot for the tweet @jeremy !

Very interesting! so I’ve tried 2 things (hope they include what you had in mind): the bottom line is that your idea of rescaling the whole seems to work amazingly. The rescale factor I wrote previously might also help, but that’s less obvious ![]() .

.

I’ll try to do more robust experiments with all this, and see if we can prove more robustly that those rescaling factors help.

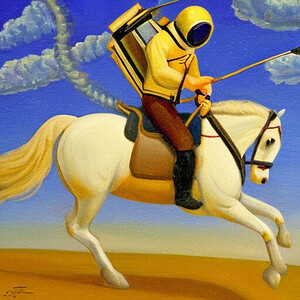

1. regular guidance (7.5) followed by rescale to match the original t

1.a Reminder: original images

pred = u + g*(t-u)

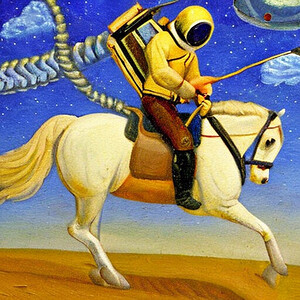

1.b With the “whole” rescaling

pred_nonscaled= u + g*(t-u)

pred = pred * torch.norm(u)/torch.norm(pred_nonscaled)

→ seems to add a lot of details, without changing the picture!!

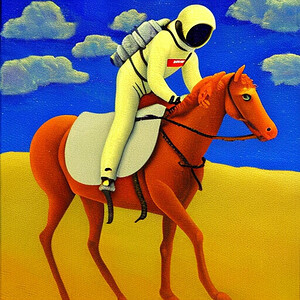

2. rescaled guidance update (0.15) followed by rescale to match the original t

2.a Reminder: original images

pred_nonscaled= u + g*(t-u)/torch.norm(t-u)*torch.norm(u)

(note the rider’s foot missing on the right picture)

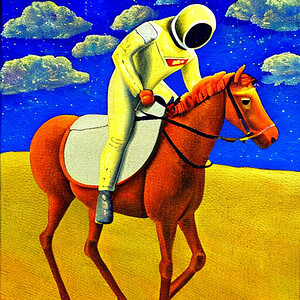

2.b With the “whole” rescaling

pred_nonscaled= u + g*(t-u)/torch.norm(t-u)*torch.norm(u)

pred = pred_nonscaled * torch.norm(u)/torch.norm(pred_nonscaled)

The whole term rescaling definitely seems to help here too! Notably, it fixed the foot artifact observed previously