I am a bit puzzled by the use of `detach` / `with torch.no_grad()` when computing the BN statistics.

As far as I understand, it means the statistics calculation is not part of the backprop (?), which contradicts what I remember about BN.

In addition, in LayerNorm and InstanceNorm, `detach` / `with torch.no_grad()` is not used. Why is that?
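To make the question concrete, here is a minimal sketch of what I mean. It is not the code from the screenshots: `normalize`, `input_grad` and the `detach_stats` flag are hypothetical names made up for illustration, and the normalization is a bare per-feature version without the learnable scale and shift. It compares the input gradient when the batch statistics are part of the graph with the gradient when they are detached.

```python
import torch

def normalize(x, detach_stats, eps=1e-5):
    # Per-feature batch statistics, computed over the batch dimension.
    mean = x.mean(dim=0, keepdim=True)
    var = x.var(dim=0, unbiased=False, keepdim=True)
    if detach_stats:
        # Treat the statistics as constants: no gradient flows through them.
        mean, var = mean.detach(), var.detach()
    return (x - mean) / torch.sqrt(var + eps)

torch.manual_seed(0)
x = torch.randn(8, 3)
w = torch.randn(8, 3)  # arbitrary weights so the loss depends on every output

def input_grad(detach_stats):
    # Fresh leaf tensor each time so the two gradients do not accumulate.
    inp = x.clone().requires_grad_(True)
    loss = (normalize(inp, detach_stats) * w).sum()
    loss.backward()
    return inp.grad

g_full = input_grad(detach_stats=False)     # statistics are part of backprop
g_detached = input_grad(detach_stats=True)  # statistics treated as constants

# The two gradients are different tensors, so detaching changes training.
grads_differ = not torch.allclose(g_full, g_detached)
print(grads_differ)
```

With the statistics in the graph, the gradient for each feature sums to roughly zero over the batch, because shifting the whole batch by a constant does not change the normalized output. With detached statistics that property is lost, which is why I would expect detaching to matter.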

Thanks

Nadav

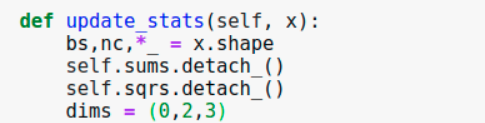

The simple BN:

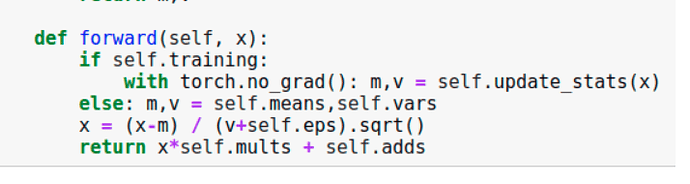

The RunningBatchNorm: