Some questions

-

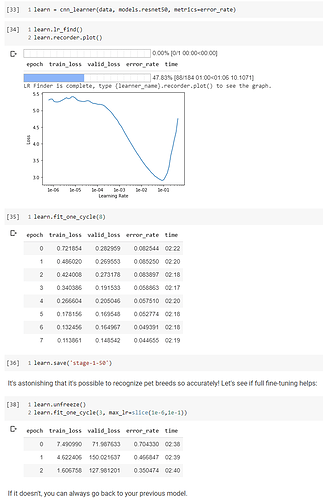

are these the normal steps?

i.e lr_find, then fit_one_cycle , unfreeze then retrain based on the max_lr parameter?

-

did I use the slice correctly?

3)the reason is not better than 0.04 is it because it requires more epoch as 3 only brings down to 0.35.

- Is it usually quite difficult to beat the baseline of 0.04 and is it ok to export this model if this is the better one?

Thanks.

This is what I found unusual in your code:

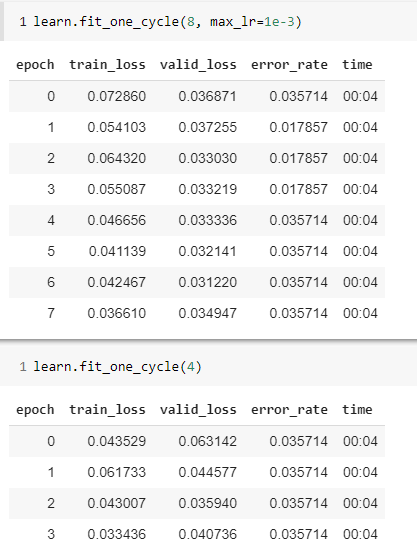

You do not set a learning rate in your first training cycle [35]. Try this instead, for example:

```python
learn.fit_one_cycle(8, max_lr=1e-3)
```

After this, you should again use lr_find to get a new learning rate for your 2nd cycle.
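
The order of operations suggested here can be sketched as a small Python function. This is a hedged sketch, not fastai's own code: it only assumes a learner object with fastai v1-style `lr_find()`, `fit_one_cycle()` and `unfreeze()` methods. `pick_lr` is a hypothetical hook standing in for the manual step of reading a learning rate off the LR finder plot, and the `RecordingLearner` stub, the 2 epochs and the `slice(1e-6, 1e-4)` for the second stage are placeholders chosen only so the sketch runs without fastai or a GPU.

```python
def two_stage_fine_tune(learn, pick_lr, head_epochs, body_epochs):
    """Fine-tune a pretrained model in two stages, re-running the LR finder
    before each fit_one_cycle() call.

    `learn` only needs fastai-v1-style lr_find(), fit_one_cycle() and
    unfreeze() methods. `pick_lr(learn)` is a hypothetical hook for the
    manual step of reading a learning rate off the LR finder plot.
    """
    # Stage 1: the pretrained body is frozen, so only the new head trains.
    learn.lr_find()
    learn.fit_one_cycle(head_epochs, max_lr=pick_lr(learn))

    # Stage 2: make all layer groups trainable. The weights have changed,
    # so run the LR finder again instead of reusing the first value.
    learn.unfreeze()
    learn.lr_find()
    learn.fit_one_cycle(body_epochs, max_lr=pick_lr(learn))


class RecordingLearner:
    """Stand-in for a real Learner: records calls instead of training."""

    def __init__(self):
        self.calls = []

    def lr_find(self):
        self.calls.append(("lr_find",))

    def fit_one_cycle(self, cyc_len, max_lr):
        self.calls.append(("fit_one_cycle", cyc_len, max_lr))

    def unfreeze(self):
        self.calls.append(("unfreeze",))


# Placeholder choices: 1e-3 for the head (as suggested above), then a
# hypothetical discriminative range after unfreezing.
chosen_lrs = iter([1e-3, slice(1e-6, 1e-4)])
learn = RecordingLearner()
two_stage_fine_tune(learn, lambda _: next(chosen_lrs), head_epochs=8, body_epochs=2)
for call in learn.calls:
    print(call)
```

The stub makes the point of the advice visible: `lr_find()` appears twice, once before each `fit_one_cycle()`. With a real Learner you would pick each value by eye after the corresponding `lr_find()`.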


Apologies, I do not understand what you meant by not setting a learning rate at [35]. I don’t think I did; I only set the LR at [38].

Yes Andrew, you did not set an LR there, but I recommend that you do, perhaps using my code example. This is what Jeremy does almost all the time.

Hi @Archaeologist, thanks for the prompt reply.

> `max_lr=1e-3`

Does this mean it will use an LR of 1e-3 consistently from start to end?

I’m currently at Lesson 2 and Jeremy hasn’t used it so far. Is it something that is practised later on?

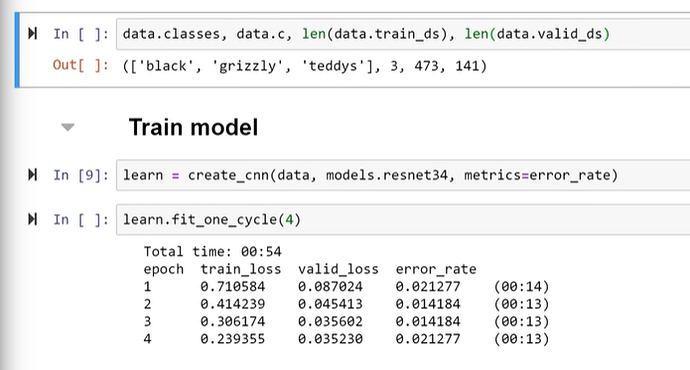

Screenshot from Lesson 2, Jeremy’s lecture

I ran this in the Lesson 2 notebook.

Seems like all roads lead to Rome.

Cheers.

OK, it has been a while since I did Lesson 2…

To clarify: fit_one_cycle() always has a maximum learning rate (max_lr); if you do not set it, it uses the default, which happens to be 1e-3. I did not know this, but in your (and Jeremy’s) example it just happens to be the right value to pick.
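
On the earlier question of whether the learning rate stays at 1e-3 the whole time: as I understand fit_one_cycle(), max_lr is the peak of a schedule, not a constant rate. The sketch below computes a one-cycle schedule in plain Python. The shape (cosine warm-up from max_lr/div_factor to max_lr over the first pct_start of the batches, then cosine annealing down to a much smaller final value) and the defaults div_factor=25, pct_start=0.3 and final_div=1e4 are my assumptions, modelled on fastai v1; check the fastai source for the exact behaviour of your version.

```python
import math


def cos_anneal(start, end, pct):
    """Cosine interpolation: returns `start` at pct=0 and `end` at pct=1."""
    return end + (start - end) / 2 * (1 + math.cos(math.pi * pct))


def one_cycle_lr(step, total_steps, max_lr, div_factor=25.0, pct_start=0.3, final_div=1e4):
    """Learning rate for batch `step` (0-based) of a one-cycle schedule."""
    warm_steps = max(1, round(total_steps * pct_start))
    start_lr = max_lr / div_factor   # where the warm-up begins
    end_lr = start_lr / final_div    # where the annealing ends
    if step < warm_steps:
        # Phase 1: rise from start_lr towards max_lr.
        return cos_anneal(start_lr, max_lr, step / warm_steps)
    # Phase 2: fall from max_lr, reaching end_lr on the last step.
    pct = (step - warm_steps) / max(1, total_steps - warm_steps - 1)
    return cos_anneal(max_lr, end_lr, pct)


# Hypothetical run: 100 batches in total with max_lr=1e-3.
lrs = [one_cycle_lr(s, 100, 1e-3) for s in range(100)]
for s in (0, 15, 30, 65, 99):
    print(f"step {s:2d}: lr = {lrs[s]:.2e}")
```

In this sketch 1e-3 is only reached at a single point (step 30 of 100); the rate is far lower at the very start and at the end.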


The second issue I mentioned is that you do not use the learning rate finder again before your second training. A neural network’s weights change while it trains, so the learning rate that suited the start of training is not necessarily the right one later. In your initial example (where you use lr_find() only once, at the beginning) 1e-3 is right for your first training, but before you call learn.fit_one_cycle() again, you should call lr_find() to get an estimate of a new maximum learning rate.
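
A rough model of what the finder computes may help here: it tries exponentially increasing learning rates, records the loss at each one, and a common rule of thumb is to pick a rate where the loss is still falling steeply. After training, the recorded losses change, so the picked rate can change too. The sweep defaults below (1e-7 to 10 over 100 steps) are what I believe fastai v1 uses, the steepest-slope rule is one heuristic among several, and the loss values are made-up toy numbers, not results from this thread’s model.

```python
import math


def lr_sweep(start_lr=1e-7, end_lr=10.0, num_it=100):
    """Exponentially spaced learning rates, like the range an LR finder tries."""
    return [start_lr * (end_lr / start_lr) ** (i / (num_it - 1)) for i in range(num_it)]


def steepest_descent_lr(lrs, losses):
    """Return the LR at the end of the segment where the loss falls fastest
    per decade of learning rate (a simple steepest-slope heuristic)."""
    best_i, best_slope = None, math.inf
    for i in range(1, len(lrs)):
        slope = (losses[i] - losses[i - 1]) / (math.log10(lrs[i]) - math.log10(lrs[i - 1]))
        if slope < best_slope:
            best_i, best_slope = i, slope
    return lrs[best_i]


sweep = lr_sweep()
print(f"{len(sweep)} LRs from {sweep[0]:.0e} to {sweep[-1]:.0e}")

# Toy curve (made-up numbers): loss drops most between 1e-4 and 1e-3,
# then blows up at large learning rates.
toy_lrs = [1e-6, 1e-5, 1e-4, 1e-3, 1e-2, 1e-1]
toy_losses = [0.70, 0.69, 0.60, 0.40, 0.35, 0.90]
print(steepest_descent_lr(toy_lrs, toy_losses))
```

With a real fastai v1 Learner, I believe the recorded values are available as `learn.recorder.lrs` and `learn.recorder.losses` after `lr_find()`, but treat those attribute names as an assumption.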

Jeremy has a good explanation of learning rates in Lesson 3 or 4, if I remember correctly.

To come back to your initial question:

- are these the normal steps?

  i.e. lr_find, then fit_one_cycle, unfreeze, then retrain based on a NEW max_lr parameter obtained with a second lr_find()

- did I use the slice correctly? Looks good, but I cannot say for sure because it is unclear where you got the learning rates you use.
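
On the slice question: as far as I understand fastai v1, passing `max_lr=slice(start, stop)` gives each layer group its own learning rate, spaced geometrically from `start` (the earliest, pretrained layers) to `stop` (the head), while `slice(stop)` gives `stop/10` to every group except the last. The function below is a plain-Python re-creation of that rule for illustration, not fastai’s code; the name `lrs_for_layer_groups` and the three layer groups in the example are assumptions.

```python
def lrs_for_layer_groups(lr, n_groups):
    """Expand a learning-rate spec into one LR per layer group."""
    if isinstance(lr, slice):
        if lr.start:
            if n_groups == 1:
                return [lr.stop]  # nothing to spread across
            # Geometric spacing: each group's LR is `step` times the previous one.
            step = (lr.stop / lr.start) ** (1 / (n_groups - 1))
            return [lr.start * step ** i for i in range(n_groups)]
        # slice(stop): stop/10 for the body groups, stop for the head.
        return [lr.stop / 10] * (n_groups - 1) + [lr.stop]
    # A plain number: the same LR for every group.
    return [lr] * n_groups


for spec in (slice(1e-6, 1e-4), slice(1e-3), 1e-3):
    print(spec, [f"{x:.0e}" for x in lrs_for_layer_groups(spec, 3)])
```

So a slice is used "correctly" when its upper end is a rate the head can tolerate (read from the second lr_find() plot) and its lower end is small enough not to wreck the pretrained early layers.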