Hi there,

I don’t know if this is really the right place to ask this or not.

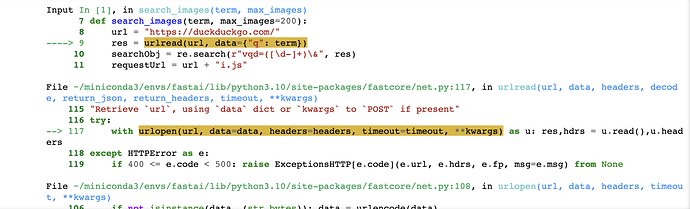

I’m having trouble getting search_images or search_images_ddg to work locally.

I’ve even created a separate notebook to test just the search_images_ddg function, and that doesn’t work either. The issue appears to be with the urlread line.

I’m running a Mac M1, 32GB, Ventura 13.0 with the Conda setup described here

Any help would be really appreciated, as I can’t follow along with the exercises right now. I’ve spent a few hours trying to get this working with no luck.

Thanks a lot!