fit_one_cycle needs a maximum learning rate, and the best way to find it is the lr_finder Jeremy is talking about.
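To make the "maximum learning rate" part concrete, here is a minimal sketch of a one-cycle style schedule in plain Python. It is not fastai's implementation: the function name `one_cycle_lr` and the values of `pct_start`, `div` and `final_div` are assumptions chosen for illustration. The only point is that the rate ramps up to `max_lr` and then anneals back down, so `max_lr` is the one number you have to supply.

```python
import math

def one_cycle_lr(step, total_steps, max_lr, pct_start=0.3, div=25.0, final_div=1e4):
    """Learning rate at `step` for a simple one-cycle schedule (illustrative values)."""
    warmup_steps = max(1, int(total_steps * pct_start))
    start_lr = max_lr / div        # assumed starting rate, well below the peak
    end_lr = max_lr / final_div    # assumed final rate, far below the peak
    if step < warmup_steps:
        # Cosine ramp from start_lr up to max_lr.
        t = step / warmup_steps
        return start_lr + (max_lr - start_lr) * (1 - math.cos(math.pi * t)) / 2
    # Cosine decay from max_lr down to end_lr.
    t = (step - warmup_steps) / max(1, total_steps - warmup_steps - 1)
    return end_lr + (max_lr - end_lr) * (1 + math.cos(math.pi * t)) / 2

# 100 training steps with a peak rate of 1e-2 (e.g. a value read off the LR finder plot).
schedule = [one_cycle_lr(s, 100, max_lr=1e-2) for s in range(100)]
peak_step = schedule.index(max(schedule))
print(f"start={schedule[0]:.1e} peak={max(schedule):.1e} at step {peak_step} end={schedule[-1]:.1e}")
```

With these assumed settings the rate starts at max_lr / 25, peaks 30% of the way through, and ends far below where it started.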

I remember from version 2 (or version 1?) of the course that Jeremy said something like: updating batchnorm while freezing the other layers causes the model to fall apart. So it turns out to be good practice now?

I am interested!

Does it matter whether you run lr_find on a frozen or an unfrozen model?

Is lr_find specific to the optimizer?

What would the improvement have been with just one more fit_one_cycle with the same learning rate?

Yes, but it can be applied to a lot of optimizers, even the adaptive ones.

Was that error rate on the training set?

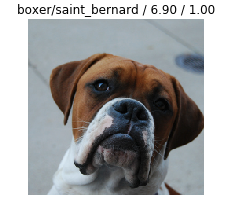

I still don’t understand why there’s a 1.00 on the top of that picture. It’s supposed to be the probability of the actual class, so it makes no sense to be at 1.00…

Is the workflow described by @sgugger in the previous one cycle policy still available in the new version? (I’m thinking of the manual definition of each part of the cycle.)

The unfreeze function lets you train the layers of your pretrained model that were initially frozen, in the sense that we weren’t changing those layers at all. Unfreezing lets you fine-tune your model more.
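A toy sketch of what "frozen" means here, in plain Python rather than fastai or PyTorch. `LayerGroup`, `sgd_step` and `unfreeze` are hypothetical names made up for this illustration, and the weights and gradients are invented numbers. In PyTorch terms the `trainable` flag plays roughly the role of `requires_grad`.

```python
from dataclasses import dataclass

@dataclass
class LayerGroup:
    name: str
    weights: list
    trainable: bool

def sgd_step(groups, grads, lr):
    """Apply one plain SGD update, skipping any group that is frozen."""
    for g in groups:
        if not g.trainable:
            continue  # frozen: weights are left exactly as they were
        g.weights = [w - lr * dw for w, dw in zip(g.weights, grads[g.name])]

def unfreeze(groups):
    """Mark every group as trainable so later updates reach all layers."""
    for g in groups:
        g.trainable = True

# Pretrained body starts frozen; the new head is trainable from the start.
body = LayerGroup("body", [1.0, 2.0], trainable=False)
head = LayerGroup("head", [0.5], trainable=True)
model = [body, head]
grads = {"body": [0.1, 0.1], "head": [0.2]}  # made-up gradients

sgd_step(model, grads, lr=0.1)
body_after_frozen_step = list(body.weights)  # unchanged by the first step

unfreeze(model)
sgd_step(model, grads, lr=0.1)               # now the body moves too
print(body_after_frozen_step, body.weights, head.weights)
```

The first step only moves the head; after `unfreeze` the same step also updates the body.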

Q: Does learning rate affect “accuracy” or just the time of training?

Will the error rate improve with more epochs? If it degrades, which model is saved when we use the learn.save() function?

Does the learning rate change during the training process to draw this graph, or does it train with multiple learning rates in parallel?
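As far as I understand the LR range test, it is a single run in which the learning rate is increased a little after every mini-batch while the loss is recorded, not several runs in parallel. Below is a toy sketch of that idea on a one-parameter loss. The function `lr_range_test`, the loss f(w) = (w - 3)^2, the rate range and the "stop when the loss is 4x the best seen" rule are all assumptions for illustration, not what lr_find does internally.

```python
def lr_range_test(start_lr=1e-5, end_lr=10.0, num_steps=100):
    """Toy LR range test on f(w) = (w - 3)^2 with an exponentially growing rate."""
    w = 0.0
    factor = (end_lr / start_lr) ** (1 / (num_steps - 1))  # per-step multiplier
    lr = start_lr
    best = float("inf")
    history = []  # (learning rate used for the next step, loss before that step)
    for _ in range(num_steps):
        loss = (w - 3.0) ** 2
        history.append((lr, loss))
        best = min(best, loss)
        if loss > 4 * best:
            break  # the loss has started to blow up, so stop the sweep
        grad = 2 * (w - 3.0)
        w -= lr * grad   # one gradient step at the current rate
        lr *= factor     # then raise the rate for the next step
    return history

history = lr_range_test()
best_lr, best_loss = min(history, key=lambda pair: pair[1])
print(f"{len(history)} steps recorded, lowest loss at lr={best_lr:.3g}")
```

Plotting the recorded pairs gives the loss-versus-learning-rate curve. The usual advice is to pick a rate somewhat below the point where the loss bottoms out rather than the minimum itself.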

The speed at which your algorithm “learns”

bs=34 will work for 8GB

Welcome to deep learning ;). Your model is always going to be very certain of its answer. So yes it’ll say 1.00 probability for one class. It’s not a real probability, remember that.
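A quick way to see why a displayed 1.00 is so common: softmax turns even a moderate gap between raw outputs into a near-certain score. A minimal sketch in plain Python follows; the three logits are made-up numbers, not taken from the model in the picture.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of raw scores."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

logits = [10.0, 1.0, 0.0]  # hypothetical raw outputs for three classes
probs = softmax(logits)
print([f"{p:.2f}" for p in probs])  # ['1.00', '0.00', '0.00']
```

The top score is slightly below 1 but prints as 1.00 once rounded to two decimals.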

great. let’s try to start a group.

Sure, but it’s not the probability of the answer but of the actual class, isn’t it?