I’m trying to fine-tune a resnet50 model for a classification problem. The code is pretty simple (copy-pasted from tutorials); the only addition is that I start from greyscale images (see the code below). Everything seems to work as expected (I can run dls.show_batch(), learn.fit_one_cycle(), learn.predict(), …), but when I run learn.summary() I get IndexError: list index out of range.

I suspected the greyscale transformation, but the error persists even if I drop the greyscale conversion or specify cnn_learner(...., n_in=1, ...).

Do you have any advice?

Thanks in advance

code:

import fastai
from fastai.vision.all import *
from albumentations.augmentations.transforms import ToGray

# Using versions:
# torch: 1.8.0
# fastai: 2.4

# hyperparams
# augmentation
resize1 = 512
resize2 = 224
max_lighting, p_lighting = 0.1, 0.5
min_zoom, max_zoom = 0.9, 1.1
max_warp, max_rotate = 0, 15.0

# dataloader
bs = 32

class AlbumentationsToGray(Transform):
    def __init__(self, aug): self.aug = aug

    def encodes(self, img: PILImage):
        aug_img = self.aug(image=np.array(img))['image']
        # ToGray returns 3 channels with same grey image repeated,
        # this causes conflicts with model training:
        # [b x (3 chan x image size) VS (b x 3 classes) x 1]
        aug_img = PILImage.create(aug_img[:, :, 0], mode='L')
        return aug_img

# setup augmentation pipeline
RGB2Grey = AlbumentationsToGray(ToGray(p=1))

tfms = [
    # IntToFloatTensor(div_mask=255), # need masks in [0, 1] format
    *aug_transforms(
        size=resize2,
        max_lighting=max_lighting, p_lighting=p_lighting,
        min_zoom=min_zoom, max_zoom=max_zoom,
        max_warp=max_warp, max_rotate=max_rotate)
]

# initialize dataloader
dls = ImageDataLoaders.from_df(selfsuper_df, DATA_PATH, valid_col='is_valid', label_col='label',
                               item_tfms=[Resize(resize1)], batch_tfms=tfms,
                               bs=bs)

# hyperparams
freezed_epochs = 6
loss = CrossEntropyLossFlat()
metrics = [error_rate, accuracy]
early_stopping_patience = 10

learn = cnn_learner(dls, resnet50,
                    loss_func=loss,
                    metrics=metrics,
                    cbs=EarlyStoppingCallback(
                        monitor='accuracy', patience=early_stopping_patience),
                    # n_in=1,
                    )

learn.summary()

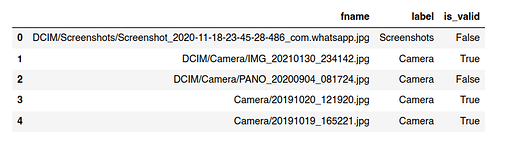

where selfsuper_df is a dataframe like this:

stack-trace:

---------------------------------------------------------------------------
IndexError Traceback (most recent call last)
<ipython-input-34-bc39e9e85f86> in <module>
----> 1 learn.summary()

~/anaconda3/envs/fastai/lib/python3.9/site-packages/fastai/callback/hook.py in summary(self)
    203 "Print a summary of the model, optimizer and loss function."
    204 xb = self.dls.train.one_batch()[:self.dls.train.n_inp]
--> 205 res = module_summary(self, *xb)
    206 res += f"Optimizer used: {self.opt_func}\nLoss function: {self.loss_func}\n\n"
    207 if self.opt is not None:

~/anaconda3/envs/fastai/lib/python3.9/site-packages/fastai/callback/hook.py in module_summary(learn, *xb)
    171 # thus are not counted inside the summary
    172 #TODO: find a way to have them counted in param number somehow
--> 173 infos = layer_info(learn, *xb)
    174 n,bs = 76,find_bs(xb)
    175 inp_sz = _print_shapes(apply(lambda x:x.shape, xb), bs)

~/anaconda3/envs/fastai/lib/python3.9/site-packages/fastai/callback/hook.py in layer_info(learn, *xb)
    157 train_only_cbs = [cb for cb in learn.cbs if hasattr(cb, '_only_train_loop')]
    158 with learn.removed_cbs(train_only_cbs), learn.no_logging(), learn as l:
--> 159 r = l.get_preds(dl=[batch], inner=True, reorder=False)
    160 return h.stored
    161

~/anaconda3/envs/fastai/lib/python3.9/site-packages/fastai/learner.py in __exit__(self, exc_type, exc_value, tb)
    223 def _end_cleanup(self): self.dl,self.xb,self.yb,self.pred,self.loss = None,(None,),(None,),None,None
    224 def __enter__(self): self(_before_epoch); return self
--> 225 def __exit__(self, exc_type, exc_value, tb): self(_after_epoch)
    226
    227 def validation_context(self, cbs=None, inner=False):

~/anaconda3/envs/fastai/lib/python3.9/site-packages/fastai/learner.py in __call__(self, event_name)
    139
    140 def ordered_cbs(self, event): return [cb for cb in self.cbs.sorted('order') if hasattr(cb, event)]
--> 141 def __call__(self, event_name): L(event_name).map(self._call_one)
    142
    143 def _call_one(self, event_name):

~/anaconda3/envs/fastai/lib/python3.9/site-packages/fastcore/foundation.py in map(self, f, gen, *args, **kwargs)
    152 def range(cls, a, b=None, step=None): return cls(range_of(a, b=b, step=step))
    153
--> 154 def map(self, f, *args, gen=False, **kwargs): return self._new(map_ex(self, f, *args, gen=gen, **kwargs))
    155 def argwhere(self, f, negate=False, **kwargs): return self._new(argwhere(self, f, negate, **kwargs))
    156 def filter(self, f=noop, negate=False, gen=False, **kwargs):

~/anaconda3/envs/fastai/lib/python3.9/site-packages/fastcore/basics.py in map_ex(iterable, f, gen, *args, **kwargs)
    664 res = map(g, iterable)
    665 if gen: return res
--> 666 return list(res)
    667
    668 # Cell

~/anaconda3/envs/fastai/lib/python3.9/site-packages/fastcore/basics.py in __call__(self, *args, **kwargs)
    649 if isinstance(v,_Arg): kwargs[k] = args.pop(v.i)
    650 fargs = [args[x.i] if isinstance(x, _Arg) else x for x in self.pargs] + args[self.maxi+1:]
--> 651 return self.func(*fargs, **kwargs)
    652
    653 # Cell

~/anaconda3/envs/fastai/lib/python3.9/site-packages/fastai/learner.py in _call_one(self, event_name)
    143 def _call_one(self, event_name):
    144 if not hasattr(event, event_name): raise Exception(f'missing {event_name}')
--> 145 for cb in self.cbs.sorted('order'): cb(event_name)
    146
    147 def _bn_bias_state(self, with_bias): return norm_bias_params(self.model, with_bias).map(self.opt.state)

~/anaconda3/envs/fastai/lib/python3.9/site-packages/fastai/callback/core.py in __call__(self, event_name)
     43 (self.run_valid and not getattr(self, 'training', False)))
     44 res = None
---> 45 if self.run and _run: res = getattr(self, event_name, noop)()
     46 if event_name=='after_fit': self.run=True #Reset self.run to True at each end of fit
     47 return res

~/anaconda3/envs/fastai/lib/python3.9/site-packages/fastai/callback/tracker.py in after_epoch(self)
     52 def after_epoch(self):
     53 "Compare the value monitored to its best score and maybe stop training."
---> 54 super().after_epoch()
     55 if self.new_best: self.wait = 0
     56 else:

~/anaconda3/envs/fastai/lib/python3.9/site-packages/fastai/callback/tracker.py in after_epoch(self)
     35 def after_epoch(self):
     36 "Compare the last value to the best up to now"
---> 37 val = self.recorder.values[-1][self.idx]
     38 if self.comp(val - self.min_delta, self.best): self.best,self.new_best = val,True
     39 else: self.new_best = False

~/anaconda3/envs/fastai/lib/python3.9/site-packages/fastcore/foundation.py in __getitem__(self, idx)
    109 def _xtra(self): return None
    110 def _new(self, items, *args, **kwargs): return type(self)(items, *args, use_list=None, **kwargs)
--> 111 def __getitem__(self, idx): return self._get(idx) if is_indexer(idx) else L(self._get(idx), use_list=None)
    112 def copy(self): return self._new(self.items.copy())
    113

~/anaconda3/envs/fastai/lib/python3.9/site-packages/fastcore/foundation.py in _get(self, i)
    113
    114 def _get(self, i):
--> 115 if is_indexer(i) or isinstance(i,slice): return getattr(self.items,'iloc',self.items)[i]
    116 i = mask2idxs(i)
    117 return (self.items.iloc[list(i)] if hasattr(self.items,'iloc')

IndexError: list index out of range
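
Reading the trace from the bottom up, the failing line is `val = self.recorder.values[-1][self.idx]` in tracker.py, reached through EarlyStoppingCallback.after_epoch when summary() leaves the `with learn` block. That suggests the error may come from the tracker callback rather than from the greyscale handling: if no epoch has been recorded yet, `recorder.values` would be an empty list and `[-1]` would fail. The sketch below only imitates that indexing pattern in plain Python. `ToyRecorder`, `ToyTracker` and `run_after_epoch` are hypothetical stand-ins written for illustration, not fastai classes, and the empty-recorder explanation is an assumption based on the trace.

```python
class ToyRecorder:
    """Hypothetical stand-in for a recorder: one row of values per finished epoch."""
    def __init__(self):
        self.values = []  # stays empty until an epoch has been recorded


class ToyTracker:
    """Hypothetical stand-in for a tracker-style callback (e.g. early stopping)."""
    def __init__(self, recorder, idx=0):
        self.recorder = recorder
        self.idx = idx
        self.best = float("-inf")

    def after_epoch(self):
        # Same indexing pattern as the line flagged in the traceback.
        val = self.recorder.values[-1][self.idx]
        self.best = max(self.best, val)
        return self.best


def run_after_epoch(tracker):
    """Fire after_epoch and report either the tracked value or the error."""
    try:
        return ("ok", tracker.after_epoch())
    except IndexError as exc:
        return ("IndexError", str(exc))


recorder = ToyRecorder()
tracker = ToyTracker(recorder)

# No epoch recorded yet: the assumed situation when summary() is called.
before_training = run_after_epoch(tracker)

# Once one epoch's values exist, the same call succeeds.
recorder.values.append([0.75])
after_training = run_after_epoch(tracker)

print(before_training)
print(after_training)
```

If this diagnosis holds, one thing to try is leaving `cbs=EarlyStoppingCallback(...)` out of cnn_learner(...) and passing it to the fit call instead, for example `learn.fit_one_cycle(freezed_epochs, cbs=EarlyStoppingCallback(monitor='accuracy', patience=early_stopping_patience))`, so that the callback is only attached while training runs and learn.summary() is called without it.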