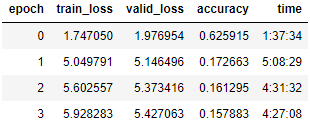

I have been training my language model with AWD_LSTM. Before I unfroze the model, it took 22 minutes to train for 1 epoch and reached 70% accuracy. After unfreezing and training the whole model, the accuracy dropped drastically and the training time increased as well. The most confusing part, however, is that the training loss is getting worse. I assume I am doing something badly wrong to get this result.