When submitting to a Kaggle Code Competition (e.g., the one from Lesson 4, US Patents), the notebook that Kaggle runs during submission includes the full training process. Is that standard practice, or do folks zip their trained model, download it, and upload it to a new notebook dedicated to inference so the submission runs faster?

It’s not necessary to submit a notebook or model to Kaggle. You need to submit the predictions your model made on test data in the form of a CSV file. See the sample_submission.csv file in the data section of the competition:

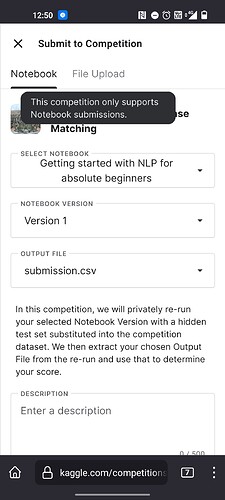
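For anyone unsure what producing that CSV looks like, here is a minimal sketch using only the standard library. The column names `id` and `score`, the output filename, and the helper name `write_submission` are assumptions for illustration; check the competition's sample_submission.csv for the real header.

```python
import csv
from pathlib import Path


def write_submission(ids, preds, out_path="submission.csv",
                     id_col="id", target_col="score"):
    """Write one row per test example, with a header row first.

    `id_col` and `target_col` are placeholders: copy the actual column
    names from the competition's sample_submission.csv.
    """
    ids, preds = list(ids), list(preds)
    if len(ids) != len(preds):
        raise ValueError("ids and preds must have the same length")

    out_path = Path(out_path)
    # newline="" stops the csv module from emitting blank lines on Windows.
    with out_path.open("w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow([id_col, target_col])
        writer.writerows(zip(ids, preds))
    return out_path
```

You would call it as `write_submission(test_ids, predictions)` at the end of the notebook, where `test_ids` and `predictions` are whatever your own code produced.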

I think in this case a notebook must be submitted based on my understanding of the requirements overview:

The end of the sample notebook for lesson 4 also mentions this:

When I made a submission, Kaggle spun up a dedicated instance to run the notebook. It took ~5 minutes to complete and return a score. I assume that’s because it goes through the full training process in the notebook, which seems wasteful to do on every submission.

I’m curious if there’s a better way to skip training in the submission context, or do folks not bother and just let it run? It seems like it would be a big issue with larger models.

It seems like you are right; I didn’t know that this competition requires a notebook too. As it turns out, it does re-run the whole notebook and then uses the submission file you select.

Some competitions keep their test files hidden to prevent probing. In those you submit code (with or without training, depending on the rules), and “the submission file you select” is not a prediction file made in advance but the name of the file that your notebook will generate when it is re-run. If the rules don’t forbid it, you can attach your trained model to the notebook as a data source, so you avoid training on the fly.
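A rough sketch of that "load if attached, otherwise train" pattern is below. It uses `pickle` only to stay framework-neutral; in practice you would swap in your library's own save and load calls. The helper names and the dataset path in the usage note are hypothetical.

```python
import pickle
from pathlib import Path


def save_model(model, model_path):
    """Serialize a trained model so it can be uploaded as a Kaggle dataset."""
    model_path = Path(model_path)
    model_path.parent.mkdir(parents=True, exist_ok=True)
    with model_path.open("wb") as f:
        pickle.dump(model, f)
    return model_path


def load_or_train(model_path, train_fn):
    """Return (model, source): load saved weights if present, else train.

    `train_fn` is any zero-argument callable that returns a trained model.
    `source` is "loaded" or "trained", which is handy to print in the
    notebook log so you can see which branch the submission run took.
    """
    model_path = Path(model_path)
    if model_path.exists():
        with model_path.open("rb") as f:
            return pickle.load(f), "loaded"
    return train_fn(), "trained"
```

In the submission notebook this might be called as `model, source = load_or_train("/kaggle/input/my-trained-model/model.pkl", train_model)`, where `my-trained-model` is a made-up dataset name and `train_model` is your own training function. Attached datasets are mounted read-only under `/kaggle/input/`, so the saving step happens in a separate training notebook.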