Hello folks,

This might be a newbie question, but I'd like some clarity on the progressive resizing trick in FastAI. Below is how I've set up my code:

Data Loader Setup:

    import os
    from fastai.vision.all import *

    def get_x(r): return os.path.join(r["img_path"], r["Image"])
    def get_y(r): return r["Id"]

    def get_dls(bs, size, df, get_x, get_y):
        dblock = DataBlock(blocks=(ImageBlock(), CategoryBlock()),
                           get_x=get_x,
                           get_y=get_y,
                           splitter=ColSplitter(),
                           item_tfms=Resize(460),
                           batch_tfms=[*aug_transforms(size=size, do_flip=False,
                                                       pad_mode='zeros', min_scale=0.75),
                                       Normalize.from_stats(*imagenet_stats)])
        return dblock.dataloaders(df, bs=bs)

Learner Setup

    from fastai.callback.wandb import WandbCallback

    sz = 128
    bs = 64
    dls = get_dls(bs, sz, df, get_x, get_y)

    # map5 is a custom MAP@5 metric defined elsewhere
    metrics = [accuracy, top_k_accuracy, map5]
    cbs = [WandbCallback(),
           SaveModelCallback(monitor="valid_loss", comp=np.less, at_end=True),
           ReduceLROnPlateau(factor=5)]
    learner = cnn_learner(dls, resnet34, metrics=metrics, cbs=cbs)

Stage 1 Training (with Transfer learning)

    freeze_eps = 4
    unfreeze_eps = 16
    base_lr = 1e-2

    learner.fit_one_cycle(freeze_eps, slice(base_lr))
    learner.unfreeze()
    lrs = [base_lr / 1000, base_lr / 100, base_lr / 10]
    learner.fit_one_cycle(unfreeze_eps, lrs)
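
A side note on the learning rates in the unfrozen phase, in case it matters: the list I pass is log-spaced, and I believe it matches what `slice(base_lr / 1000, base_lr / 10)` would give if the model has three parameter groups (that is my assumption for `cnn_learner` with `resnet34`). Here is a plain-Python sketch of that arithmetic. `log_spaced_lrs` is a made-up helper for illustration, not a fastai function:

```python
def log_spaced_lrs(start, stop, n):
    """Return n learning rates stepping geometrically from start to stop."""
    if n == 1:
        return [stop]
    step = (stop / start) ** (1 / (n - 1))
    return [start * step**i for i in range(n)]

base_lr = 1e-2
# The list passed to fit_one_cycle in Stage 1
manual = [base_lr / 1000, base_lr / 100, base_lr / 10]
# n=3 parameter groups is an assumption about the model
spaced = log_spaced_lrs(base_lr / 1000, base_lr / 10, n=3)

# Print both side by side to compare them
for m, s in zip(manual, spaced):
    print(f"{m:.1e}  {s:.1e}")
```

So, if I have this right, the earliest layers train at roughly 1e-5 and the head at roughly 1e-3 during the unfrozen part of Stage 1.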

Stage 2 Training with larger resolution

    bs = 64
    sz = 384
    freeze_eps = 1
    unfreeze_eps = 20
    base_lr = 2e-4  # Obtained using lr_find(), but I removed that after the first run to save time

    learner.dls = get_dls(bs, sz, df, get_x, get_y)
    learner.fine_tune(unfreeze_eps, base_lr, freeze_eps)
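
In case it helps with diagnosing this: as far as I can tell from the fastai source, `fine_tune` first freezes the model again, trains for the frozen epochs at `base_lr`, then halves `base_lr`, unfreezes, and trains with `slice(base_lr / lr_mult, base_lr)`. Below is a minimal plain-Python sketch of the learning rates I think my Stage 2 call ends up using. `fine_tune_plan` is a hypothetical helper I wrote for illustration, and `lr_mult=100` is what I believe the default to be:

```python
def fine_tune_plan(epochs, base_lr, freeze_epochs, lr_mult=100):
    """Describe the two phases fine_tune appears to run, as plain dicts."""
    # Phase 1: body frozen, only the head trains at base_lr
    frozen = {"frozen": True, "epochs": freeze_epochs, "lr_max": base_lr}
    # Phase 2: everything unfrozen, base_lr halved and spread across layer groups
    half = base_lr / 2
    unfrozen = {"frozen": False, "epochs": epochs,
                "lr_min": half / lr_mult, "lr_max": half}
    return [frozen, unfrozen]

# Same arguments as my Stage 2 call: fine_tune(unfreeze_eps, base_lr, freeze_eps)
plan = fine_tune_plan(epochs=20, base_lr=2e-4, freeze_epochs=1)
for phase in plan:
    print(phase)
```

If this reading is right, Stage 2 starts from a re-frozen body for one epoch and then trains at lower rates than the unfrozen part of Stage 1, which may or may not be related to the spike.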

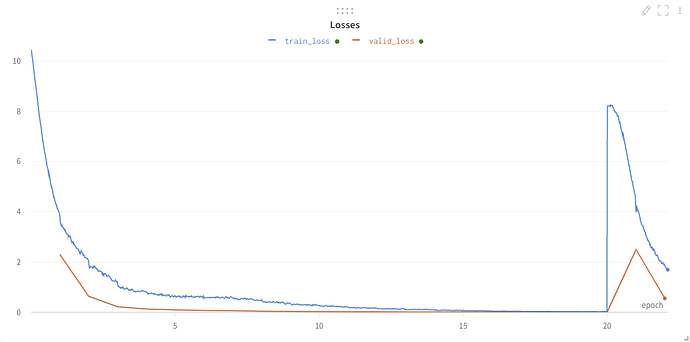

Is this the correct way to set it up? I followed the guidelines in FastBook to put this together. What I’m observing is a huge spike in the loss after Stage 1 ends and the results are actually worse than when not using progressive resizing. See the screen shot below to understand what I’m talking about:

In FastBook, I noticed that when the new size was introduced, the learner's loss continued to decrease from where it left off in the first stage. I'd really appreciate some insight into what might be off here. Note: I have also tried calling learner.load("model") after the first stage ends, and I see the same behavior.