Hi,

I am following this example: fastai/train_imdbclassifier.py on the master branch of the fastai/fastai GitHub repository.

I want to apply the ULMFiT approach described in the fastai tutorial "Transfer learning in text", training the language model on multiple GPUs.

However, when I replace the text classifier learner with the language model learner, it does not work. I get an IndexError raised at: File "/home/$username/.local/lib/python3.8/site-packages/fastai/text/data.py", line 104, in create_item: if seq>=self.n: raise IndexError

Below is the code that I’m trying:

    from fastai.basics import *
    from fastai.callback.all import *
    from fastai.distributed import *
    from fastprogress import fastprogress
    from fastai.callback.mixup import *
    from fastcore.script import *
    from fastai.text.all import *

    torch.backends.cudnn.benchmark = True
    fastprogress.MAX_COLS = 80

    def pr(s):
        if rank_distrib()==0: print(s)

    @call_parse
    def main(
        lr: Param("base Learning rate", float)=1e-2,
        bs: Param("Batch size", int)=64,
        epochs:Param("Number of epochs", int)=1,
        fp16: Param("Use mixed precision training", store_true)=False,
        dump: Param("Print model; don't train", int)=0,
        runs: Param("Number of times to repeat training", int)=1,
    ):
        "Training of IMDB classifier."
        path = rank0_first(untar_data, URLs.IMDB)
        dls = TextDataLoaders.from_folder(path,is_lm=True, valid_pct=0.1)

        for run in range(runs):
            pr(f'Rank[{rank_distrib()}] Run: {run}; epochs: {epochs}; lr: {lr}; bs: {bs}')
            learn = rank0_first(language_model_learner, dls, AWD_LSTM, drop_mult=0.5, metrics=accuracy)
            if dump: pr(learn.model); exit()
            if fp16: learn = learn.to_fp16()

            # Workaround: In PyTorch 1.4, need to set DistributedDataParallel() with find_unused_parameters=True,
            # to avoid a crash that only happens in distributed mode of text_classifier_learner.fine_tune()
            if num_distrib() > 1 and torch.__version__.startswith("1.4"): DistributedTrainer.fup = True

            with learn.distrib_ctx(): # distributed training requires "-m fastai.launch"
                learn.fit_one_cycle(epochs, lr)

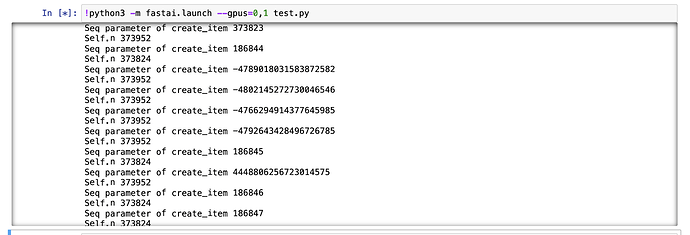

I printed out seq and self.n, as shown in the screenshot. I'm not sure why seq suddenly jumps to very large positive and negative values (-4789018031583872582 and 4448806256723014575).
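To make the quoted check concrete, here is a minimal pure-Python sketch of a guard of the form `if seq>=self.n: raise IndexError`, applied to the two values above. The helper `passes_guard` and the item count `n = 1000` are hypothetical and chosen only for illustration; this is not fastai's code.

```python
def passes_guard(seq, n):
    """Return True if `seq` would get past a check of the form
    `if seq >= n: raise IndexError` (hypothetical helper, not fastai's code)."""
    return not (seq >= n)

# Hypothetical item count; the real self.n is only visible in the screenshot.
n = 1000

# The two values of seq reported above.
observed = [-4789018031583872582, 4448806256723014575]

# Map each observed value to whether it would pass the guard.
results = {seq: passes_guard(seq, n) for seq in observed}

for seq, ok in results.items():
    print(seq, "passes the guard" if ok else "raises IndexError")
```

Under these assumptions, only the large positive value trips this particular check; the negative value is not caught by a `>=` comparison alone.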

If I instead reduce seq_len to 36, I get the following error:

RuntimeError: stack expects each tensor to be equal size, but got [36] at entry 0 and [21] at entry 32
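For reference, here is a small pure-Python sketch of what this message means: a batch is being assembled from sequences of unequal length. It assumes, purely for illustration, a token stream cut into pieces of 36 with a 21-token remainder. The helpers `chunk` and `stack` are hypothetical stand-ins, not the fastai or PyTorch implementations, and this is not a diagnosis of the script above.

```python
def chunk(tokens, seq_len):
    """Split a flat token list into consecutive pieces of at most seq_len items."""
    return [tokens[i:i + seq_len] for i in range(0, len(tokens), seq_len)]

def stack(rows):
    """Mimic the size check done when stacking: every row must match row 0's length."""
    expected = len(rows[0])
    for i, row in enumerate(rows):
        if len(row) != expected:
            raise RuntimeError(
                "stack expects each tensor to be equal size, "
                f"but got [{expected}] at entry 0 and [{len(row)}] at entry {i}"
            )
    return [list(row) for row in rows]

# Illustrative stream: 32 full pieces of 36 tokens plus a 21-token remainder.
tokens = list(range(36 * 32 + 21))
pieces = chunk(tokens, seq_len=36)

message = None
try:
    stack(pieces)  # the shorter final piece ends up in the same batch
except RuntimeError as e:
    message = str(e)

print(message)
```

In this sketch the failure disappears if the shorter final piece is kept out of the batch of full-length pieces, which suggests checking how items of different lengths end up grouped together.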

Please let me know if anyone has resolved this. I have seen some posts on these topics, but they seem to be unresolved.

Thanks!