Hi everyone,

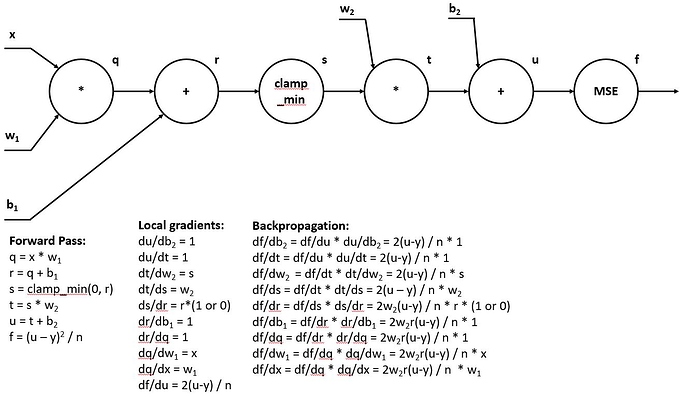

I had trouble understanding the math of backpropagation, so I decided to read up on the subject. I stumbled upon the CS231n Winter 2016: Lecture 4 video. In that lecture, they draw computational graphs and use them to calculate the forward and backward pass by hand. To see if I understood the material, I did the same for the model used in Lesson 8 (Linear -> ReLU -> Linear -> MSE). However, I am not sure I did it right. So my question is: can someone check this for me? The ReLU part in particular was somewhat confusing.
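To make the question more concrete, here is a minimal pure-Python sketch of how I understand the forward and backward pass for a single sample. It is not the Lesson 8 code: the names (`forward`, `backward`, `W1`, `b1`, `W2`, `b2`) and the toy numbers are made up for illustration, and I assume a scalar output with the loss `(out - y) ** 2`. For ReLU, I assume the gradient passes through unchanged where the input was positive and is zero elsewhere (including exactly at zero).

```python
def forward(x, W1, b1, W2, b2, y):
    """Forward pass: Linear -> ReLU -> Linear -> squared error (one sample)."""
    # First linear layer: z1[j] = sum_i W1[j][i] * x[i] + b1[j]
    z1 = [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(W1, b1)]
    # ReLU: keep positive values, clamp the rest to zero
    a1 = [max(0.0, z) for z in z1]
    # Second linear layer with a single output unit
    out = sum(w * a for w, a in zip(W2, a1)) + b2
    # Squared error for one sample
    loss = (out - y) ** 2
    return z1, a1, out, loss


def backward(x, W2, z1, a1, out, y):
    """Backward pass: apply the chain rule node by node, from loss to inputs."""
    # d loss / d out
    d_out = 2.0 * (out - y)
    # Second linear layer: gradients w.r.t. its weights, bias, and input
    dW2 = [d_out * a for a in a1]
    db2 = d_out
    da1 = [d_out * w for w in W2]
    # ReLU: the upstream gradient flows only where z1 was positive
    dz1 = [g if z > 0 else 0.0 for g, z in zip(da1, z1)]
    # First linear layer: gradients w.r.t. its weights and bias
    dW1 = [[g * xi for xi in x] for g in dz1]
    db1 = list(dz1)
    return dW1, db1, dW2, db2


# Toy values, chosen so that one hidden unit is active and one is not
x = [1.0, -2.0]
W1 = [[0.5, -0.3], [-0.4, 0.2]]
b1 = [0.1, 0.0]
W2 = [0.7, -0.6]
b2 = 0.05
y = 1.0

z1, a1, out, loss = forward(x, W1, b1, W2, b2, y)
dW1, db1, dW2, db2 = backward(x, W2, z1, a1, out, y)

print("loss:", loss)
print("dW1:", dW1)
print("db1:", db1)
print("dW2:", dW2)
print("db2:", db2)
```

With these values the second hidden unit has a negative pre-activation, so its row in `dW1` and its entry in `db1` come out as zero. One way to check the hand-derived gradients is to nudge a single weight by a small amount, rerun `forward`, and compare the change in the loss with the corresponding gradient entry.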

Here is the assignment:

Thanks a lot for your time!

Mees Molenaar