stas

March 12, 2019, 4:54pm

41

Thank you for running the update, @balnazzar .

Yes, this is a known caveat; please see: https://github.com/stas00/ipyexperiments/blob/master/docs/cell_logger.md#peak-memory-monitor-thread-is-not-reliable . Please vote for PyTorch to implement the needed support: https://github.com/pytorch/pytorch/issues/16266

It probably has to do with the Tesla being a faster card than the GTX, so the monitoring thread can’t keep up with it, due to the limitations of Python’s thread implementation.

PyTorch 1.0.1 has now added a single counter, so perhaps I’ll just try to use it for CellLogger instead of the Python monitor thread. But we can’t use it concurrently.
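To illustrate why a polling monitor can under-report the peak, here is a minimal simulation. It is only a sketch: the memory trace is made up, and `observed_peak` is a hypothetical helper, not part of ipyexperiments. A monitor that samples too slowly simply never sees a short spike.

```python
def observed_peak(readings, sample_every):
    """Peak seen by a monitor that only looks at every `sample_every`-th reading."""
    return max(readings[::sample_every])


# Hypothetical memory trace in MB, with one brief spike at index 3.
trace = [100, 110, 120, 900, 130, 120, 110, 100]

true_peak = max(trace)                   # every reading is seen
fast_monitor = observed_peak(trace, 1)   # samples every reading, catches the spike
slow_monitor = observed_peak(trace, 2)   # samples indices 0, 2, 4, 6 and misses it

if __name__ == "__main__":
    print(true_peak, fast_monitor, slow_monitor)
```

The faster the GPU finishes the work, the shorter the spike, and the more likely it falls between two samples.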

balnazzar

March 12, 2019, 5:17pm

42

Thanks! I’ll do what you suggest.

stas

March 12, 2019, 6:26pm

43

OK, I implemented locking and 0.1.16 has been released. Please let me know if the crashing still occurs (or any deadlocks).

It also fixes the negative peak memory reports.
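For readers wondering how locking relates to negative peak reports, here is a rough sketch of the general idea. It is not the actual ipyexperiments code; `PeakTracker` and its methods are hypothetical names. The baseline and the peak are only ever read and written under one lock, and the peak starts at the baseline, so the reported delta cannot go below zero.

```python
import threading


class PeakTracker:
    """Hypothetical lock-protected peak tracker (illustration only)."""

    def __init__(self):
        self._lock = threading.Lock()
        self._baseline = 0
        self._peak = 0

    def reset(self, current):
        # Start a new measurement: the peak can never be below the baseline.
        with self._lock:
            self._baseline = current
            self._peak = current

    def update(self, current):
        # Called by the monitor thread with each new reading.
        with self._lock:
            if current > self._peak:
                self._peak = current

    def peak_delta(self):
        # Read both values under the same lock so they are consistent.
        with self._lock:
            return self._peak - self._baseline
```

Without the lock, a reset on the main thread could interleave with an update on the monitor thread and leave the two values out of sync.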

1 Like

Hi @stas

I have two questions in this regard.

1- In the output of IPyExperimentsPytorch, does CPU RAM refer to the whole system RAM or to the CPU cache?

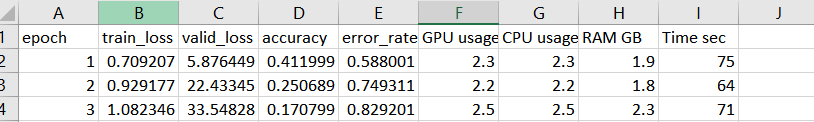

2- How can I get the CPU, RAM, and GPU usage and the time taken for training per epoch in my log file? Ideally, I would like to have a CSV log file like this:

stas

March 13, 2019, 12:50am

45

I will let the code answer in the best way:

def cpu_ram_total(self): return psutil.virtual_memory().total

def cpu_ram_avail(self): return psutil.virtual_memory().available

def cpu_ram_used(self): return process.memory_info().rss

Now you can look them up and see what they all mean.

> 2- How can I get the CPU, RAM, and GPU usage and the time taken for training per epoch in my log file? Ideally, I would like to have a CSV log file like this:

It’s already done by: https://docs.fast.ai/callbacks.mem.html#PeakMemMetric

2 Likes

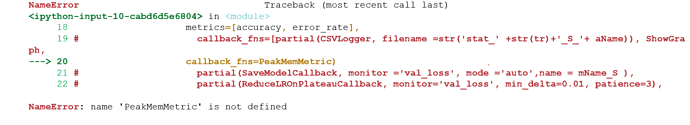

Using the PeakMemMetric callback, I am getting this error:

I have imported the callback as:

msandroid

March 13, 2019, 7:48am

47

Use: from fastai.callbacks.mem import PeakMemMetric

If you take a look into the __init__.py of the callbacks folder, you will see that mem is not imported by default.

stas

March 13, 2019, 4:39pm

48

1 Like

Thank you, @msandroid and @stas.

stas

March 15, 2019, 3:55am

50

A post was merged into an existing topic: Developer chat

stas

March 18, 2019, 4:20am

51

A post was split to a new topic: PeakMemMetric

spock

February 19, 2020, 8:21pm

52

@stas Thanks for this. The docs on GitHub are well written.

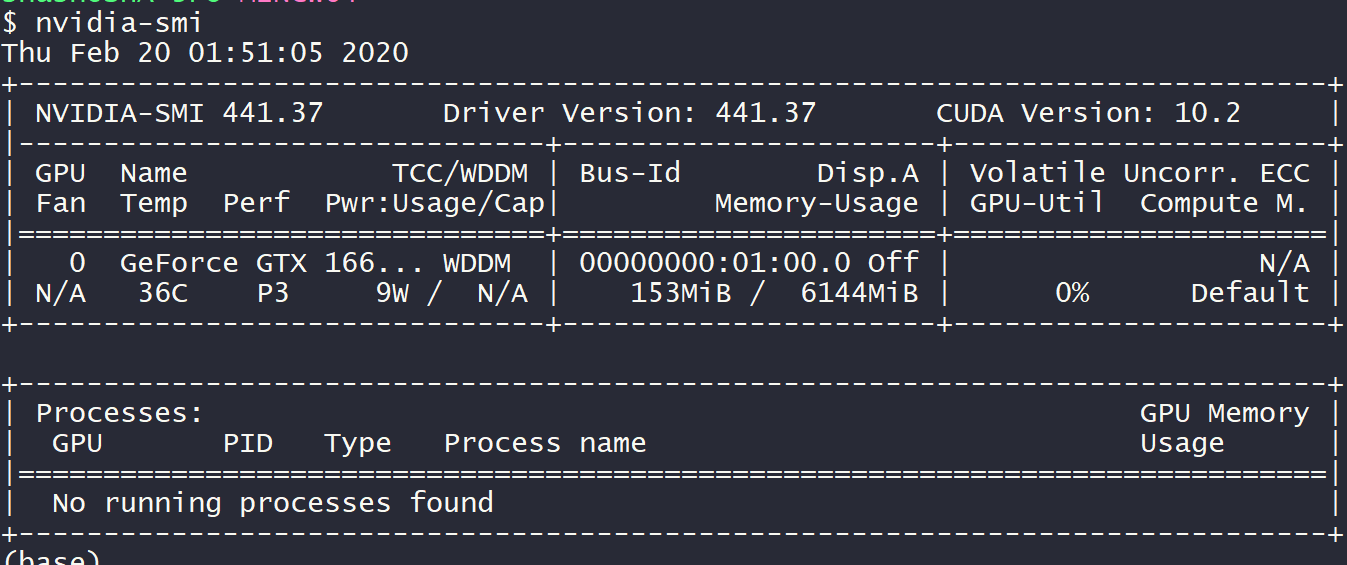

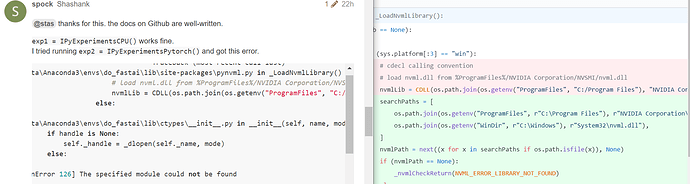

exp1 = IPyExperimentsCPU() works fine, but exp2 = IPyExperimentsPytorch() fails with this error:

OSError Traceback (most recent call last)

C:\ProgramData\Anaconda3\envs\do_fastai\lib\site-packages\pynvml.py in _LoadNvmlLibrary()

640 # load nvml.dll from %ProgramFiles%/NVIDIA Corporation/NVSMI/nvml.dll

--> 641 nvmlLib = CDLL(os.path.join(os.getenv("ProgramFiles", "C:/Program Files"), "NVIDIA Corporation/NVSMI/nvml.dll"))

642 else:

C:\ProgramData\Anaconda3\envs\do_fastai\lib\ctypes\__init__.py in __init__(self, name, mode, handle, use_errno, use_last_error)

363 if handle is None:

--> 364 self._handle = _dlopen(self._name, mode)

365 else:

OSError: [WinError 126] The specified module could not be found

During handling of the above exception, another exception occurred:

NVMLError_LibraryNotFound Traceback (most recent call last)

<ipython-input-2-1253310f286d> in <module>

----> 1 exp2 = IPyExperimentsPytorch()

C:\ProgramData\Anaconda3\envs\do_fastai\lib\site-packages\ipyexperiments\ipyexperiments.py in __init__(self, **kwargs)

382 if self.__class__.__name__ == 'IPyExperimentsPytorch':

383 logger.debug("Starting IPyExperimentsPytorch")

--> 384 self.backend_init()

385 self.start()

386

C:\ProgramData\Anaconda3\envs\do_fastai\lib\site-packages\ipyexperiments\ipyexperiments.py in backend_init(self)

386

387 def backend_init(self):

--> 388 super().backend_init()

389

390 print("\n*** Experiment started with the Pytorch backend")

C:\ProgramData\Anaconda3\envs\do_fastai\lib\site-packages\ipyexperiments\ipyexperiments.py in backend_init(self)

355 from ipyexperiments.utils.pynvml_gate import load_pynvml_env

356

--> 357 self.pynvml = load_pynvml_env()

358

359 #def start(self):

C:\ProgramData\Anaconda3\envs\do_fastai\lib\site-packages\ipyexperiments\utils\pynvml_gate.py in load_pynvml_env()

15 raise Exception(f"{e}\npynvx is required; pip install pynvx")

16

---> 17 pynvml.nvmlInit()

18 return pynvml

C:\ProgramData\Anaconda3\envs\do_fastai\lib\site-packages\pynvml.py in nvmlInit()

606 ## C function wrappers ##

607 def nvmlInit():

--> 608 _LoadNvmlLibrary()

609

610 #

C:\ProgramData\Anaconda3\envs\do_fastai\lib\site-packages\pynvml.py in _LoadNvmlLibrary()

644 nvmlLib = CDLL("libnvidia-ml.so.1")

645 except OSError as ose:

--> 646 _nvmlCheckReturn(NVML_ERROR_LIBRARY_NOT_FOUND)

647 if (nvmlLib == None):

648 _nvmlCheckReturn(NVML_ERROR_LIBRARY_NOT_FOUND)

C:\ProgramData\Anaconda3\envs\do_fastai\lib\site-packages\pynvml.py in _nvmlCheckReturn(ret)

308 def _nvmlCheckReturn(ret):

309 if (ret != NVML_SUCCESS):

--> 310 raise NVMLError(ret)

311 return ret

312

NVMLError_LibraryNotFound: NVML Shared Library Not Found

I am running it locally in a conda environment.

I am trying to figure out a solution myself, but in the meantime if anybody can help, it would be great!

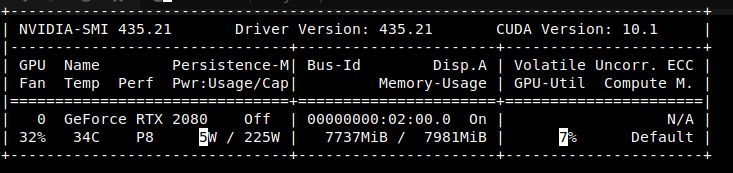

stas

February 19, 2020, 8:53pm

53

ipyexperiments uses nvidia-ml-py3 for tracking memory usage on NVIDIA cards, and that’s what fails to load.

You need to debug nvidia-ml-py3 on its own. Once you get it working IPyExperimentsPytorch will work too.

For help, please refer to https://github.com/nicolargo/nvidia-ml-py3 . My guess is that it can’t find the location of your nvml.dll library. I don’t know Windows well enough to tell you why, but by debugging that Python module directly you will sort it out quickly.

Hint: you just need to get this working:

from pynvml import *

nvmlInit()

and manually find the location of that dll and see why it is not found at run time.
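As a starting point for that manual search, here is a small sketch that checks a couple of candidate locations for nvml.dll. The first path is the one shown in the traceback above; the System32 location is only an assumption about where a driver install may have placed the file, and both helper names are hypothetical.

```python
import os
from pathlib import Path


def candidate_nvml_paths(env=None):
    """Return locations worth checking for nvml.dll (illustrative list only)."""
    env = os.environ if env is None else env
    program_files = env.get("ProgramFiles", "C:/Program Files")
    system_root = env.get("SystemRoot", "C:/Windows")
    return [
        # Location that pynvml tries, as shown in the traceback.
        Path(program_files) / "NVIDIA Corporation" / "NVSMI" / "nvml.dll",
        # Assumption: some driver installs may put the DLL here instead.
        Path(system_root) / "System32" / "nvml.dll",
    ]


def existing_files(paths):
    """Keep only the paths that actually exist on this machine."""
    return [p for p in paths if p.is_file()]


if __name__ == "__main__":
    for path in candidate_nvml_paths():
        status = "found" if path.is_file() else "missing"
        print(f"{status}: {path}")
```

If the DLL turns up somewhere other than the first path, that mismatch is the likely reason nvmlInit() fails.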

spock

February 20, 2020, 7:21am

54

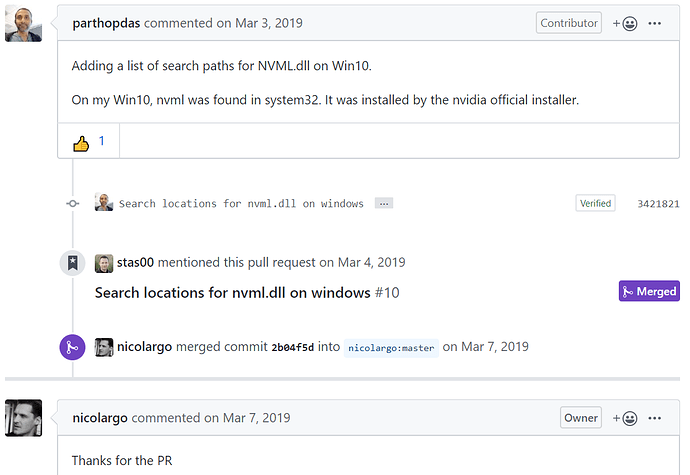

Thanks. Yeah, the nvml.dll location was the problem. I just copied it from C:\Windows\System32 to C:\Program Files\NVIDIA Corporation\NVSMI.

stas

February 20, 2020, 3:25pm

55

Glad to hear you sorted it out, @spock .

> I just copied it from C:\Windows\System32 to C:\Program Files\NVIDIA Corporation\NVSMI.

Alternatively, if you’d like to contribute to the project, submit a PR to https://github.com/nicolargo/nvidia-ml-py3 that adds C:\Windows\System32 to the search path.

spock

February 20, 2020, 6:13pm

56

I just checked, it’s already done (in March)!

nicolargo:master ← parthopdas:master

opened 03:45AM - 03 Mar 19 UTC

And the one who made the commit is someone from the fastai community too; I remember referring to his fastai repo yesterday. Also, you mentioned the PR too.

Update:

So, I was wondering why I had the old code, since I created this conda environment two days ago. It turns out that even though that PR was merged in March (that was the last commit to that repo!), that version hasn’t been pushed to PyPI. Someone opened an issue for it; here’s the story:

opened 01:00AM - 19 Jul 19 UTC

First up, thanks for this extremely useful Lib.

I was wondering if it would be possible to push the latest version with...

stas

February 20, 2020, 6:28pm

57

Yes, https://pypi.org/project/nvidia-ml-py3/ was last released in 2017.

I’d say commenting and voting on that GitHub issue may help precipitate a new release.

Meanwhile, I will update the ipyexperiments README to use:

pip install git+https://github.com/nicolargo/nvidia-ml-py3

tyoc213

June 1, 2020, 7:32pm

59

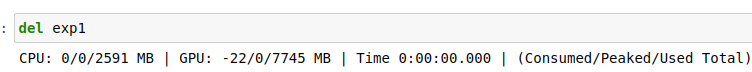

I used

from ipyexperiments import IPyExperimentsPytorch

exp1 = IPyExperimentsPytorch(exp_enable=False, cl_compact=True)

and specifically

but doing del exp1 did not free memory?

I’m using fastai2.

stas

June 1, 2020, 11:37pm

60

Please send a short reproducible example and then I will be able to debug your case. What you have sent so far only makes sense to you, since you know what else is going on (i.e., the code, etc.).