Hi guys,

I want to implement a way to interpret why my text classification model made a prediction. The problem is I don’t really know where to start looking.

Does anyone know a good starter tutorial on interpretation methods for text classification?

The older version of fastai had interpretation functionality:

You could check its source code for inspiration.

Additionally, in the current version of fastai, you can use the Captum callback:

Captum provides an easy way to do model interpretability in PyTorch, so it might suit your needs as well.

This is also in fastinference.
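For anyone new to these tools, the basic idea behind attribution can be sketched without any library. The snippet below is only an illustration: it uses leave-one-token-out ablation, which is a simpler technique than the gradient-based methods usually run through Captum, and `toy_positive_prob` is a made-up stand-in for a real classifier, not part of fastai, Captum, or fastinference.

```python
import math
from typing import Callable, List, Tuple


def toy_positive_prob(tokens: List[str]) -> float:
    """Hypothetical classifier: a keyword count squashed by a sigmoid.

    This stands in for a real model's predicted probability.
    """
    positive = {"great", "good", "love"}
    negative = {"bad", "awful", "hate"}
    score = sum((t in positive) - (t in negative) for t in tokens)
    return 1.0 / (1.0 + math.exp(-score))


def leave_one_out(
    tokens: List[str], predict: Callable[[List[str]], float]
) -> List[Tuple[str, float]]:
    """Score each token by how much the prediction drops when it is removed."""
    base = predict(tokens)
    return [
        (tok, base - predict(tokens[:i] + tokens[i + 1:]))
        for i, tok in enumerate(tokens)
    ]


attributions = leave_one_out("i love this great movie".split(), toy_positive_prob)
for token, delta in attributions:
    print(f"{token:>6}: {delta:+.3f}")
```

Tokens with a positive score pushed the prediction up, tokens with a negative score pushed it down, and tokens near zero had little effect. With a real model you would replace `toy_positive_prob` with a call that returns the probability of the class you care about.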

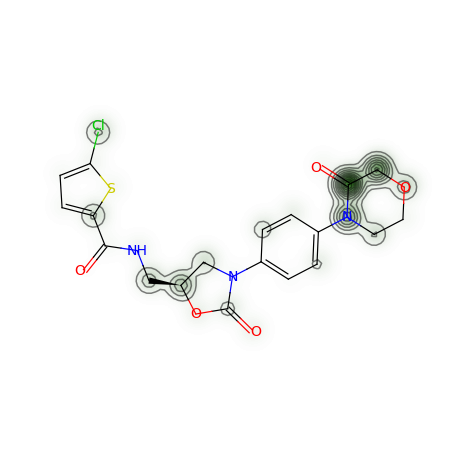

I ended up implementing a Grad-CAM equivalent (I'm calling it LSTMGradCam) to interpret the predictions of my ULMFiT model. The classifier outputs the probability that a molecule is bioactive. Here’s what LSTMGradCam looks like for a molecule I used as a control:

The green contour (the average of the activations) matches the experimental evidence for this kind of molecule, with a chloro-thiophene (the 5-membered ring on the far left) and the morpholinone (the 6-membered ring on the far right) being essential for bioactivity.

Hi @muellerzr - I have been trying to use fastinference with the newest fast.ai for a ULMFiT model I trained. I installed it and tried to follow the tutorial on the fastinference site, but when I try to run the fastinference versions of the get_preds() and show_intrinsic_attention() functions, they aren’t recognized. Any tips?

Thanks in advance.

I'd need a reproducer. Are you importing the library with from fastinference.inference import *?

Thanks for responding so quickly. I didn’t know about "from fastinference.inference import *". I had been using "import fastinference".

Now that I’ve got the library going, I have another challenge. This is what happens when I try intrinsic attention:

learn.intrinsic_attention('this is a test')

TypeError                                 Traceback (most recent call last)
in
----> 1 learn.intrinsic_attention('this is a test')

~\anaconda3\lib\site-packages\fastinference\inference\text.py in intrinsic_attention(x, text, class_id, **kwargs)
    165     "Shows the `intrinsic attention for `text`, optional `class_id`"
    166     if isinstance(x, LMLearner): raise Exception("Language models are not supported")
--> 167     text, attn = _intrinsic_attention(x, text, class_id)
    168     return _show_piece_attn(text.split(), to_np(attn), **kwargs)

~\anaconda3\lib\site-packages\fastinference\inference\text.py in _intrinsic_attention(learn, text, class_id)
    149     dl = learn.dls.test_dl([text])
    150     batch = next(iter(dl))[0]
--> 151     emb = learn.model[0].module.encoder(batch).detach().requires_grad_(True)
    152     lstm = learn.model[0].module(emb, True)
    153     learn.model.eval()

TypeError: requires_grad_() takes 1 positional argument but 2 were given

Any ideas for how to solve this?

You should use the git version of fastai for now; this will be fixed once the next release is out. That is:

pip install git+https://github.com/fastai/fastai

pip install git+https://github.com/fastai/fastcore

Tried that. Same error. Could it be related to the fact that the model was trained and exported with fast.ai 2.2.1?

I have been continuing to work on this and may have found the problem:

There is this line in the intrinsic_attention() method that throws the error:

emb = learn.model[0].module.encoder(batch).detach().requires_grad_(True)

If I change it to:

emb = learn.model[0].module.encoder(batch).detach().requires_grad_()

it works.

Hope this is helpful. I'm using the most up-to-date fast.ai and fastinference on Colab.
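For readers wondering what that line is for, here is a minimal sketch in plain PyTorch of the same detach-then-track pattern. The `encoder` and `head` modules are toy stand-ins I made up, not the ULMFiT modules from the traceback, and the gradient-times-input scoring at the end is just one common way to turn the gradient into per-token scores, not necessarily what fastinference does. In plain PyTorch both `requires_grad_()` and `requires_grad_(True)` are accepted, so this does not reproduce the error above; it only shows why the call is there.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Toy stand-ins (hypothetical): an embedding layer and a linear classifier head.
encoder = nn.Embedding(num_embeddings=10, embedding_dim=4)
head = nn.Linear(4, 2)

batch = torch.tensor([[1, 2, 3]])  # one "sentence" of three token ids

# detach() cuts the graph back to the embedding weights; requires_grad_()
# (in place, defaults to True) turns the result into a leaf tensor that
# will receive a gradient of its own.
emb = encoder(batch).detach().requires_grad_()

logits = head(emb.mean(dim=1))  # shape (1, 2)
logits[0, 1].backward()         # gradient of the class-1 score w.r.t. emb

# One score per token: |gradient * input| summed over the embedding dimension.
token_scores = (emb.grad * emb).abs().sum(dim=-1).squeeze(0).detach()
print(token_scores)
```

The point of detaching first is that the gradient then stops at the embedded tokens, which is what you want when asking how much each token's embedding influenced the output.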