Hi Everyone,

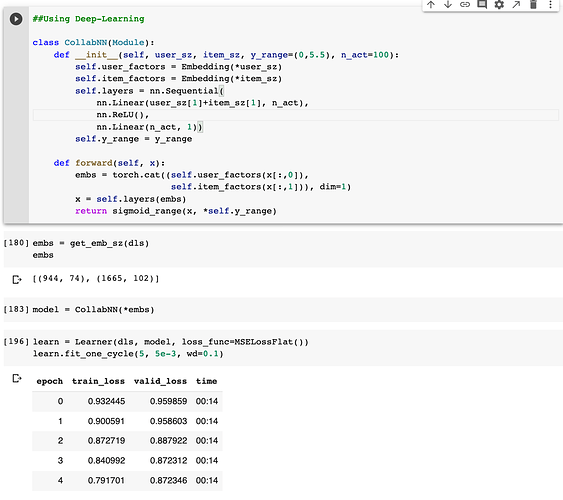

I am trying to understand the initialization and parameter updates for the tabular model using a NN. The attached image is from the Collab_filter chapter of the 2020 fastbook. When we use the NN, I see that we are using standard linear layers from torch. In this model, are the weights of the linear layers (not the embeddings) also initialized? I am having a hard time understanding the calculation behind the scenes.

- Is it just the embeddings that are used during calculation like typical collaborative filtering models?

- Or do the weights and biases get initialized for the linear layers as well? This would result in two sets of random parameters (the embeddings and the parameters of the linear layers).

- If it is the second option, do both the embeddings and the weights and biases of the linear layers get updated?

- I tried to investigate the actual numbers: the embedding values differ before and after training, but I was unable to track down the parameters of the linear layers.

I appreciate your assistance.

If this all sounds confusing: I am just trying to understand the calculation in the forward method and the parameter updates when using the NN in collab_filtering.
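To make the question concrete, here is a minimal sketch of how this could be checked in plain PyTorch. `TinyCollabNN` is a hypothetical stand-in for the book's model (two embeddings feeding a small stack of `nn.Linear` layers), not the fastbook code itself. The sizes, the toy batch, and the single SGD step are all made up for illustration. It lists every registered parameter by name and compares the values before and after one optimizer step.

```python
import torch
from torch import nn

class TinyCollabNN(nn.Module):
    """Hypothetical stand-in: two embeddings followed by linear layers."""
    def __init__(self, n_users=5, n_items=7, n_factors=4, n_act=8, y_range=(0.0, 5.5)):
        super().__init__()
        self.user_factors = nn.Embedding(n_users, n_factors)
        self.item_factors = nn.Embedding(n_items, n_factors)
        self.layers = nn.Sequential(
            nn.Linear(2 * n_factors, n_act),
            nn.ReLU(),
            nn.Linear(n_act, 1),
        )
        self.y_range = y_range

    def forward(self, x):
        # Look up both embeddings and concatenate them (no dot product here).
        embs = torch.cat([self.user_factors(x[:, 0]), self.item_factors(x[:, 1])], dim=1)
        lo, hi = self.y_range
        # Squash the network output into the rating range.
        return torch.sigmoid(self.layers(embs)) * (hi - lo) + lo

torch.manual_seed(0)
model = TinyCollabNN()

# Every tensor the optimizer will see, embeddings and linear layers alike.
param_names = [name for name, _ in model.named_parameters()]
before = {name: p.detach().clone() for name, p in model.named_parameters()}

# Made-up batch: (user index, item index) pairs and target ratings.
x = torch.tensor([[0, 1], [2, 3], [4, 6]])
y = torch.tensor([[4.0], [5.0], [4.5]])

opt = torch.optim.SGD(model.parameters(), lr=0.1)
loss = nn.functional.mse_loss(model(x), y)
opt.zero_grad()
loss.backward()
opt.step()

# True for each parameter tensor whose values differ after the step.
changed = {name: not torch.equal(before[name], p.detach())
           for name, p in model.named_parameters()}

for name in param_names:
    print(f"{name:22s} shape={tuple(before[name].shape)} changed={changed[name]}")
```

In this sketch the linear layers show up under names like `layers.0.weight` and `layers.0.bias`, next to `user_factors.weight` and `item_factors.weight`. If the fastai model is built the same way, calling `named_parameters()` on `learn.model` should be one way to track down the linear-layer parameters.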

Thank you very much for your help!