I’m looking for clever ways to setup an ImagePoints task (similar to lesson3-head-pose: predict the center of the head from the image) for my own custom application. My application is to predict the inside corners of a hollow square obstacle gate.

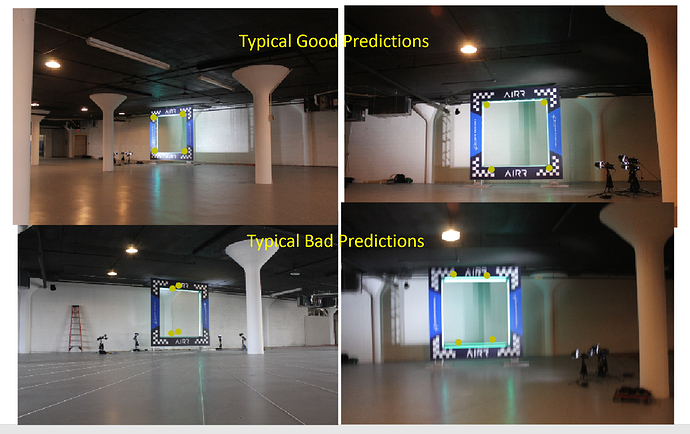

The model trains well for ~2/3 of the images but has large misses for the other 1/3 of images - without being apparent to a human what makes that fail-image so challenging. It is usually the x-coord which is significantly wrong, and y is almost always correct. See below:

- My first thought was that loss for a point should be the cartesian distance (xy-distance^2 = (x-loss)^2 + (y-loss)^2. But since I’m already using MSE for each x and y, my loss is equivalent to cartesian anyways. Can anyone confirm this?

- Second thought: maybe the prediction is bi-modal over the entire x-dimension (e.g. x=200 is the max prediction value but x=700 is the second highest prediction value, while x=500 is less likely then either) So this task might be handled as a classification on the set of intervals over x, and we could predict top-3 x-value regions for each image.

- Is there some way to help the network notice the semantic relationship between the points? Since I’m always looking for a polygon, should I be doing region-segmentation like lesson-3-camvid?

Thank you for any guidance, I’m looking especially for fastai-lessons, library-techniques, or fastai-student-projects that attempt similar tasks.

For reference here’s the datablock-code, and the training outputs and visual evaluations

Give me a few days to look over your notes and compare and I’ll get back with you as finals are here at my Uni

Give me a few days to look over your notes and compare and I’ll get back with you as finals are here at my Uni