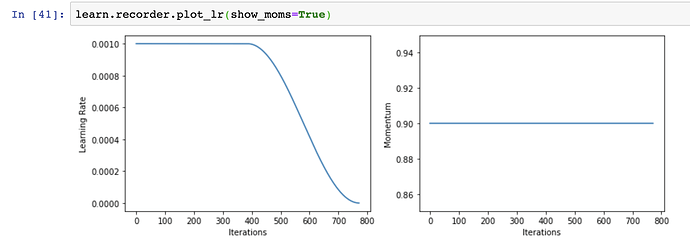

I propose that we reconsider using OneCycle. Unlike Adam, neither RAdam nor Novograd needs warmup. We can take advantage of this property and introduce a different learning rate policy.

I’ve used a flat LR followed by cosine annealing.
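To make the flat-then-cosine idea concrete, here is a minimal sketch of such a schedule in plain Python. The function name `flat_then_cosine` and the `flat_frac` parameter (the share of training spent at the constant LR) are my own illustrative assumptions, as is the 0.5 default; this is not necessarily how the linked repo implements it.

```python
import math

def flat_then_cosine(step, total_steps, base_lr, flat_frac=0.5):
    """Return the learning rate for a given step.

    Hypothetical helper: holds base_lr constant for the first
    flat_frac of training, then anneals it to 0 along a half cosine.
    flat_frac=0.5 is an assumed default, not a value from the post.
    """
    flat_steps = int(total_steps * flat_frac)
    if step < flat_steps:
        # Flat phase: no warmup, just the base learning rate.
        return base_lr
    # Progress through the annealing phase, from 0.0 to 1.0.
    progress = (step - flat_steps) / max(1, total_steps - flat_steps)
    # Half-cosine decay from base_lr down to 0.
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * progress))
```

Calling this once per optimizer step and writing the result into each parameter group's `lr` would apply the policy; where the flat phase should end is something to tune.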

I wrote a simple script that runs training 20 times for 5 epochs each and calculates the mean and std. So far it looks good.
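The repeated-runs measurement can be sketched roughly like this. `train_once` is a hypothetical callable standing in for one full 5-epoch training run that returns the final accuracy; the real script may be structured differently.

```python
import statistics

def summarize_runs(train_once, n_runs=20):
    """Run training n_runs times and return (mean, std) of the accuracy.

    train_once is a placeholder (an assumption): it takes a run index
    and returns the final validation accuracy of that run as a float.
    """
    accuracies = [train_once(run) for run in range(n_runs)]
    # statistics.stdev computes the sample standard deviation (n - 1).
    return statistics.mean(accuracies), statistics.stdev(accuracies)
```

Reporting the std alongside the mean helps show whether a gain over the leaderboard is larger than the run-to-run noise.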

updated.

https://github.com/mgrankin/over9000

Imagenette 128 scored 0.8746 over 20 runs with Over9000. That is 1.69% higher than the leaderboard (LB). Imagewoof gained +2.89%.