Hopefully I won’t bore everyone with a wall of text, but I’ll make a list of issues I’ve identified, and then I’ll try to answer the OP’s questions.

A) It appears that some of the scores/accuracies for the baseline have been understated.

When rerunning baselines a few months ago I obtained better results:

E.g.:

Imagewoof/128/5 epochs: 55.2% on the leaderboard vs 62.3% in my reruns (12 runs)

Imagenette/128/5 epochs: 84.6% on the leaderboard vs 85.5% in my reruns (10 runs)

This is why I suggest rerunning baselines when running comparative experiments.

I believe the difference comes from Jeremy running those with multiple GPUs, while I was running things on 1 GPU. The learning rate gets adjusted when using 4 GPUs but might not be the optimal one?

B) Variance

There is a lot of variance from run to run, especially when running only 5 epochs. Therefore we need to run things multiple times, both for baseline and test models.

C) Training time

There should be a way to take into account training time.

I have the top accuracy for Imagewoof/256px/5 epochs. I could have beaten more entries, but didn’t submit those results.

The reason is that my model took 30-50% more time to run. Therefore, it’s a bit unfair to say my model was better.

So I submit that the number of epochs is not a perfect constraint. However, runtime is not perfect either, because it just means you can run more and better GPUs to get on the leaderboard. Hardware scaling is an important thing to explore, but I think we’d want to prioritize creating better models/training methods.

To answer the issues in the OP:

1 - Is there a number of times we should run (i.e. 5 times, 10 times or ?) to prove a new high score…

I believe the sample size depends on how much better your model is. It’s a lot easier to prove a 1% increase than a 0.1% increase.
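To put rough numbers on this, here is a minimal sketch using the textbook normal-approximation formula for comparing two means. The function name `runs_per_group`, the 0.5-point standard deviation, and the 80% power target are all assumptions for illustration, not values from the leaderboard.

```python
import math
from statistics import NormalDist


def runs_per_group(delta, sigma, alpha=0.05, power=0.8):
    """Approximate number of runs needed per model to detect a gap of
    `delta` in mean accuracy, given a run-to-run standard deviation
    `sigma` (two-sided test, equal group sizes, normal approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_beta) * sigma / delta) ** 2)


# Hypothetical: runs vary with a standard deviation of 0.5 accuracy points.
for delta in (1.0, 0.1):
    print(f"gap of {delta} points: ~{runs_per_group(delta, sigma=0.5)} runs per model")
```

The required sample size grows with the inverse square of the gap, so a 10x smaller improvement needs roughly 100x as many runs. The normal approximation is optimistic for very small sample sizes, so treat the output as a lower bound.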

I have a suggestion (and maybe people with more stats knowledge can tell us if it is a bad idea):

Have baselines in the leaderboard include mean, standard deviation, and sample size. Then people with new ideas can test against the baseline and perform a t-test to compare their mean accuracy against the baseline’s. All it seems to take is plugging the values into a calculator such as this: MedCalc's Comparison of means calculator

If you get a better mean accuracy with p<0.05, you have a better model.

We could provide baseline data with 10-20 runs to start with and see if that is enough as we go.
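For anyone who would rather script the comparison than use an online calculator, here is a minimal sketch that needs only the mean, standard deviation, and sample size of each set of runs. It uses Welch’s t-test (which does not assume equal variances), so it may differ slightly from what a pooled-variance calculator reports. The function names and the example numbers are made up for illustration.

```python
import math


def two_sided_p(t, df, steps=2000):
    """Two-sided p-value for Student's t distribution, obtained by
    integrating the pdf from 0 to |t| with Simpson's rule."""
    b = abs(t)
    if b == 0:
        return 1.0
    log_c = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
             - 0.5 * math.log(df * math.pi))

    def pdf(x):
        return math.exp(log_c - (df + 1) / 2 * math.log1p(x * x / df))

    h = b / steps  # steps must be even for Simpson's rule
    total = pdf(0.0) + pdf(b)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * pdf(i * h)
    return max(0.0, 1 - 2 * total * h / 3)


def welch_t_test(mean1, sd1, n1, mean2, sd2, n2):
    """Welch's t-test from summary statistics. Returns (t, df, p)."""
    v1, v2 = sd1 ** 2 / n1, sd2 ** 2 / n2
    t = (mean1 - mean2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df, two_sided_p(t, df)


# Hypothetical numbers: new model vs. baseline, 10 runs each.
t, df, p = welch_t_test(63.1, 0.9, 10, 62.3, 1.0, 10)
print(f"t = {t:.2f}, df = {df:.1f}, p = {p:.3f}")
```

If SciPy is available, `scipy.stats.ttest_ind_from_stats` does the same job from summary statistics and is the more robust choice; the hand-rolled integration is only here to keep the sketch dependency-free.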

2 - Is the new score the average of all X runs above (or the top X of Y runs)…

I’d say it is the average of all runs, reported together with the standard deviation and sample size. Personally, I’ve been using the max accuracy for each run (e.g. if you run 80 epochs, epoch 75 might have the best result).
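As a sketch of that reporting convention, the snippet below takes the per-epoch accuracies of each run, keeps the best epoch of each run, and reports mean, standard deviation, and sample size. The function name `summarize_runs` and the accuracy values are hypothetical.

```python
from statistics import mean, stdev


def summarize_runs(runs):
    """`runs` is a list of runs, each a list of per-epoch accuracies.
    Uses the best epoch of each run, then summarizes across runs."""
    best = [max(epoch_accs) for epoch_accs in runs]
    return {"mean": mean(best), "std": stdev(best), "n": len(best)}


# Hypothetical per-epoch accuracies for three 5-epoch runs.
runs = [
    [41.0, 52.3, 57.9, 61.8, 61.2],
    [39.5, 50.1, 58.4, 60.9, 62.6],
    [42.2, 53.0, 59.1, 62.0, 61.7],
]
print(summarize_runs(runs))
```

Taking the best epoch is slightly optimistic compared with taking the last one, so whichever rule is used should be applied to the baseline runs as well.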

3 - The leaderboards currently show that many of the scores were run on 4 GPUs. Since virtually no one has a 4-GPU setup, is that even an issue of note, or is it just a trivial detail?

If the baseline does better on 1 GPU, we should make sure that this is the result we use, so as not to be unfair.

I believe many people have access to 4 GPUs (e.g. on Salamander or vast.ai), and it might be a pain to run 100+ epochs on 1 GPU.

Maybe we should have a different line on the leaderboard depending on # of GPUs.

Whatever we decide to do, we just have to remember that our objective is to identify improvements to models and to how we train them, not just to beat the leaderboard.

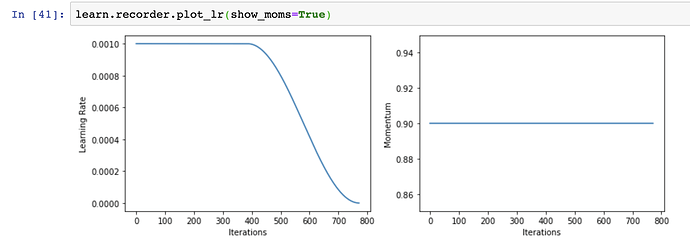

4 - The learning rate is also noted on the leaderboards - but is that a requirement to run under that learning rate or is it there to allow you to replicate the current high score?

I think all the parameters should be there to replicate the current high score, and not as a requirement for future tests. However, we should make sure that those parameters are the best ones for the current baseline models. Otherwise, your improved model/method might not actually be better, and your new high score might be an artefact of having picked a better lr.

In your example, you picked a higher lr for RAdam and beat Adam. But did you test that higher lr with Adam?

I think we will always find flaws in the leaderboard because new situations will come up. What we have to make sure is that we design our experiments in a way that demonstrates properly that our new ideas are better. If we act that way, then we can improve how the leaderboard works as we go.

Suggestions:

We could rerun some of the baselines, on 1 GPU, making sure the learning rate is decent, over 10-20 runs (the fewer the epochs the bigger the sample size).

Possibly, when someone comes up with a better result, we might want to keep the original baseline results just in case the new model is very new/unfamiliar and people still want to compare against a generic xresnet50.