Hey everyone! I’m Pranav, a junior studying CSE at BMSCE, Bengaluru, India.

- I developed neograd, a deep learning framework built from scratch in Python and NumPy, with a PyTorch-like API.

- It has autograd (automatic differentiation) that supports scalars, vectors, and matrices and computes all of their gradients automatically during the backward pass. It is also fully compatible with NumPy broadcasting.

- Layers such as Linear, 2D and 3D Convolutions and MaxPooling, along with activation layers such as ReLU, Sigmoid, Softmax, and Tanh, are all built from the ground up.

- It can also save models and parameters to disk and load them back.
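To make the autograd and broadcasting points concrete, here is a minimal sketch of reverse-mode automatic differentiation over NumPy arrays. It is not neograd's implementation: the `Tensor` class, its methods, and the `unbroadcast` helper are hypothetical names chosen for illustration, and `@` is assumed to act on 2D arrays only. The part that matters for broadcasting is `unbroadcast`: when a bias of shape `(2,)` is added to a `(batch, 2)` activation, its gradient has to be summed back down to shape `(2,)`.

```python
import numpy as np


def unbroadcast(grad, shape):
    """Sum `grad` back down to `shape`, undoing NumPy broadcasting (hypothetical helper)."""
    # Broadcasting may have prepended axes; sum them away
    while grad.ndim > len(shape):
        grad = grad.sum(axis=0)
    # Axes that were size 1 in the original were stretched; sum them, keeping the axis
    for axis, size in enumerate(shape):
        if size == 1 and grad.shape[axis] != 1:
            grad = grad.sum(axis=axis, keepdims=True)
    return grad


class Tensor:
    """Minimal reverse-mode autodiff node (illustrative only, not neograd's API)."""

    def __init__(self, data, parents=()):
        self.data = np.asarray(data, dtype=float)
        self.grad = np.zeros_like(self.data)
        self.parents = parents    # tensors this one was computed from
        self.backward_fn = None   # propagates self.grad into the parents' grads

    def __add__(self, other):
        out = Tensor(self.data + other.data, (self, other))
        def backward_fn():
            self.grad += unbroadcast(out.grad, self.data.shape)
            other.grad += unbroadcast(out.grad, other.data.shape)
        out.backward_fn = backward_fn
        return out

    def __matmul__(self, other):
        # Assumes both operands are 2D matrices
        out = Tensor(self.data @ other.data, (self, other))
        def backward_fn():
            self.grad += out.grad @ other.data.T
            other.grad += self.data.T @ out.grad
        out.backward_fn = backward_fn
        return out

    def relu(self):
        out = Tensor(np.maximum(self.data, 0), (self,))
        def backward_fn():
            # Gradient flows only where the input was positive
            self.grad += out.grad * (self.data > 0)
        out.backward_fn = backward_fn
        return out

    def sum(self):
        out = Tensor(self.data.sum(), (self,))
        def backward_fn():
            self.grad += np.ones_like(self.data) * out.grad
        out.backward_fn = backward_fn
        return out

    def backward(self):
        # Topologically sort the graph so each node runs after everything that uses it
        order, seen = [], set()
        def visit(t):
            if id(t) not in seen:
                seen.add(id(t))
                for p in t.parents:
                    visit(p)
                order.append(t)
        visit(self)
        self.grad = np.ones_like(self.data)  # d(output)/d(output) = 1
        for t in reversed(order):
            if t.backward_fn is not None:
                t.backward_fn()


# Usage: a tiny linear layer followed by ReLU, with the bias broadcast over a batch of 2
x = Tensor([[1.0, -2.0], [3.0, 4.0]])
W = Tensor([[0.5, -1.0], [1.0, 2.0]])
b = Tensor([0.1, -0.2])
loss = (x @ W + b).relu().sum()
loss.backward()
print(b.grad)  # → [1. 1.]
```

Each backward function accumulates with `+=` rather than assigning, so a tensor that feeds into several operations receives the sum of all its gradient contributions.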

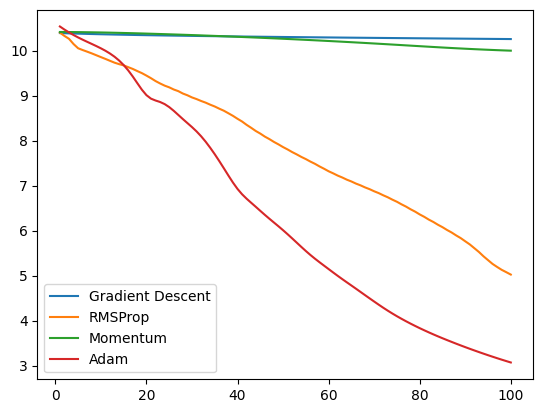

- Popular optimization algorithms such as Adam, RMSProp, and Momentum are included.
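As a reference point for the optimizers, here is a minimal sketch of the standard Adam update rule on plain NumPy arrays. The `Adam` class and its `step(grads)` signature are hypothetical and not necessarily how neograd structures its optimizers; the defaults `lr=0.001`, `beta1=0.9`, `beta2=0.999`, `eps=1e-8` are the commonly used values.

```python
import numpy as np


class Adam:
    """Minimal Adam optimizer over a list of NumPy parameter arrays (hypothetical API)."""

    def __init__(self, params, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
        self.params = params
        self.lr, self.beta1, self.beta2, self.eps = lr, beta1, beta2, eps
        self.m = [np.zeros_like(p) for p in params]  # first-moment (mean) estimates
        self.v = [np.zeros_like(p) for p in params]  # second-moment (uncentered variance) estimates
        self.t = 0  # step counter used for bias correction

    def step(self, grads):
        self.t += 1
        for p, g, m, v in zip(self.params, grads, self.m, self.v):
            # Update the moment estimates in place
            m[:] = self.beta1 * m + (1 - self.beta1) * g
            v[:] = self.beta2 * v + (1 - self.beta2) * g * g
            # Correct the bias toward zero caused by initializing m and v at zero
            m_hat = m / (1 - self.beta1 ** self.t)
            v_hat = v / (1 - self.beta2 ** self.t)
            # In-place update, so the caller's arrays change
            p -= self.lr * m_hat / (np.sqrt(v_hat) + self.eps)


# Usage: minimize sum((w - 3)^2), whose gradient is 2 * (w - 3)
w = np.array([0.0, 10.0])
opt = Adam([w], lr=0.1)
for _ in range(500):
    opt.step([2 * (w - 3.0)])
print(w)  # both entries end up near 3.0
```

Because both moment estimates start at zero, the bias-correction terms keep early steps from being too small; on the very first step each parameter moves by roughly `lr` in the direction opposite to its gradient's sign.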

I initially built this to understand how automatic differentiation works under the hood in PyTorch, but later extended it into a complete framework. It is meant as an educational tool that helps beginners understand how large frameworks like PyTorch work internally, with code that is intuitive and easy to read. I just released v0.0.3 today.

I’m looking for feedback on which features to add next and what could be improved.

Please check out the GitHub repo at https://github.com/pranftw/neograd

Colab notebooks to get started:

- https://colab.research.google.com/drive/1D4JgBwKgnNQ8Q5DpninB6rdFUidRbjwM?usp=sharing

- https://colab.research.google.com/drive/184916aB5alIyM_xCa0qWnZAL35fDa43L?usp=sharing

Thanks!