Hi guys!

To give some context: I have been experimenting with fashion images from the DeepFashion dataset and wanted to learn about classification with CNNs, so I built a ResNet-50 classifier. It performs okay-ish.

So I started looking for ways to understand where the model gets confused the most. As mentioned in this thread, I used Class Activation Maps (CAM) to visualise my predictions, but I cannot seem to find much help with this.

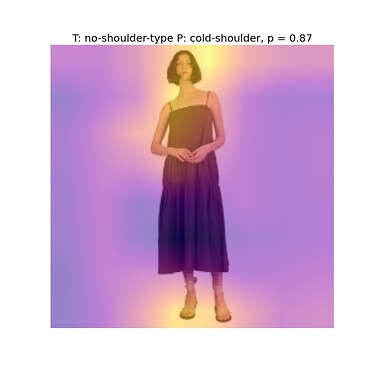

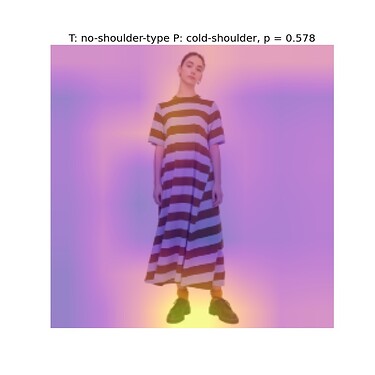

For example, I am trying to predict whether a dress is off-shoulder, cold-shoulder, no-shoulder or one-shoulder. I built a single-label, 4-class classifier and used CAM to overlay the activation map on the images where the model was mostly making mistakes.
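For reference, CAM works for a network that ends in global average pooling followed by a single linear layer, as ResNet-50 does: the map for class c is the sum of the last conv block's feature maps, weighted by row c of that linear layer. Below is a minimal NumPy sketch under stated assumptions: `features` is the (2048, 7, 7) output of the last conv block for one 224×224 image, `fc_weights` is the (4, 2048) classifier weight matrix, and the helper name `class_activation_map` and the nearest-neighbour upsampling are illustrative choices, not a library API.

```python
import numpy as np

def class_activation_map(features, fc_weights, class_idx, upsample=32):
    """Minimal CAM sketch for one image (hypothetical helper, not a library API).

    features:   (K, H, W) activations of the last conv block, e.g. (2048, 7, 7)
    fc_weights: (C, K) weights of the linear layer after global average pooling
    class_idx:  class to explain, e.g. the true label T or the predicted label P
    upsample:   integer factor for nearest-neighbour upsampling (7 * 32 = 224)
    """
    # Weighted sum over channels: cam[y, x] = sum_k w[class_idx, k] * features[k, y, x]
    cam = np.tensordot(fc_weights[class_idx], features, axes=([0], [0]))  # (H, W)
    # Keep positive evidence only and rescale to [0, 1] for display
    cam = np.maximum(cam, 0)
    if cam.max() > 0:
        cam = cam / cam.max()
    # Repeat each cell in an upsample x upsample block to match the image size
    return np.kron(cam, np.ones((upsample, upsample)))

# Random stand-ins for real ResNet-50 activations and classifier weights
rng = np.random.default_rng(0)
features = rng.random((2048, 7, 7))
fc_weights = rng.normal(size=(4, 2048))  # 4 shoulder-type classes
heatmap = class_activation_map(features, fc_weights, class_idx=2)
print(heatmap.shape)  # (224, 224)
```

Because global average pooling is linear, the spatial mean of the map before the ReLU and rescaling equals the class logit minus its bias, so bright regions are where that class's score comes from. If the model is torchvision's `resnet50` (an assumption; the framework isn't stated here), `features` would typically be captured with a forward hook on `model.layer4` and `fc_weights` would be `model.fc.weight`. Computing the map for both T and P on a misclassified image shows which regions push towards each label.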

Since the shoulder type is what I am trying to predict, I can see the neck-shoulder region light up in the images, but many other regions light up along with it, as shown in the figures above. Sometimes the model also predicts the wrong attribute with very high confidence, i.e. the probability of the predicted label is above 80%.
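One way to collect exactly those high-confidence mistakes for CAM inspection is to filter on the softmax output. A minimal NumPy sketch, assuming the raw logits and integer true labels for the validation set are available as arrays; `confident_mistakes` is a hypothetical helper and the 0.8 threshold mirrors the 80% mentioned above.

```python
import numpy as np

def softmax(logits):
    # Subtract the row max for numerical stability
    z = logits - logits.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def confident_mistakes(logits, true_labels, threshold=0.8):
    """Indices of wrong predictions with probability > threshold, most confident first."""
    probs = softmax(logits)
    pred = probs.argmax(axis=1)
    conf = probs.max(axis=1)
    idx = np.flatnonzero((pred != true_labels) & (conf > threshold))
    return idx[np.argsort(-conf[idx])], pred, conf

# Toy logits for 3 images and 4 classes, for illustration only
logits = np.array([[4.0, 0.0, 0.0, 0.0],   # confident, predicts class 0
                   [0.5, 0.4, 0.3, 0.2],   # unsure
                   [0.0, 0.0, 5.0, 0.0]])  # confident, predicts class 2
labels = np.array([1, 0, 2])
idx, pred, conf = confident_mistakes(logits, labels)
print(idx)  # [0]
```

Only the first image is both wrong and above the threshold, so it is the one whose CAM would be worth comparing for T and P.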

In the figures above, T is the true label and P is the predicted label. The training data is balanced, with almost 800 images per class (roughly 3,200 images in total); I trained for 21 epochs with the Adam optimizer and reached a final validation accuracy of close to 85%. However, when I look at the images the model misclassifies, I still cannot figure out why it gets them wrong. Also, in what other ways could CAM be used to interpret the results?
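Before looking at misclassified images one by one, a confusion matrix on the validation set shows which shoulder types get mixed up most often. A minimal NumPy sketch; the label order in `CLASSES`, the helper names and the toy labels are hypothetical.

```python
import numpy as np

# Hypothetical label order; replace with the order used when training
CLASSES = ["off-shoulder", "cold-shoulder", "no-shoulder", "one-shoulder"]

def confusion_matrix(true_labels, pred_labels, n_classes):
    """Rows are true classes (T), columns are predicted classes (P)."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    np.add.at(cm, (true_labels, pred_labels), 1)  # count each (T, P) pair
    return cm

def most_confused_pairs(cm, class_names, top=3):
    """Largest off-diagonal cells as (true name, predicted name, count)."""
    off = cm.copy()
    np.fill_diagonal(off, 0)  # ignore correct predictions
    order = np.argsort(off, axis=None, kind="stable")[::-1][:top]
    pairs = []
    for flat in order:
        t, p = np.unravel_index(flat, off.shape)
        if off[t, p] > 0:
            pairs.append((class_names[t], class_names[p], int(off[t, p])))
    return pairs

# Toy validation labels and predictions, for illustration only
true = np.array([0, 0, 1, 1, 1, 2, 3, 3])
pred = np.array([0, 1, 1, 0, 0, 2, 3, 2])
cm = confusion_matrix(true, pred, len(CLASSES))
recall = cm.diagonal() / cm.sum(axis=1)  # per-class accuracy
print(cm)
print(most_confused_pairs(cm, CLASSES))
```

With an overall accuracy of about 85%, the off-diagonal cells indicate whether the errors are spread evenly or concentrated in one pair (for instance cold-shoulder vs off-shoulder), which narrows down which CAMs to compare.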

Any help would be much appreciated.

Thanks!