Hi there,

I am working on productionizing a computer vision model that was trained using FastAI. I was sent a saved model file which I am able to successfully load on my machine using load_learner('filename'). I was able to test the model by predicting on a few images with learner.predict('my_image_filename.jpg'), and found that the predictions matched what I expected.

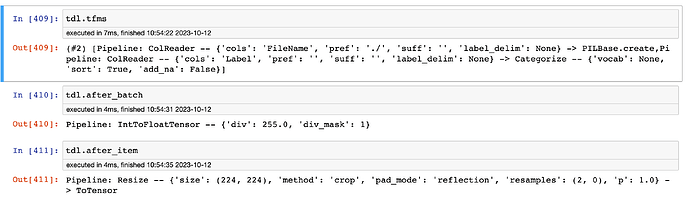

In our production environment, I will need to perform model inference on a single image, but it is a requirement that the inference uses a pure PyTorch forward pass (the system would require significant rearchitecting to accommodate a fastai Learner.predict inference).

I need to understand how Learner.predict loads and processes a single image so that I can replicate this in pure PyTorch. Is there any way to extract that portion of the code so that I can run it to load/process/transform my images into a tensor that can then be sent through the forward method of learner.model? I’ve been reading through the docs and forums to try and find this, but I haven’t been able to find where that specific processing code exists, though it must exist since it has to be executed for the predict function to work.

I have tried to reverse engineer the methods reading the docs and the forums and re-create them in pure PyTorch, and I’ve got somewhat close to results that match Learner.predict, but still significantly off such that the model performance will be greatly degraded if I use it in production.

Can anyone point me to any attributes/methods that might help uncover what the predict method is doing to load/process images?

For some more specific context, the dataloader that was used to train the model was defined as

dls = ImageDataLoaders.from_df(train_df_f,

valid_col='is_valid',

seed=365,

fn_col='FileName',

label_col='Label',

y_block=CategoryBlock,

bs=BATCH_SIZE,

item_tfms=Resize(224)

)

I’ve attempted to recreate this in pure PyTorch using several different methods, and here is the one that got the most similar output to Learner.predict, despite still being significantly different on many test images:

from PIL import Image

# Load image

input_image = Image.open("my_image.jpg").convert("RGB")

# Convert to torch.Tensor values in [0, 255]

converter = PILToTensor()

tensor_image = converter(img)

# Apply necessary transforms

tensor_image = (tensor_image.float() / 255.0)

resize = T.Resize(224)

normalize = T.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

inputs = normalize(resize(tensor_image)).unsqueeze(0)

model = learner.model

model.eval()

with torch.no_grad():

predictions = model(inputs)

predicted_probabilities = predictions.softmax(1)

Using this PyTorch code gives directionally correct predictions, but with much less confidence than the fastai predict method.

Please let me know any pointers, really hoping to find a way to get to parity without rewriting my ML inference library to accommodate fastai for this single use case.