I’ve read the inference tutorial, and I can load a trained learner and do a single prediction with .predict(), but I don’t see how to easily do batch inference. I can construct a batch tensor and run it directly through learner.model(data), but it seems like there’s probably a smarter way to do that. Can anyone point me in the right direction?


Try .pred_batch(), it’s what .predict() is actually calling behind the scenes (with a batch containing a single item).
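To make the "batch containing a single item" idea concrete, here is a minimal sketch in plain PyTorch rather than fastai. The small `model` and the random `items` are hypothetical stand-ins for `learner.model` and your preprocessed inputs, so treat this as an illustration under those assumptions, not as what fastai does internally.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical stand-in for learner.model; any nn.Module is called the same way.
model = nn.Sequential(
    nn.Conv2d(3, 8, kernel_size=3, padding=1),
    nn.ReLU(),
    nn.AdaptiveAvgPool2d(1),
    nn.Flatten(),
    nn.Linear(8, 4),
)
model.eval()  # inference mode: no dropout, batchnorm uses running statistics

# Four hypothetical preprocessed items, each shaped (channels, height, width).
items = [torch.randn(3, 32, 32) for _ in range(4)]

with torch.no_grad():
    # One forward pass per item, each wrapped as a batch of size one.
    single_preds = torch.cat([model(x.unsqueeze(0)) for x in items])
    # One forward pass over all items stacked along a new batch dimension.
    batch_preds = model(torch.stack(items))

print(batch_preds.shape)
print(torch.allclose(single_preds, batch_preds, atol=1e-4))
```

Both paths should give the same predictions up to floating-point tolerance; the only difference is how many forward passes are made.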


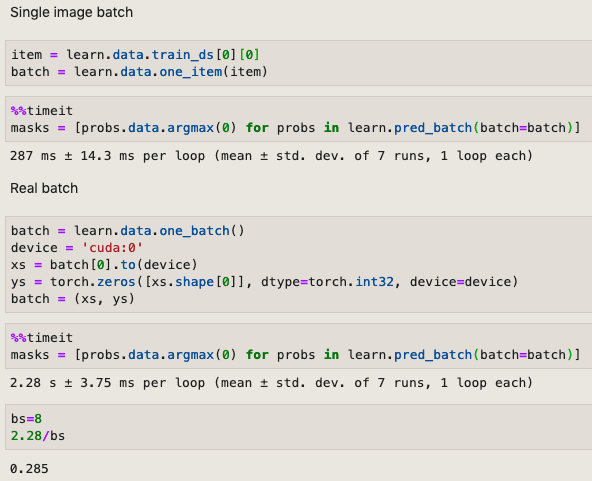

@yeldarb Hi, I seem to be getting the same average inference speed using a loop of predict versus a single pred_batch. Is that normal? I thought batch prediction would be faster.


Same experience here. No speed differences.

Internally, .predict() wraps your image in “a batch of size one”, which is why the average inference speed comes out the same.
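If you want to check this on your own setup, a rough timing sketch along these lines may help. It uses plain PyTorch with a hypothetical toy `model` and random `items`, and the helper `time_call` is made up for this example. It times only the forward pass, not data loading or preprocessing, and the numbers will vary with hardware and model size.

```python
import time

import torch
import torch.nn as nn


def time_call(fn, repeats=5):
    """Return the mean wall-clock seconds per call of fn over `repeats` runs."""
    fn()  # warm-up call, not timed
    if torch.cuda.is_available():
        torch.cuda.synchronize()  # wait for pending GPU work before timing
    start = time.perf_counter()
    for _ in range(repeats):
        fn()
    if torch.cuda.is_available():
        torch.cuda.synchronize()
    return (time.perf_counter() - start) / repeats


# Hypothetical toy model and inputs; swap in your own model and tensors.
model = nn.Sequential(nn.Linear(64, 64), nn.ReLU(), nn.Linear(64, 10)).eval()
items = [torch.randn(64) for _ in range(32)]


def loop_predict():
    # One forward pass per item, each as a batch of size one.
    with torch.no_grad():
        return [model(x.unsqueeze(0)) for x in items]


def batch_predict():
    # A single forward pass over all items stacked into one batch.
    with torch.no_grad():
        return model(torch.stack(items))


loop_time = time_call(loop_predict)
batch_time = time_call(batch_predict)
print(f"loop:  {loop_time / len(items):.6f} s/item")
print(f"batch: {batch_time / len(items):.6f} s/item")
```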
