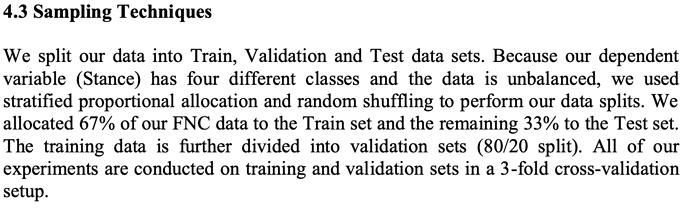

The picture above is what I’m trying to replicate, but I’m not sure I’m going about it the right way. I’m working with the FakeNewsChallenge dataset, which is extremely unbalanced, and I’m trying to replicate and improve on a method used in a paper. The class distribution is:

- Agree: 7.36%
- Disagree: 1.68%
- Discuss: 17.82%
- Unrelated: 73.13%
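A quick way to confirm these proportions on the label array is to count each class with NumPy's `np.unique(..., return_counts=True)`. This is a minimal sketch; the `stance_percentages` helper and the toy labels are hypothetical and not taken from the real dataset.

```python
import numpy as np

def stance_percentages(labels):
    """Return {class_label: percentage of samples} for a 1-D label array."""
    classes, counts = np.unique(labels, return_counts=True)
    return {str(c): 100.0 * n / len(labels) for c, n in zip(classes, counts)}

# Toy labels for illustration only (not the real FakeNewsChallenge data)
toy = np.array(["unrelated"] * 73 + ["discuss"] * 18 + ["agree"] * 7 + ["disagree"] * 2)
print(stance_percentages(toy))
```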

I’m splitting the data as follows:

- Split the dataset 67/33: train 67%, test 33%.
- Split the training portion further 80/20 for validation: training 80%, validation 20%.
- Then split the training and validation data using 3-fold cross-validation.
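One way to keep the rare classes represented in every subset is to pass `stratify=` to both `train_test_split` calls, so each split preserves the original class proportions. This is a minimal sketch assuming `x` and `y` are NumPy arrays; the synthetic data and the `random_state` values are placeholders, not part of the original setup.

```python
import numpy as np
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
# Synthetic, imbalanced stand-in for the real features/labels (4 stance classes)
y = np.array([0] * 730 + [1] * 180 + [2] * 74 + [3] * 16)
x = rng.normal(size=(len(y), 5))

# 67% train / 33% test, preserving class proportions
x_train1, x_test, y_train1, y_test = train_test_split(
    x, y, test_size=0.33, stratify=y, random_state=42)

# Split the training portion again 80/20 into train/validation, also stratified
x_train2, x_val, y_train2, y_val = train_test_split(
    x_train1, y_train1, test_size=0.20, stratify=y_train1, random_state=42)

# Print each subset's size and per-class proportions
for name, labels in [("train", y_train2), ("val", y_val), ("test", y_test)]:
    print(name, len(labels), np.round(np.bincount(labels, minlength=4) / len(labels), 3))
```

Without `stratify=`, a random split of a class this small can leave the validation or test set with only a handful of disagree examples, or none at all.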

As an aside, getting the model to handle the 1.68% disagree class, and the agree class, has been extremely difficult.

This is the part that doesn’t fully make sense to me: is the validation set created in the 80/20 split also stratified by the 3-fold split?
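`StratifiedKFold` only stratifies the arrays passed to its `split` method, so a separate `x_val`/`y_val` from the 80/20 split is never touched by it. The sketch below uses synthetic labels standing in for `y_train2` (an assumption, as is the `random_state`) and prints the class counts of each held-out fold, showing that every fold keeps roughly the same class mix and that each sample is held out exactly once.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold

# Synthetic, imbalanced labels standing in for y_train2
y_train2 = np.array([0] * 390 + [1] * 96 + [2] * 40 + [3] * 10)
x_train2 = np.zeros((len(y_train2), 1))  # feature values do not affect the split

skf = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)
fold_counts = []
for fold, (train_index, test_index) in enumerate(skf.split(x_train2, y_train2)):
    # Count how many samples of each class land in this held-out fold
    counts = np.bincount(y_train2[test_index], minlength=4)
    fold_counts.append(counts)
    print(f"fold {fold}: held-out size={len(test_index)}, class counts={counts}")
```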

Here is what I have so far:

```python
from sklearn.model_selection import StratifiedKFold, train_test_split

# Split data into 67% training set and 33% test set
x_train1, x_test, y_train1, y_test = train_test_split(x, y, test_size=0.33)

# Split the training set further: 80% training, 20% validation
x_train2, x_val, y_train2, y_val = train_test_split(x_train1, y_train1, test_size=0.20)

skf = StratifiedKFold(n_splits=3, shuffle=True)
skf.get_n_splits(x_train2, y_train2)  # returns the number of folds (3)

# Indexing with [train_index] assumes NumPy arrays; use .iloc for pandas objects
for train_index, test_index in skf.split(x_train2, y_train2):
    x_train_cros, x_test_cros = x_train2[train_index], x_train2[test_index]
    y_train_cros, y_test_cros = y_train2[train_index], y_train2[test_index]
```

Would I run `skf` again on the validation set as well? And where are the test folds created by `skf` used when training the Sequential model?
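In k-fold cross-validation, each fold's held-out part typically plays the role of the validation set: a fresh model is fit on the fold's training indices, scored on the held-out indices, and the scores are averaged, while the separate 33% test set is used only once at the end. This is one common arrangement, not necessarily the paper's exact procedure. The sketch swaps in scikit-learn's `LogisticRegression` for the Keras `Sequential` model so it runs on its own; `build_model`, the synthetic data, and macro-F1 as the metric are assumptions for illustration.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import f1_score
from sklearn.model_selection import StratifiedKFold, train_test_split

def build_model():
    """Hypothetical factory returning a fresh, untrained model for each fold.
    With Keras, this would build and compile a new Sequential model instead."""
    return LogisticRegression(max_iter=1000)

rng = np.random.default_rng(0)
y = np.array([0] * 730 + [1] * 180 + [2] * 74 + [3] * 16)
x = rng.normal(size=(len(y), 4)) + y[:, None]  # class-dependent shift so classes are learnable

x_train, x_test, y_train, y_test = train_test_split(
    x, y, test_size=0.33, stratify=y, random_state=42)

skf = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)
fold_scores = []
for train_index, val_index in skf.split(x_train, y_train):
    model = build_model()
    model.fit(x_train[train_index], y_train[train_index])
    # The held-out fold serves as the validation data for this round
    preds = model.predict(x_train[val_index])
    fold_scores.append(f1_score(y_train[val_index], preds, average="macro"))

print("CV macro-F1 per fold:", np.round(fold_scores, 3))
print("mean CV macro-F1:", np.mean(fold_scores))

# After choosing settings via CV, refit on all training data and
# evaluate on the untouched test set exactly once.
final_model = build_model().fit(x_train, y_train)
test_f1 = f1_score(y_test, final_model.predict(x_test), average="macro")
print("test macro-F1:", test_f1)
```

Under this arrangement the cross-validation folds replace a fixed 80/20 validation split rather than being run on top of it.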

Citation for the method I’m using:

Thota, Aswini; Tilak, Priyanka; Ahluwalia, Simrat; and Lohia, Nibrat (2018) “Fake News Detection: A Deep Learning Approach,” SMU Data Science.