I’m using detectron2 for object detection in a Zindi challenge where the task is to detect the faces of sea turtles and return their bounding boxes.

I would like to know how to normalize the bounding boxes when creating the submission CSV file. I’ve trained a decent model that draws bounding boxes around the turtles’ faces, but when I submit to Zindi, I get a low score.

I suspect this is because I’m not normalizing the bbox coordinates properly.

Currently, I normalize each bbox for submission with the following code:

```python
# Divide each coordinate by the matching image dimension
new_lst = [val[0] / img_w, val[1] / img_h, val[2] / img_w, val[3] / img_h]

x, y, w, h = new_lst[0], new_lst[1], new_lst[2], new_lst[3]
```

where:

- `val` is the list of raw (pixel) bounding box coordinates, for example `val = [207.4368, 185.5899, 368.4472, 312.9465]`
- `img_w` and `img_h` are the width and height of the image, respectively, for example `img_w = 512`, `img_h = 384`
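For comparison, here is a minimal sketch of the two normalizations that could be meant here. It assumes `val` comes from detectron2's `pred_boxes`, which stores each box as absolute `(x1, y1, x2, y2)` pixel corners rather than `(x, y, w, h)`; whether Zindi expects corners or width/height is an assumption to check against the competition's sample submission. The helper names `normalize_xyxy` and `xyxy_to_normalized_xywh` are hypothetical.

```python
def normalize_xyxy(val, img_w, img_h):
    """Divide (x1, y1, x2, y2) pixel corners by the image size -> corners in [0, 1]."""
    x1, y1, x2, y2 = val
    return [x1 / img_w, y1 / img_h, x2 / img_w, y2 / img_h]


def xyxy_to_normalized_xywh(val, img_w, img_h):
    """Convert (x1, y1, x2, y2) pixel corners to a normalized (x, y, w, h) box,
    where (x, y) is the top-left corner and w, h are the box width and height."""
    x1, y1, x2, y2 = val
    w = x2 - x1  # box width in pixels
    h = y2 - y1  # box height in pixels
    return [x1 / img_w, y1 / img_h, w / img_w, h / img_h]


val = [207.4368, 185.5899, 368.4472, 312.9465]  # example box from the question
img_w, img_h = 512, 384

# If val were (x, y, w, h), its right edge would be x + w = 575.884 > 512,
# i.e. outside the image, which suggests val holds corners, not width/height.
print(val[0] + val[2] > img_w)  # True
print(normalize_xyxy(val, img_w, img_h))
print(xyxy_to_normalized_xywh(val, img_w, img_h))
```

The two results share the same `x` and `y`; they differ only in the last two values, which are the normalized bottom-right corner in the first case and the normalized width and height in the second.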

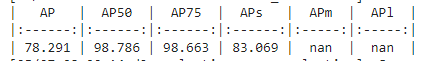

In Colab, the model performed well, with the following metrics:

However, when I submit my CSV file, my Zindi leaderboard score is low because of an incorrect submission format. I’m certain the issue lies in how I normalize the bounding boxes, and that’s where I need help.
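Once the boxes are normalized, writing the submission file could look like the minimal sketch below, which uses Python's standard `csv` module. The column names (`Image_ID`, `x`, `y`, `w`, `h`), the file name, the image ID, and the one-box-per-row layout are hypothetical placeholders; the exact header and box convention should be copied from the competition's sample submission. It also assumes the input boxes are detectron2-style `(x1, y1, x2, y2)` pixel corners.

```python
import csv


def write_submission(path, predictions):
    """Write one row per box with a normalized (x, y, w, h) box.

    predictions: iterable of (image_id, (x1, y1, x2, y2), img_w, img_h),
    with the box given as absolute pixel corners.
    The header below is a hypothetical placeholder.
    """
    with open(path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["Image_ID", "x", "y", "w", "h"])  # hypothetical header
        for image_id, (x1, y1, x2, y2), img_w, img_h in predictions:
            writer.writerow([
                image_id,
                x1 / img_w,         # normalized left edge
                y1 / img_h,         # normalized top edge
                (x2 - x1) / img_w,  # normalized width
                (y2 - y1) / img_h,  # normalized height
            ])


write_submission(
    "submission.csv",
    [("ID_example", (207.4368, 185.5899, 368.4472, 312.9465), 512, 384)],  # hypothetical ID
)
```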

My questions are:

- Am I normalizing the bounding boxes correctly for submission?
- Is there a better way to normalize the bounding boxes to get a better score on Zindi?

Please help me calculate the normalized bounding box. Thanks!