Hello everyone,

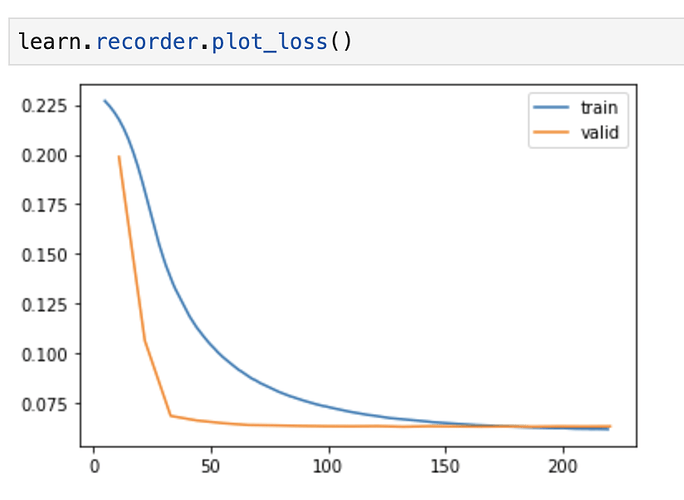

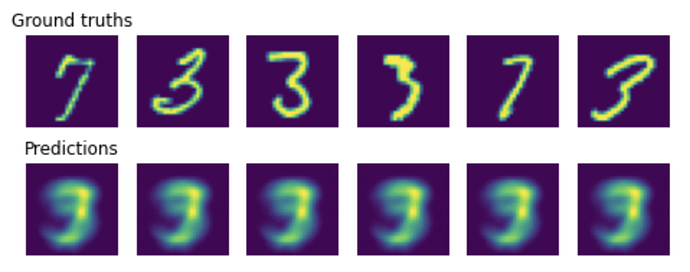

I’ve just tried my hands at writing an autoencoder, following this tutorial. I use the MNIST dataset and the tutorial recommends to build the encoder and decoder as multi-layer perceptrons. However, my autoencoder quickly learns a trivial unique solution that is a roughly and average of letters of the samples (see figs below).

I actually get the same results when trying to run the tutorial itself.

To me, it looks like the decoder is learning to roughly fit the data without using the information from the bottleneck layer. As a result the encoder never learns anything. I tried adding dropouts in the decoder, hoping that it would slow down the decoder learning and allow the encoder to learn better. But it didn’t work.

I know that autoencoders are more often written with a CNN rather than multi-layer perceptron. Is it actually the fundamental problem? Can it be done with an MLP? What could I do to force my encoder to learn?

For reference, here is my implementation using Fastai, and the implementation from the tutorial in vanilla PyTorch.

Thanks,