Hi,

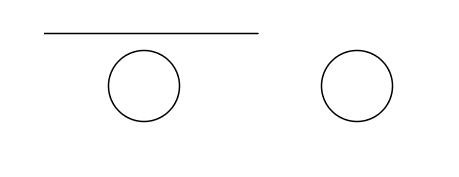

I am currently annotating images for an object detection task. The objects to be detected are usually surrounded by a specific pattern, and in many cases that pattern determines whether a nearby object belongs to the class that should be detected. The attached image shows a simplified example with two circles: the model is supposed to detect the circle on the left and ignore the circle on the right. My question is this: if the line is not included in the bounding box, will the model learn on its own to ignore circles that have no line nearby, or will it partially or completely ignore the context surrounding the annotated bounding boxes?
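To make the setup concrete, here is a minimal sketch of how a toy sample like the two-circle example could be generated with NumPy. The function names, the image size, the position of the line, and the `(x_min, y_min, x_max, y_max)` box convention are all assumptions for illustration; they are not taken from my actual dataset or from any particular detection framework.

```python
import numpy as np

def draw_disk(img, cx, cy, r):
    """Fill a disk of radius r centered at (cx, cy) with ones (in place)."""
    h, w = img.shape
    yy, xx = np.ogrid[:h, :w]
    img[(xx - cx) ** 2 + (yy - cy) ** 2 <= r ** 2] = 1.0

def make_toy_sample(height=128, width=256, radius=12, line_gap=6, line_len=40):
    """Return (image, boxes) for the two-circle toy example.

    Hypothetical layout: two identical circles, with a short horizontal
    line under the left one only. Only the left circle is annotated, and
    the line lies outside its bounding box.
    """
    img = np.zeros((height, width), dtype=np.float32)
    cy = height // 2
    left_cx, right_cx = width // 4, 3 * width // 4

    draw_disk(img, left_cx, cy, radius)
    draw_disk(img, right_cx, cy, radius)

    # Context cue: a line just below the left circle, outside its box.
    line_y = cy + radius + line_gap
    img[line_y, left_cx - line_len // 2 : left_cx + line_len // 2] = 1.0

    # Box format assumed here: (x_min, y_min, x_max, y_max), inclusive pixels.
    box = (left_cx - radius, cy - radius, left_cx + radius, cy + radius)
    return img, [box]

def crop(img, box):
    """Cut out the pixels covered by an inclusive (x_min, y_min, x_max, y_max) box."""
    x0, y0, x1, y1 = box
    return img[y0 : y1 + 1, x0 : x1 + 1]

img, boxes = make_toy_sample()
left_box = boxes[0]
# Same-sized box around the unannotated right circle, for comparison only.
shift = img.shape[1] // 2
right_box = (left_box[0] + shift, left_box[1], left_box[2] + shift, left_box[3])
same_inside = np.array_equal(crop(img, left_box), crop(img, right_box))
```

In this sketch `same_inside` compares the pixels inside the two boxes. With these default parameters the two crops are identical, so the only information that separates the annotated circle from the ignored one lies outside the bounding box, which is exactly the situation I am asking about.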

The attached image is certainly an oversimplified example. Another example would be pupil detection, where the bounding box is drawn around the pupil and excludes the rest of the eye: would the model learn to tell pupils apart from other black circles, given that pupils are always in the center of the eye?