While playing around with SGD in Excel, I thought it would be interesting to show with graphs how the learning rate affects the prediction and the average loss that results from that prediction.

So, the higher the learning rate, the faster we reach the prediction, but how high can we go?

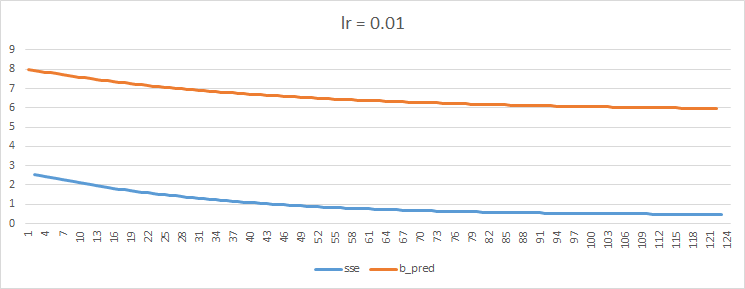

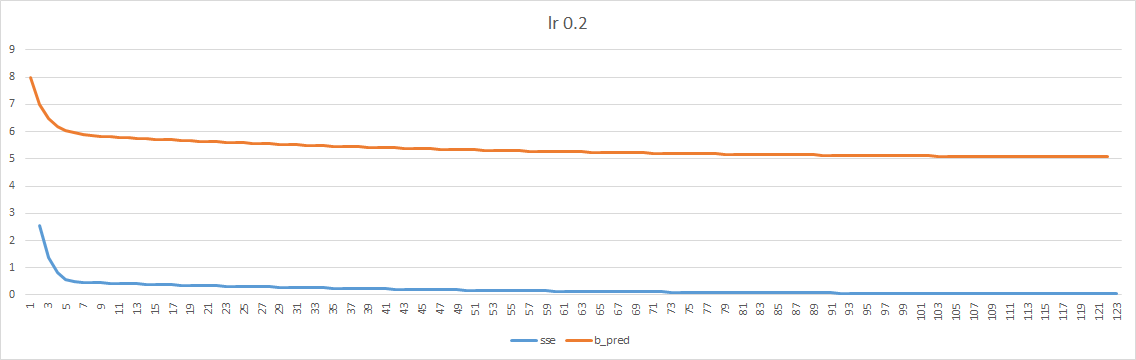

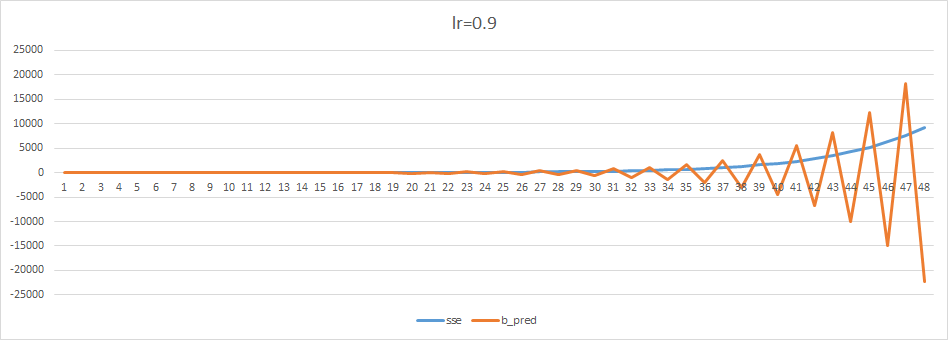

I iterated ~120 times for each learning rate (0.01, 0.2, 0.9) and plotted only the prediction of b at each iteration.

I also added to each graph the average loss calculated with that prediction.

The line I used is y = 2x + 5 (hence a = 2, b = 5).

I started with b_pred = 8.
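
For readers who want to try a similar experiment outside Excel, here is a minimal Python sketch. It is not the spreadsheet behind the plots above, and several details are assumptions: it uses full-batch gradient descent on the mean squared error, it fits both a and b, it uses three made-up sample points (x = 0, 1, 2), and it starts a_pred at 2. Only the line y = 2x + 5, the start value b_pred = 8, the three learning rates and the 120 iterations come from the post, so the exact numbers will differ from the graphs.

```python
def run_gradient_descent(lr, steps=120, a_start=2.0, b_start=8.0):
    """Fit y = a*x + b by full-batch gradient descent on mean squared error.

    Returns two lists with one entry per iteration, recorded before each
    update: the b_pred values and the average losses.
    """
    # Hypothetical sample points on the line y = 2x + 5 (not the author's data).
    xs = [0.0, 1.0, 2.0]
    ys = [2.0 * x + 5.0 for x in xs]
    n = len(xs)

    # a_start is an assumption; the post only states the start value of b_pred.
    a_pred, b_pred = a_start, b_start
    b_history, loss_history = [], []
    for _ in range(steps):
        errors = [a_pred * x + b_pred - y for x, y in zip(xs, ys)]
        loss_history.append(sum(e * e for e in errors) / n)
        b_history.append(b_pred)

        # Gradients of the mean squared error with respect to a and b.
        grad_a = 2.0 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2.0 * sum(errors) / n

        # Step against the gradient, scaled by the learning rate.
        a_pred -= lr * grad_a
        b_pred -= lr * grad_b
    return b_history, loss_history


for lr in (0.01, 0.2, 0.9):
    b_history, loss_history = run_gradient_descent(lr)
    print(f"lr={lr}: final b_pred={b_history[-1]:.4g}, "
          f"final avg loss={loss_history[-1]:.4g}")
```

Plotting `b_history` and `loss_history` for each learning rate should give the same three qualitative pictures: a slow crawl, a quick settle, and a growing zigzag.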

lr=0.01:

As you can see, the prediction is slowly crawling towards the correct b, which is 5. It would take about 3 times as many iterations to converge to b_pred = 5. The average loss slowly converges to 0.

lr=0.2:

b_pred has already converged to 5 (in fewer than 120 iterations), and the average loss has converged to 0.

lr=0.9:

This is interesting. A learning rate that is too high does not allow the prediction to converge; instead, it swings from a prediction that is too high to one that is too low. The gap between consecutive predictions grows with each iteration, and the average loss also increases with each iteration.
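
One way to estimate how high the learning rate can go is to look at the curvature of the loss. For a squared-error loss, each gradient descent step multiplies the error along a given direction by (1 - lr * curvature), so the steps overshoot and grow once lr exceeds 2 divided by the largest curvature. The sketch below works this out under assumptions that are not taken from the post: full-batch gradient descent on the mean squared error, both a and b being fitted, and made-up sample points x = 0, 1, 2. The helper name `max_stable_learning_rate` is hypothetical.

```python
import math


def max_stable_learning_rate(xs):
    """Upper bound on lr for full-batch gradient descent on the MSE of y = a*x + b."""
    n = len(xs)
    # Hessian of the mean squared error with respect to (a, b):
    # 2 * [[mean(x^2), mean(x)], [mean(x), 1]]
    h_aa = 2.0 * sum(x * x for x in xs) / n
    h_ab = 2.0 * sum(xs) / n
    h_bb = 2.0
    # Largest eigenvalue of a symmetric 2x2 matrix, in closed form.
    mean_diag = (h_aa + h_bb) / 2.0
    half_gap = math.sqrt(((h_aa - h_bb) / 2.0) ** 2 + h_ab ** 2)
    largest_eigenvalue = mean_diag + half_gap
    # Each step scales the error along that direction by (1 - lr * eigenvalue);
    # its magnitude stays below 1 only while lr < 2 / eigenvalue.
    return 2.0 / largest_eigenvalue


# Hypothetical sample points; a different data set gives a different threshold.
threshold = max_stable_learning_rate([0.0, 1.0, 2.0])
print(f"largest stable lr is about {threshold:.3f}")
for lr in (0.01, 0.2, 0.9):
    print(lr, "converges" if lr < threshold else "diverges")
```

With these assumed points the threshold comes out at roughly 0.42, which would put 0.01 and 0.2 on the converging side and 0.9 on the diverging side. The threshold depends on the data and on how the loss is averaged, so it should not be read as the limit for the spreadsheet used here.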

I hope you find this helpful for understanding how the learning rate affects the prediction.