I have watched first video in part 1 yesterday and working on the Dogs vs Cats . I like to understand Why and How this mean is calculated for the image . The below link shows the code block where it is done.

- How: Sum up the intensities of the training set for each color channel separately and divide by the total number of pixels. You can do that manually in python with x_train.mean(axis=(0,1,2)).

- Why: Once we know the mean, we can subtract it from all pixel values so the intensities are centered at 0. this helps to increase training speed and accuracy.

Thanks @pietz . I believe Mean mentioned in this problem is related to dogs vs cats dataset. So do we need to change it when doing state farm problem ?

Second in the same code block the image is converted fr RGB to BGR . Any particular reason for the same .

mean centering each channels is a little more precise than just taking the mean across all the channels. that said, ive never come across a problem where centering each channel has lead to better results. but when you do transfer learning you want to adapt precisely to the normalization method the architecture was trained on. no questions asked.

concerning BGR, can you check if the code uses any opencv functions after the conversion? if i’m not mistaken opencv natively works with BGR instead of RGB. other than that i dont know.

Thanks @pietz

I verified the code and it is not using openCV . I too read a blog post which explains the same . May be there is a explanation for that in the next videos

Thanks

The people who trained the original network used caffe with opencv, so we have to convert it to BGR a well

“Apparently it’s because Caffe uses OpenCV which uses BGR. We could swap it in the weights, but I think that could confuse people who looked at the Caffe Model Zoo page.”

So in the Case if I use Keras Pre-Built Model from 2.6 , I believe that Pre-Processing is not required . Let me know your thoughts

I tried to calculate this myself too. This is how I did it (i did with the cats dogs redux data, which is obviously not the original vgg16 training set. But I’ve read that when building your own model from scratch, you should do this over your training set (and exclude your validation set).

Anyway, couldn’t find any good code examples, so I thought I’d post this here. Probably a faster way to do this (this should be much more parellisable as only one core on the AWS box was pegged. But it only takes about 3 minutes to run:

batches = gen.flow_from_directory(path + 'train', target_size=(224,224),

class_mode='categorical', shuffle=False, batch_size=128)

sum = np.array([0.0, 0.0, 0.0]);

count = 0

for imgs, labels in batches:

sum += np.sum(imgs, axis=(0, 2, 3))

print '%d/%d - %0.2f%%' % (count, batches.nb_sample, 100.0*count/batches.nb_sample), "\r",

count += imgs.shape[0]

# if we've done one pass we should break out - otherwise infinite loop

if count >= batches.nb_sample:

break

avg = sum/(count*224*224)

print avg

# [ 124.583 116.073 106.3996]

PS: Does anyone know where to get the original vgg16 dataset from?

To answer my own question, it was based on the ImageNet 2014 competition, where you can download the data here

Here’s the data it was trained on I believe:

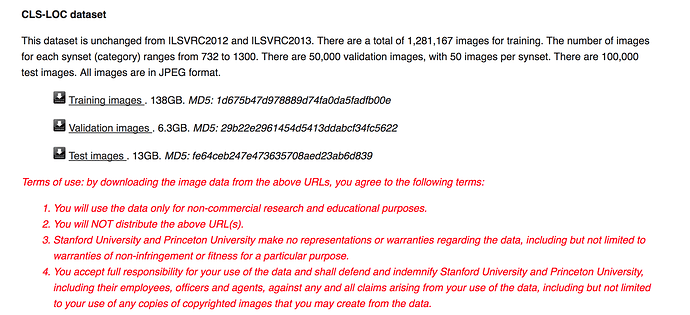

Actually, from Lecture 3, I think it was the 2012 dataset which is here

So I downloaded the VGG dataset and as an exercise tried to calculate the VGG16 mean. I ended up getting [ 122.6778 116.6522 103.9997]

It took 6.5 hours and consisted of 1,281,167 images.

I ran it on the AWS P2.xlarge instance.

The official mean that gets used in the vgg16.py file is [123.68, 116.779, 103.939] which is quite close, and the difference may be down to floating point errors perhaps.

I calculated it on the training set only, so it’s possible that the official number also used the test and validation sets perhaps. Though, I’ve heard it’s generally not a good idea to calculate the mean from the validation or test sets.

Preparing the imagenet data was a bit of a challenge as the training data set was 138G, so I had to resize my EBS volume on my server a few times while I was extracting things.

If anyone’s interested I can share the process I used to download and extract and order the data so that keras can use it.

Hi @eugeneware … I’m interested to know how you structured the data so that keras can use it. Can you please list the procedure here ?

Hi @eugeneware what is batches.nb_sample for ?

Hi @jaybakshi

This is the core code that I used (once I’d downloaded imagenet of course):

path = 'data/imagenet/train/'

gen = image.ImageDataGenerator()

batches = gen.flow_from_directory(path, target_size=(224,224),

class_mode='categorical', shuffle=False, batch_size=128)

sum = np.array([0.0, 0.0, 0.0]);

count = 0

for imgs, labels in batches:

sum += np.sum(imgs, axis=(0, 2, 3))

print '%d/%d - %0.2f%%' % (count, batches.nb_sample, 100.0*count/batches.nb_sample), "\r",

count += imgs.shape[0]

# if we've done one pass we should break out - otherwise infinite loop

if count >= batches.nb_sample:

break

avg = sum/(count*224*224)

print avg

# imagenet full dataset: [123.68, 116.779, 103.939]

# validation set: [ 120.6110427 114.79335459 102.28766471]

# I got: [ 122.6778 116.6522 103.9997]

# 2nd time: [ 122.6778 116.6522 103.9997]

It was using a previous version of Keras, so the parameters might be different.

I used a batch size of 128. Though, use the largest that can fit in your RAM and experiment.

Hope that helps.

Thank you @eugeneware … that helps

FWIW, I downloaded imagenet from this URL

Though you can also download it with a torrent client from:

training set and validation set

You’ll probably need to accept the terms of service though before downloading it.

Can you please explain why axis is (0,2,3) in this line “sum += np.sum(imgs, axis=(0, 2, 3))” ?

It’s been a while since I’ve written this code. But my recollection is that the ImageDataGenerator will (with theano as the backend) return the image data as (samples, channels, height, width) - ie. channels first.

We want to do the sum over the whole batch, and have the total across the channels. Thus the summation will be across the samples (batch size), height and width, which are axes 0, 2 and 3.

It will be different for tensorflow as your backend though, as that is (samples, height, width, channels) - ie. channels last.

But try running the code across a small number of images in a notebook and have a play with summing across different axes to get a better understanding. I must admit, I often just play with the axes until the output makes sense!

Thanks @pietz and btw, do we need to subtract mean from all pixel while testing the model ?