How exactly do CNNs handle zoomed images when they were trained mostly on non-zoomed images? Do real-life use cases actually use data augmentation that includes zoomed-in and zoomed-out images too? If you have industrial experience with this, please do share.

I don’t have industrial experience here, but I am still giving it a shot.

I guess it all depends on how much zoom there is at inference time and how aggressively you zoom at training time.

If the model was trained exclusively on non-zoomed images, and you then feed it exclusively zoomed images in production, I don’t think this will ever really work.

Hi utsav hope you are having a wonderful day!

I agree with the answer above in “How CNNs take care of Zoomed images?”. I once helped someone who was trying to represent scale when classifying a specific item in an industrial process. It soon became apparent that zooming in too close or out too far affected the accuracy of the model, and in some cases the part itself came in different sizes.

We found there was a window, or range of distances, within which you could zoom in or out. However, to help capture scale even remotely accurately, we included a ruler in all the images used for training and inference.

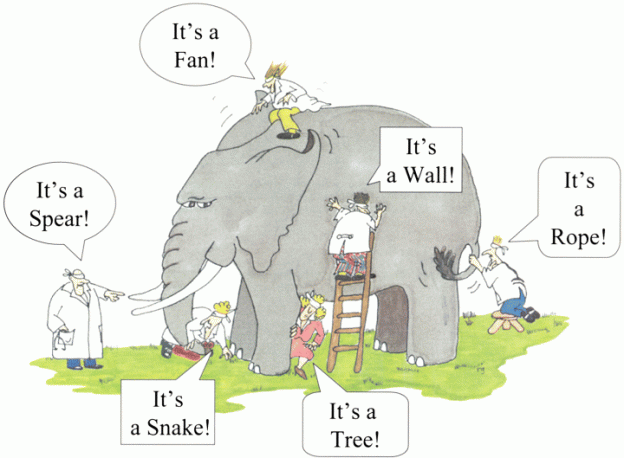

I think this image sums up the zoom issue well depending on how much you want to zoom in or out.

I never rule anything out, there may be some fancy technique out there that can help, please update us if you find one!

Cheers mrfabulous1

Wanted to add that CNNs are quite robust to small translations, i.e. the input can shift a little and the CNN can still detect the same class. They can also tolerate a limited amount of zoom, rotation, or flipping, although that is not built-in invariance in the same way. The translation tolerance is predominantly because of the pooling layers: with max pooling, for example, we reduce the spatial size by taking the max over each window, so small changes in the input still result in predominantly the same pooled values. This is my understanding of how invariance works, and the amount of zoom is also a major factor, as already mentioned. Hope that helps.
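To make the pooling point concrete, here is a minimal pure-Python sketch (not taken from any library; `max_pool_2x2` and `blank` are hypothetical helper names). It assumes a tiny 4x4 "image" and non-overlapping 2x2 max pooling, and compares a one-pixel shift, a two-pixel shift, and an enlarged feature.

```python
def max_pool_2x2(img):
    """Non-overlapping 2x2 max pooling on a list-of-lists image (even sides assumed)."""
    return [
        [max(img[r][c], img[r][c + 1], img[r + 1][c], img[r + 1][c + 1])
         for c in range(0, len(img[0]), 2)]
        for r in range(0, len(img), 2)
    ]


def blank(n):
    """Return an n x n image filled with zeros."""
    return [[0] * n for _ in range(n)]


# One bright pixel in the top-left pooling window.
original = blank(4)
original[0][0] = 9

# Same pixel moved by one pixel: it stays inside the same 2x2 window.
small_shift = blank(4)
small_shift[1][1] = 9

# Moved by two pixels: it crosses into a different pooling window.
large_shift = blank(4)
large_shift[2][2] = 9

# "Zoomed in": the feature now covers a 3x3 block and touches all four windows.
zoomed = blank(4)
for r in range(3):
    for c in range(3):
        zoomed[r][c] = 9

pooled_original = max_pool_2x2(original)
pooled_small = max_pool_2x2(small_shift)
pooled_large = max_pool_2x2(large_shift)
pooled_zoomed = max_pool_2x2(zoomed)

print(pooled_original == pooled_small)   # small shift: pooled output unchanged
print(pooled_original == pooled_large)   # larger shift: pooled output differs
print(pooled_original == pooled_zoomed)  # enlarged feature: pooled output differs
```

In this toy case pooling only absorbs changes that stay within one window, which fits the point above that the amount of zoom matters.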

During training, augmentation is used to cover the zoomed-image scenario.

This is needed because, without it, the CNN may not be able to predict the right class for zoomed images.
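As a rough illustration of what zoom augmentation does, here is a minimal pure-Python sketch. It is not the fastai or torchvision implementation: `zoom_crop` and `random_zoom` are hypothetical names, the image is assumed to be a square list of lists, only zooming in (zoom >= 1) is handled, and resizing uses simple nearest-neighbour sampling.

```python
import random


def zoom_crop(img, zoom, rng=random):
    """Crop a random square window whose side is 1/zoom of the image side,
    then resize it back to the original side with nearest-neighbour sampling."""
    if zoom < 1:
        raise ValueError("this sketch only handles zooming in (zoom >= 1)")
    n = len(img)                     # square image assumed
    win = max(1, round(n / zoom))    # side of the cropped window
    top = rng.randint(0, n - win)    # random window position
    left = rng.randint(0, n - win)
    return [
        [img[top + (r * win) // n][left + (c * win) // n] for c in range(n)]
        for r in range(n)
    ]


def random_zoom(img, min_zoom=1.0, max_zoom=2.0, rng=random):
    """Apply zoom_crop with a zoom factor drawn uniformly from [min_zoom, max_zoom]."""
    return zoom_crop(img, rng.uniform(min_zoom, max_zoom), rng)


# Toy 8x8 image whose pixel values encode their position.
image = [[r * 8 + c for c in range(8)] for r in range(8)]

# Seeded generator so the augmentation is repeatable.
augmented = random_zoom(image, rng=random.Random(0))
for row in augmented:
    print(row)
```

Applying something like `random_zoom` to each training image each epoch means the model sees the same object at several apparent sizes, which is the point of the augmentation. The zoom range (1.0 to 2.0 here) is an arbitrary choice for the sketch and would need to match the zoom you expect at inference time.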

Also, the scale of the images is important, which is why progressive resizing was taught in fastai v2 to help with this issue.

The FixRes paper (“Fixing the train-test resolution discrepancy”) covers this quite nicely actually. They used different train and test image sizes to achieve the current SOTA on ImageNet using an EfficientNet.

I wrote a summary of the paper here

Basically, if you are using a transform that does some form of zoom, e.g. RandomResizedCrop, you get better results when your test images are larger than your training images. Alternatively, if you can’t or don’t want to resize your test images, you can train at a lower size and still get the same performance (plus an increase in speed, since you can use larger batch sizes).

Their reasoning is that while the CNN backbone is scale invariant, the classifier layers in the head cannot handle the mismatch in size between the zoomed-in train images and the non-zoomed test images. Training at a smaller size (e.g. 192x192) and testing at a higher size (e.g. 224x224) means that the apparent object size in the training images is closer to the apparent object size in the test images. Not sure if I explained that well, so have a look at my post or the paper for some images that explain it more intuitively!