I have a multiclass image classification problem where I have to predict the class and subclass of an image.

For example:

Given an image, I have to predict the class (e.g. dog) and the species.

Does anyone have a working example of this, preferably in Keras or CNTK?

The VGG16 model trained on ImageNet…

Is it trained in a hierarchical fashion?

If you predict the species, won't that imply the animal class?

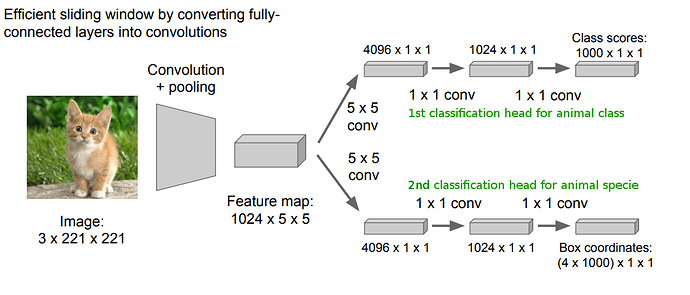

However, if you do need to do what you described (I am purely guessing here with no prior experience, so experts please correct me if I'm wrong), you might need a CNN architecture with two heads, like the ones used in object detection.

Pulling up a slide from CS231n:

Fig: Note that the lower head in the image predicts bounding-box coordinates for object detection; for your purpose, you would need a second classifier just like the top head instead.

The lower layers of the architecture would learn the common features, while the two heads would be trained to predict the animal class and the species, respectively.
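A minimal NumPy sketch of the shared-trunk, two-head idea, assuming small random weights in place of a trained convolutional base and hypothetical layer sizes. It only shows the forward pass and the joint loss (the sum of the two heads' cross-entropies); in Keras the same structure would typically be a functional-API model with two output layers.

```python
import numpy as np

def softmax(z):
    # Subtract the row max for numerical stability.
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def cross_entropy(probs, labels):
    # Mean negative log-likelihood of the integer labels.
    picked = probs[np.arange(len(labels)), labels]
    return -np.mean(np.log(np.clip(picked, 1e-12, 1.0)))

rng = np.random.default_rng(0)
n_in, n_features, n_classes, n_species = 16, 8, 3, 10  # hypothetical sizes

# Shared trunk: a single random linear layer + ReLU standing in for the conv base.
W_trunk = rng.normal(scale=0.1, size=(n_in, n_features))
# Two heads that both read the same shared features.
W_class = rng.normal(scale=0.1, size=(n_features, n_classes))
W_species = rng.normal(scale=0.1, size=(n_features, n_species))

def forward(x):
    h = np.maximum(0.0, x @ W_trunk)  # shared features
    return softmax(h @ W_class), softmax(h @ W_species)

x = rng.normal(size=(4, n_in))        # batch of 4 flattened "images"
y_class = np.array([0, 1, 2, 0])
y_species = np.array([1, 4, 9, 2])

p_class, p_species = forward(x)
# Joint loss; during training, gradients of both terms would update the shared trunk.
loss = cross_entropy(p_class, y_class) + cross_entropy(p_species, y_species)
print(p_class.shape, p_species.shape, round(float(loss), 3))
```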

Or, to keep things simple, you could train two separate models that differ only in their classification heads (the last layer or the last few fully connected layers). Use transfer learning.

–

Interesting problem. I have built something similar for text, and the approach I followed was:

Predict the top level using one model (in your case, the animal, such as dog, cat, etc.).

Train a separate model for the leaf level (species), then load the leaf-level model that corresponds to the top-level prediction and use it to predict the leaf-level class.
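The two-stage routing can be sketched in plain Python, assuming every "model" is just a callable that returns class probabilities. The stand-in models and labels are hypothetical; in practice `leaf_models` could hold loader functions that read the matching leaf model from disk only when it is needed.

```python
from typing import Callable, Dict, Sequence

# Any callable mapping an input to a list of class probabilities counts as a "model" here.
Model = Callable[[object], Sequence[float]]

def argmax(probs: Sequence[float]) -> int:
    return max(range(len(probs)), key=lambda i: probs[i])

def predict_hierarchical(x, top_model: Model, top_labels: Sequence[str],
                         leaf_models: Dict[str, Model],
                         leaf_labels: Dict[str, Sequence[str]]):
    # Step 1: predict the top-level class.
    top = top_labels[argmax(top_model(x))]
    # Step 2: use the leaf-level model trained only on that class's subclasses.
    leaf = leaf_labels[top][argmax(leaf_models[top](x))]
    return top, leaf

# Hypothetical stand-in models that ignore their input and return fixed probabilities.
def top_model(x):
    return [0.8, 0.2]

leaf_models = {"dog": lambda x: [0.1, 0.7, 0.2], "cat": lambda x: [0.6, 0.4]}
leaf_labels = {"dog": ["beagle", "pug", "husky"], "cat": ["siamese", "persian"]}

print(predict_hierarchical(None, top_model, ["dog", "cat"], leaf_models, leaf_labels))
# ('dog', 'pug')
```

One consequence of this design is that a wrong top-level prediction cannot be corrected later, because only the leaf model for the predicted class is ever consulted.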

It looks like you just need to fine-tune one model to predict breeds. The mapping from breed to class is static and well known, right? So you can take any example, say from here: https://www.kaggle.com/c/dog-breed-identification/kernels
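With a static breed-to-class mapping, the class probability can be obtained by summing the predicted probabilities of the breeds that belong to each class. A minimal sketch, assuming a hypothetical four-breed label set and a softmax output from the fine-tuned breed model:

```python
import numpy as np

# Hypothetical breed list and static breed -> animal mapping.
breeds = ["beagle", "pug", "siamese", "persian"]
breed_to_class = {"beagle": "dog", "pug": "dog", "siamese": "cat", "persian": "cat"}
classes = sorted(set(breed_to_class.values()))  # ['cat', 'dog']

def class_probs(breed_probs):
    # P(class) is the sum of P(breed) over the breeds belonging to that class.
    out = np.zeros(len(classes))
    for i, breed in enumerate(breeds):
        out[classes.index(breed_to_class[breed])] += breed_probs[i]
    return out

p_breed = np.array([0.1, 0.5, 0.3, 0.1])  # e.g. softmax output of a fine-tuned breed model
p_class = class_probs(p_breed)
print(breeds[p_breed.argmax()], classes[p_class.argmax()])  # pug dog
```

In this example the top breed and the top class agree, but they need not: if probability mass is spread over many breeds of one class, that class can win even though a breed of another class has the single highest score.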

Agreed. In fact, there might be an advantage to training the same model with several classifiers; I personally have had quite positive experience with such models. Here is a nice quote from François Chollet's Keras book:

> Additionally, a remarkable observation that has been made repeatedly in recent years is that training a same model to do several loosely connected tasks at the same time results in a model that is better at each task. For instance, training a same neural machine translation model to cover both English-to-German translation and French-to-Italian translation will result in a model that is better at each language pair. Training an image classification model jointly with an image segmentation model, sharing the same convolutional base, results in a model that is better at both tasks. And so on. This is fairly intuitive: there is always some information overlap between these seemingly disconnected tasks, and the joint model has thus access to a greater amount of information about each individual task than a model trained on that specific task only.

Another approach is the one shown in the DeViSE paper, which is covered in (IIRC) lesson 9.
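The inference side of a DeViSE-style model can be sketched as a nearest-neighbour search: the image is projected into a word-embedding space (the projection is learned so that images land near their label's word vector), and the closest label vectors are the predictions. The 3-dimensional vectors below are hypothetical toy values, not real word embeddings, and the learned projection is replaced by a fixed vector.

```python
import numpy as np

def normalize(v):
    return v / np.linalg.norm(v, axis=-1, keepdims=True)

# Hypothetical label embeddings (in DeViSE these come from a pretrained word-vector model).
label_names = ["dog", "beagle", "cat", "siamese"]
label_vecs = normalize(np.array([
    [1.0, 0.0, 0.0],
    [0.9, 0.1, 0.0],
    [0.0, 1.0, 0.0],
    [0.1, 0.9, 0.1],
]))

def nearest_labels(image_vec, k=2):
    # Rank labels by cosine similarity to the image's projected embedding.
    sims = label_vecs @ normalize(image_vec)
    order = np.argsort(-sims)[:k]
    return [label_names[i] for i in order]

# Stand-in for (image features @ learned projection matrix).
projected = np.array([0.9, 0.1, 0.0])
print(nearest_labels(projected))  # ['beagle', 'dog']
```

In this toy setup the breed and its animal sit close together in the embedding space, so the top-k neighbours cover both levels of the hierarchy.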

Yup, that's what we did in lesson 7!

Thanks @jeremy @ecdrid @anandsaha, I will try this and let you know my progress.

I also came across a paper that addresses this problem: HD-CNN.

I was also thinking of another approach:

Steps:

- Train a model for Level 1 categories.

- Train several other models for Level 2 categories.

- Use a probabilistic approach to combine the outputs of the Level 1 and Level 2 models into a single prediction.
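One possible probabilistic combination is the chain rule: if each Level 2 model is trained only on the subclasses of one Level 1 class, its output can be read as P(subclass | class), and the joint score is P(class) × P(subclass | class). A minimal sketch with hypothetical model outputs for a single image:

```python
# Hypothetical outputs for one image.
p_level1 = {"dog": 0.55, "cat": 0.45}                     # Level 1 model
p_level2 = {                                              # one Level 2 model per Level 1 class
    "dog": {"beagle": 0.4, "pug": 0.35, "husky": 0.25},   # P(breed | dog)
    "cat": {"siamese": 0.9, "persian": 0.1},              # P(breed | cat)
}

# Chain rule: P(class, breed | x) = P(class | x) * P(breed | class, x).
joint = {(c, b): p_level1[c] * pb
         for c, subs in p_level2.items() for b, pb in subs.items()}

best = max(joint, key=joint.get)
print(best, round(joint[best], 3))  # ('cat', 'siamese') 0.405
```

Here the Level 1 model alone would pick "dog", but a very confident Level 2 prediction under "cat" outweighs it, which is the kind of correction a hard top-down decision cannot make.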

I know training might take a lot of time, but transfer learning can reduce it. Memory might also be an issue, so both space and time complexity could become a problem with this approach.

Hi @srmsoumya,

First: have you managed to solve it? I'm working on a similar problem, but with text classification.

Second: I had two ideas.

IDEA 1:

- Build one model for the Level 1 categories.

- Freeze its weights, replace the last layer, and then train it to predict the Level 2 categories.
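IDEA 1 is standard transfer learning. In Keras it would mean setting `trainable = False` on the existing layers and adding a new final `Dense` layer; the NumPy sketch below imitates that under assumptions: a fixed random projection stands in for the trained Level 1 body, the Level 2 data is synthetic, and only a new softmax layer is trained by plain gradient descent.

```python
import numpy as np

rng = np.random.default_rng(0)
n_in, n_feat, n_sub = 20, 16, 5  # hypothetical input size, feature size, Level 2 classes

# Stand-in for the trained Level 1 network minus its last layer.
# Its weights are fixed: nothing below ever modifies W_frozen.
W_frozen = rng.normal(scale=0.1, size=(n_in, n_feat))

def frozen_features(x):
    return np.maximum(0.0, x @ W_frozen)

def softmax(z):
    z = z - z.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

# Hypothetical Level 2 training data: noisy points around one center per subclass.
centers = rng.normal(scale=3.0, size=(n_sub, n_in))
y = rng.integers(0, n_sub, size=200)
x = centers[y] + rng.normal(size=(200, n_in))

feats = frozen_features(x)         # computed once, since the body is frozen
W_new = np.zeros((n_feat, n_sub))  # replacement last layer for Level 2
b_new = np.zeros(n_sub)
onehot = np.eye(n_sub)[y]

def loss_fn():
    p = softmax(feats @ W_new + b_new)
    return -np.mean(np.log(p[np.arange(len(y)), y]))

initial_loss = loss_fn()
lr = 0.05
for _ in range(500):
    p = softmax(feats @ W_new + b_new)
    grad_logits = (p - onehot) / len(y)  # gradient of the mean cross-entropy w.r.t. the logits
    W_new -= lr * feats.T @ grad_logits  # only the new head is updated
    b_new -= lr * grad_logits.sum(axis=0)
final_loss = loss_fn()
print(f"loss {initial_loss:.3f} -> {final_loss:.3f}")
```

Because the body never changes, its features can be computed once up front, which is a large part of why this variant is cheap to train.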

IDEA 2:

Build a network whose second-to-last layer has as many neurons as there are Level 1 categories, then add a last layer with as many neurons as the Level 1 and Level 2 categories combined. Freeze the connections between each Level 1 neuron in the second-to-last layer and the corresponding Level 1 neuron in the last layer at a value of 1, with a linear activation on those last-layer neurons.

This might be a bit complicated, but if you read it carefully, I think you'll get it.
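One reading of IDEA 2, sketched in NumPy under assumptions: the frozen connections form an identity block (weight 1 between matching Level 1 neurons and 0 elsewhere, which is an interpretation of the description above), and freezing is imitated by masking the gradient so that only the Level 2 block of the last layer can change. The sizes and the random gradient are hypothetical.

```python
import numpy as np

n1, n2 = 3, 7  # hypothetical numbers of Level 1 and Level 2 categories
rng = np.random.default_rng(0)

# Last-layer weights, shape (n1, n1 + n2): rows are second-to-last (Level 1) neurons.
W = np.zeros((n1, n1 + n2))
W[:, :n1] = np.eye(n1)                            # frozen block: neuron i -> output i, weight 1
W[:, n1:] = rng.normal(scale=0.1, size=(n1, n2))  # trainable block for Level 2

# Mask of the weights an optimizer is allowed to change.
trainable = np.zeros_like(W, dtype=bool)
trainable[:, n1:] = True

def last_layer(level1_act):
    out = level1_act @ W
    # Linear activation: the Level 1 outputs are a copy of the second-to-last layer;
    # the Level 2 outputs are returned as raw scores.
    return out[:, :n1], out[:, n1:]

def masked_update(weights, grad, lr=0.1):
    # In-place gradient step restricted to the trainable block.
    weights -= lr * np.where(trainable, grad, 0.0)

level1 = np.array([[0.7, 0.2, 0.1]])  # e.g. softmax output of the Level 1 layer
l1_out, l2_scores = last_layer(level1)
masked_update(W, rng.normal(size=W.shape))
print(l1_out, l2_scores.shape)
```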

@guyko Have you ever done that? I am dealing with a similar problem and was thinking of something similar to your IDEA 2, although I was considering something like a ResNet-style block so that Level 2 is predicted from both the encoded features and the predicted Level 1 category. I am trying to find a simple enough way of doing this, as my classifier has four levels of taxonomy…
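A minimal NumPy sketch of that conditioning idea, assuming each level's head receives the shared encoded features concatenated with the previous level's softmax output (a skip connection in the loose sense, not an actual residual sum). The four level sizes and the random weights are hypothetical, and only the forward pass is shown.

```python
import numpy as np

rng = np.random.default_rng(0)

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

# Hypothetical taxonomy sizes, from coarsest to finest (four levels).
level_sizes = [3, 8, 20, 50]
n_features = 32

# One head per level; head k sees the features plus level k-1's prediction.
heads = []
prev = 0
for size in level_sizes:
    heads.append(rng.normal(scale=0.1, size=(n_features + prev, size)))
    prev = size

def predict_levels(features):
    preds = []
    prev_pred = np.zeros((features.shape[0], 0))  # the first level has no parent prediction
    for W in heads:
        inp = np.concatenate([features, prev_pred], axis=1)
        prev_pred = softmax(inp @ W)
        preds.append(prev_pred)
    return preds

features = rng.normal(size=(2, n_features))  # stand-in for the encoder's output
for p in predict_levels(features):
    print(p.shape)
```

Adding a level only adds one head, so the same loop covers a four-level taxonomy without special cases.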

I built a custom structure where the higher-level prediction is fed into the lower-level prediction through a multiplicative function. That way, the last layer also sums to 100%. Basically, the last layer is a weighted output, where the weights are the higher-level predictions.

I needed to write a custom loss for that.
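One way such a multiplicative structure might look, sketched in NumPy under assumptions: the higher level is a softmax over parents, the lower level is a softmax computed separately within each parent's group of children, and each leaf output is their product. The taxonomy is hypothetical, and this is an illustration rather than the code referred to above. The negative log-likelihood of the true leaf decomposes into -log P(parent) - log P(leaf | parent), so a single loss trains both levels.

```python
import numpy as np

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

# Hypothetical taxonomy: parent index of each leaf (3 parents, 6 leaves).
leaf_parent = np.array([0, 0, 1, 1, 1, 2])
n_parents = 3

def hierarchical_output(parent_logits, leaf_logits):
    """Leaf probabilities = P(parent) * P(leaf | parent); each row sums to 1."""
    p_parent = softmax(parent_logits)  # (batch, n_parents)
    p_leaf_given_parent = np.empty_like(leaf_logits)
    for k in range(n_parents):
        idx = leaf_parent == k
        # Softmax within each parent's group of leaves.
        p_leaf_given_parent[:, idx] = softmax(leaf_logits[:, idx])
    # Weight each leaf by its parent's probability.
    return p_parent[:, leaf_parent] * p_leaf_given_parent

def hierarchical_nll(probs, leaf_labels):
    # Custom loss: negative log-likelihood of the true leaf under the weighted output.
    return -np.mean(np.log(probs[np.arange(len(leaf_labels)), leaf_labels]))

rng = np.random.default_rng(0)
parent_logits = rng.normal(size=(4, n_parents))
leaf_logits = rng.normal(size=(4, len(leaf_parent)))
probs = hierarchical_output(parent_logits, leaf_logits)
loss = hierarchical_nll(probs, np.array([0, 3, 5, 2]))
print(probs.sum(axis=1), loss)
```

Summing the leaf outputs within a group recovers that parent's probability, so the higher-level prediction can be read directly off the last layer.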

Could you tell me which code you used, please?

Implementation!

How did you classify the higher level?