Thanks for the reply!

Your comment and the beginning of the Lesson 4 video clarified these concepts for me. In particular, what helped was thinking about these filters as 3-dimensional objects (3x3x<# of filters in the previous layer>) instead of merely two-dimensional 3x3 matrices.

Here is my understanding:

Layer 1

Receives as input a bunch of 256x256 images with 3 layers (the 3 RGB channels). It is helpful to think of each of these images as 3-dimensional as well (256x256x3).

The layer is configured to apply 64 3x3 filters to these images, but again it is helpful to think about the 3x3 filters as 3-dimensional (i.e., as 3x3x<# of filters/channels in the input>). So in reality, each of the 64 filters is a 3x3x3 cube, like a stack of three 3x3 matrices.

The three layers (matrices) that make up each filter are each convolved with their corresponding layer in the input, and the three results are summed to produce a single new representation of the image. As there are 64 of these filters, there will be 64 representations.
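To check my own understanding of the "convolve each layer, then sum" step, here is a minimal sketch in plain Python (slow, but explicit). It assumes stride 1 and zero padding so the output keeps the input's height and width, which is what the 256x256 input to Layer 2 implies. The function names `conv_single_filter` and `conv_layer` are my own, not from any library, and like most deep learning libraries it does not flip the kernel.

```python
def conv_single_filter(image, kernel):
    """Apply one 3x3xC filter to an HxWxC image (nested lists).

    Assumes stride 1 and zero padding, so the result is one HxW map.
    """
    h, w, c = len(image), len(image[0]), len(image[0][0])
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            total = 0.0
            for di in range(3):
                for dj in range(3):
                    y, x = i + di - 1, j + dj - 1
                    if 0 <= y < h and 0 <= x < w:  # outside the image counts as zero
                        # sum over ALL input channels: this is the "x C" depth of the filter
                        for ch in range(c):
                            total += image[y][x][ch] * kernel[di][dj][ch]
            out[i][j] = total
    return out


def conv_layer(image, kernels):
    """One output map (representation) per filter."""
    return [conv_single_filter(image, k) for k in kernels]


# Toy example: a 4x4 "RGB" image of ones and two 3x3x3 filters of ones.
image = [[[1.0] * 3 for _ in range(4)] for _ in range(4)]
kernels = [[[[1.0] * 3 for _ in range(3)] for _ in range(3)] for _ in range(2)]
maps = conv_layer(image, kernels)
print(len(maps), len(maps[0]), len(maps[0][0]))  # number of maps, height, width
```

With 64 filters instead of 2 and a 256x256x3 input, the same loop would give 64 maps of 256x256, i.e. a 256x256x64 output.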

The number of parameters will be 64 x 3 x 3 x 3 = 1,728, ignoring biases (the last 3 is the # of filters/channels from the input).
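As a sanity check on that arithmetic, here is a small sketch. The helper `conv_weight_count` is a name I made up for illustration; the optional bias term assumes one bias per filter, which is the usual convention but is not something I stated above.

```python
def conv_weight_count(n_filters, kernel_h, kernel_w, in_channels, use_bias=False):
    """Parameters in one conv layer: each filter is kernel_h x kernel_w x in_channels."""
    weights = n_filters * kernel_h * kernel_w * in_channels
    # Assumption: one bias per filter when biases are counted.
    return weights + (n_filters if use_bias else 0)


layer1_weights = conv_weight_count(64, 3, 3, 3)
layer1_with_bias = conv_weight_count(64, 3, 3, 3, use_bias=True)
print(layer1_weights, layer1_with_bias)  # 1728 1792
```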

Layer 2

Receives as input a bunch of 256x256 images, each with 64 layers (or representations). Again, it's helpful to think of each of them as a 3-dimensional object as well (256x256x64).

This layer is configured to apply 32 3x3 filters to these images, but to account for the 64 layers as input, it is better to think of these filters as being 3x3x64.

The 64 layers (matrices) that make up each of these 32 filters are each convolved with their corresponding layer in the input, and the 64 results are summed to produce a single new representation of the image. As there are 32 of these filters, there will be 32 representations.

The number of parameters will be 32 x 3 x 3 x 64 = 18,432, ignoring biases (the 64 is the # of filters/channels from the input).
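The way the two layers chain together (the number of filters in one layer becomes the depth of each filter in the next) can be sketched as below. `trace_conv_stack` is a hypothetical helper of my own; it assumes 3x3 filters, stride 1, padding that preserves height and width, and no biases.

```python
def trace_conv_stack(input_shape, filter_counts, kernel=3):
    """Track filter shape, weight count and output shape through stacked conv layers."""
    h, w, channels = input_shape
    rows = []
    for n_filters in filter_counts:
        filter_shape = (kernel, kernel, channels)    # depth = channels coming in
        weights = n_filters * kernel * kernel * channels
        output_shape = (h, w, n_filters)             # one map per filter
        rows.append((filter_shape, n_filters, weights, output_shape))
        channels = n_filters                         # feeds the next layer
    return rows


rows = trace_conv_stack((256, 256, 3), [64, 32])
for i, (filter_shape, n_filters, weights, output_shape) in enumerate(rows, start=1):
    print(f"Layer {i}: {n_filters} filters of shape {filter_shape}, "
          f"{weights} weights, output {output_shape}")
```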

As forward/back prop happens over and over, the 64 3x3(x3) filters in Layer 1 and the 32 3x3(x64) filters in Layer 2 are trained, and they begin to take on different characteristics according to what helps the model predict the correct classes.

…

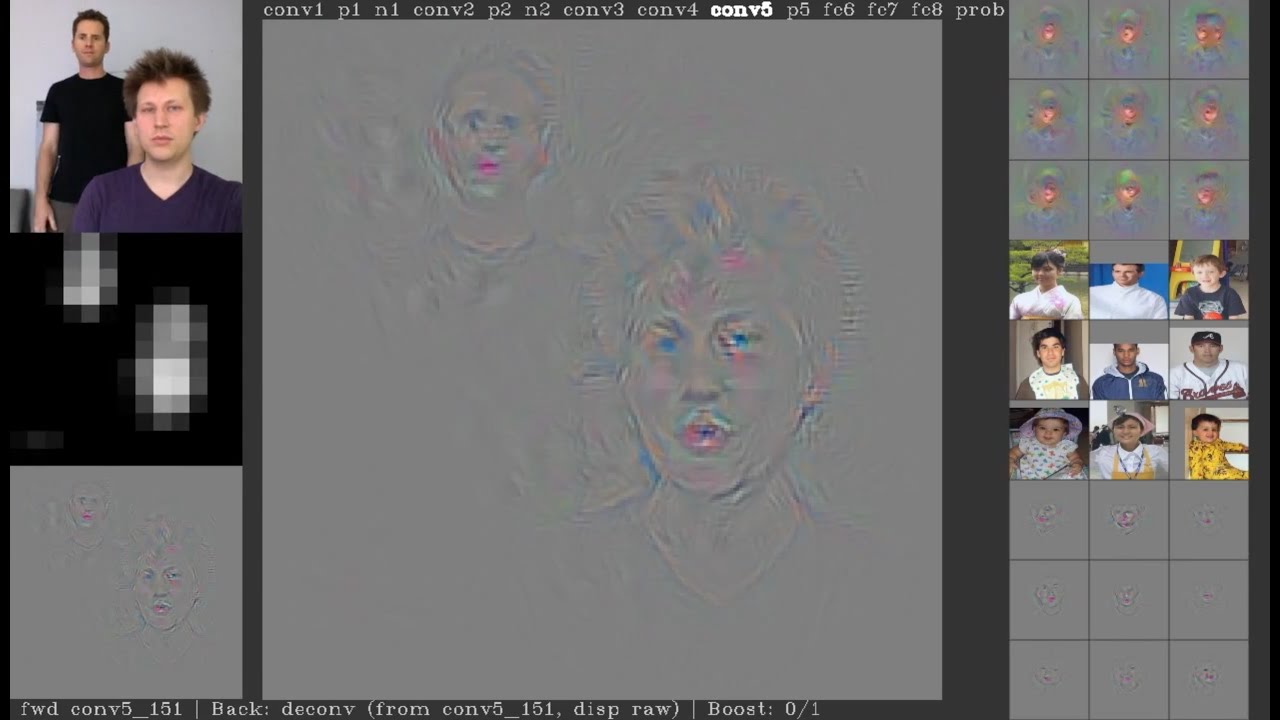

ANOTHER QUESTION: How do the later convolutional filters “find even more complex structures of the image” (from lesson 3 notes)? It would seem that, with the images getting downsized by all the max-pooling, what you get from these filters would be less specific and less complex. I know this isn’t the case, but I don’t know why. For example, at the end of VGG we have 7x7 images with 512 channels (representations) that can find very specific things … how is that possible given the images are only 7x7 pixels?

Thanks