Hi everyone, how are you doing?

I’m currently going through the FastAI course (currently at Lesson 7). I have questions regarding presizing (lesson 5) and test time augmentation (lesson 7)

Presizing:

item_tfms=Resize(460),

batch_tfms=aug_transforms(size=224, min_scale=0.75)

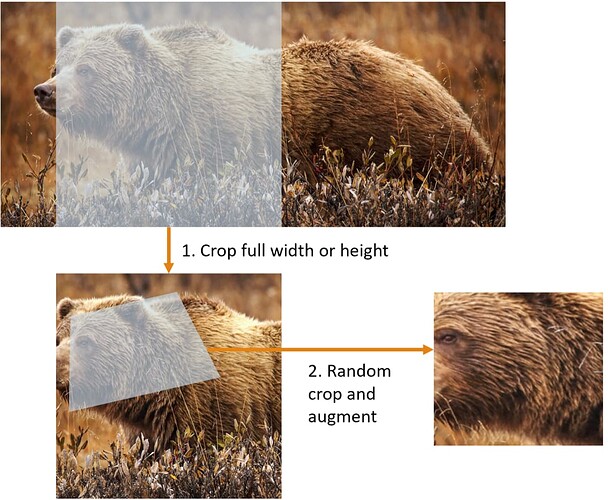

Here’s my shaky understanding of it. “item_tfms” bring all images to a larger size than the required one. Let’s say the required input size is 224, “item_tfms” would make it to 460 first. This is done by either expanding smaller (less than 224) image to 460 or crop full height/width to 460 of larger image ?

And then after that, data augmentation is employed all at once (randomly cropped at 224 and then rotate/blur/etc) ?

Test Time Augmentation

My understanding of it is that you check the model’s performance with different versions of 1 image/test (different randomly cropped versions) to see if the model actually learn/understand the data rather than simply “memorizing” it. Is that correct?

And to follow that, I assume this method, test time augmentation, would give us a better assessment of the model? My understanding is that it is similar to giving a test with different versions of the same concepts to the student?

However, in lesson 7, Jeremy said that test time augmentation would increase the accuracy of the model. This confuses me as I think this method would give us a better understanding of the model (as we test it more effectively) rather than increasing its capability as Jeremy said (since the training remains the same).

I hope you could give me your opinions about it