Tagging @EricPB.

I think Eric is one of the few people on the forums, maybe the only one, who has tried all of the RTX cards? (He mentioned that he is currently using a 2060; earlier it was a 2070 and a 2080Ti.)

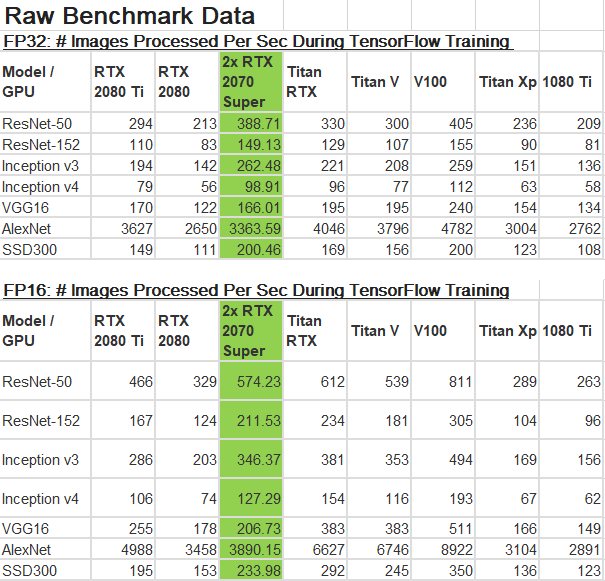

This article also supports the idea that the 2080 TI is about twice as fast as the 1080 TI (though the 2080 may be actually slower!).

Another consideration is that until we get easy ways to share one learning run between multiple GPUs, you will want a single GPU that is fast.

Initially I thought the 2080 TI was far more expensive than the 1080 TI, but today I’m seeing street prices where it is only 2x more expensive ($1300 vs $500-700)
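To make the price/performance point concrete, here is a back-of-the-envelope sketch in Python. The 2x speed ratio and the street prices are simply the figures quoted in this thread, and the function name is only illustrative; real speedups depend a lot on the model and on whether fp16 is used.

```python
def dollars_per_unit_speed(price_usd, relative_speed):
    """Price divided by speed relative to a 1080 Ti (1080 Ti = 1.0)."""
    return price_usd / relative_speed

# Figures quoted in this thread (assumptions, not fresh measurements)
gtx_1080ti_low = dollars_per_unit_speed(500, 1.0)
gtx_1080ti_high = dollars_per_unit_speed(700, 1.0)
rtx_2080ti = dollars_per_unit_speed(1300, 2.0)

print(f"1080 Ti: ${gtx_1080ti_low:.0f}-{gtx_1080ti_high:.0f} per unit of speed")
print(f"2080 Ti: ${rtx_2080ti:.0f} per unit of speed")
```

With those numbers the 2080 TI lands inside the 1080 TI's price range per unit of speed, so the extra cost mostly buys you the same throughput in a single card.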

Are you using fastai under Windows or Linux/Ubuntu?

Afaik, under Windows the native Linux layer (WSL) doesn’t support GPU, only CPU computing.

At the base level, Windows supports GPU natively. There’s no GPU support in WSL (bash). Some software isn’t fully compatible such as pickle (PIL). pickle is used in some fastai notebooks. fastai is aware of the issue. pytorch 1.0 and tensorflow are officially supported on Windows.

For more info: Pytorch v1.0 stable is now working on Windows but fastai v1 needs some tweaks to get it work on Windows
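If you are not sure whether your setup (Windows native, WSL, or Linux) actually sees the GPU, a quick check from Python can help. This is a minimal sketch that assumes PyTorch is what you are testing; the helper name `describe_gpu_support` is made up for illustration, and it falls back gracefully if PyTorch is not installed.

```python
def describe_gpu_support():
    """Return a short message saying whether PyTorch can see a CUDA GPU."""
    try:
        import torch  # only needed for the check itself
    except ImportError:
        return "PyTorch is not installed in this environment"
    if torch.cuda.is_available():
        # Device 0 is the first GPU that CUDA exposes
        return f"CUDA available: {torch.cuda.get_device_name(0)}"
    return "PyTorch is installed but sees no CUDA device (CPU only)"

print(describe_gpu_support())
```

If this reports CPU only inside WSL but finds the card under native Windows, that matches what is described above.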

Your GPU issue in cats and dogs is being discussed here: Pytorch v1.0 stable is now working on Windows but fastai v1 needs some tweaks to get it work on Windows

Just edited my post with a link, sorry for the confusion.

@PeterKelly Hi Peter, not a problem at all! Everyone learns at their own pace. I was struggling with out-of-memory errors and stuff like that for a long time. So, you know, not too much progress here.

Would be great to finally try an RTX architecture in the field. I have one 2080 (plain) card and still haven’t had enough time to update the drivers and check how fp16 precision works. I have this on my list, and I’m very glad that you and many other people here found this topic worthy of discussion! I guess this forum is one of the best places to talk with Deep Learning practitioners about related software/hardware.

@devforfu

Hi Ilia,

I did a little test comparing 2080Ti vs 1080Ti run times using fp_16()

If you’re interested, we could have a “fastai fp_16 leaderboard” to compare our scores?

I know @EricPB is already doing some interesting tests on a 2060

For those interested in fp16 and mixed-precision, nvidia has a recent series on tensors and mixed precision:

And an upcoming webinar:

Webinar: Accelerate Your AI Models with AMP - NVIDIA Tools for Automatic Mixed-Precision Training in PyTorch

Join us on February 20th:

• Learn from NVIDIA engineers on real-world use cases for significant speed-ups with mixed-precision training

• Walk through a use case with NVIDIA’s toolkit for Automatic Mixed-Precision (AMP) in PyTorch

• Live Q&A

I created a post with link to some quick and dirty benchmarks, training Cifar-10 & Cifar-100 on two GPUs, the current and cheapest RTX (2060) and the last-gen “King of the Hill” GTX (1080Ti).

If you can use FP16 for the RTX Tensor Cores, it will most likely be faster than the 1080Ti, despite half the price and half the VRAM.
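The "half the VRAM" point is less of a handicap than it sounds, because an fp16 value takes half the bytes of an fp32 one. Here is a rough sketch using only the Python standard library; the 6 GB and 11 GB figures are the cards' memory sizes, and the helper name is just illustrative. Keep in mind this is an upper bound: mixed-precision training usually keeps an fp32 master copy of the weights, so the real saving is smaller.

```python
import struct

BYTES_FP32 = struct.calcsize("f")  # single precision
BYTES_FP16 = struct.calcsize("e")  # half precision

def values_that_fit(vram_gb, bytes_per_value):
    """How many values fit in vram_gb gigabytes (1 GB taken as 1024**3 bytes)."""
    return (vram_gb * 1024**3) // bytes_per_value

rtx_2060_fp16 = values_that_fit(6, BYTES_FP16)      # RTX 2060, 6 GB, fp16
gtx_1080ti_fp32 = values_that_fit(11, BYTES_FP32)   # GTX 1080Ti, 11 GB, fp32

print(f"fp32: {BYTES_FP32} bytes/value, fp16: {BYTES_FP16} bytes/value")
print(f"2060 in fp16 holds {rtx_2060_fp16 / gtx_1080ti_fp32:.2f}x "
      f"the values of a 1080Ti in fp32")
```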

Now, whether you should get 2x RTX 2060 over a single RTX 2080 for the same price is open for discussion and beyond my pay grade.

Comparing the RTX 2060 vs the GTX 1080Ti, using Fastai for Computer Vision

My take on 2x GPU Vs 1x GPU.

I think this should be a completely different discussion but a few thoughts from me:

My experience: I have a 1x 2080Ti air-cooled build (case: Cooler Master H500).

I’m against the 2x GPU setup, as the money potentially saved would then have to go into a water-cooling solution or blower-style GPUs.

In theory 2x blower GPUs would work, but setting them up so that their hot air is exhausted from different locations on the case is a challenge, as most cases don’t assume you’ll need to blower-cool your GPUs.

Water cooling/Liquid Cooling needs custom solutions and would require some expertise with hardware.

My take: given how nervous I get when Ubuntu takes 2 more seconds to boot and I start glancing at my case, having 1x GPU and some peace of mind was a more comfortable choice than 2x liquid-cooled ones.

any new insights on this?

I now have 3000$ and I want to build a workstation.

Any recommendations, on what to build? I want to buy something that will last a couple of years at least

Thank you

$3000 will make quite a decent DL rig.

If you can add another grand, you could build a workstation with an RTX Titan, which is the most capable consumer GPU for deep learning. Almost as capable as the V100, but at a quarter of the price.

But let’s stay with your 3000$. I would recommend:

- You may want to avoid consumer CPUs, which have just 16 lanes. Buy a used Xeon E5 (40 lanes) or a new Threadripper (60-64 lanes). Be aware that Intel cripples MKL (the math library) when it runs on a non-Intel CPU. You could try OpenBLAS, but I have no information about its performance.

- Buy a motherboard with a sufficient number (>= 3) of slots for future expansion.

- Use NVMe storage.

- Buy enough RAM. Twice the FP16 GPU memory should be enough (example: if you buy a single 2080ti, you will end up with 22 GB of “fp16” VRAM, so go with at least 48 GB of RAM). Note that x79/x99/x299 chipsets support dual, triple and quad channel, e.g. you can go with 3 or 6 sticks and attain more RAM speed than with 2/4 sticks.

- Be aware that having your GPUs on 8x gen3 slots won’t penalize you significantly.

- The GPU with the best price/performance ratio is still, in my opinion, the 1080ti. It is capable of fp16 (although with a very modest speedup), which means you can effectively double its memory.

That in turn means that with 1000$, the price of a single 2080ti, you can buy two of them and have a combined memory of 44 GB for vision. Running in parallel, they will be significantly faster than a single 2080ti.

So, my advice is to go with two 1080ti, save some money, and add an RTX Titan in the future. Doing so, you can reckon one grand for the GPUs and another grand for the rest. Buy a good PSU, in the range of 1000/1200W, and a case with more than 7 slots.
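The RAM rule of thumb above (system RAM of about twice the "fp16" VRAM) is easy to turn into a small calculator. This is only a sketch of that rule as stated in this post: the function names and the `COMMON_RAM_TOTALS_GB` list of typical kit sizes are assumptions for illustration, not anything official.

```python
# Illustrative list of common total RAM configurations, in GB (an assumption)
COMMON_RAM_TOTALS_GB = (16, 32, 48, 64, 96, 128, 192, 256)

def fp16_equivalent_vram_gb(vram_gb_per_card, num_cards=1):
    """Treat fp16 as doubling usable VRAM, as argued in the post above."""
    return 2 * vram_gb_per_card * num_cards

def suggested_ram_gb(vram_gb_per_card, num_cards=1):
    """Twice the fp16-equivalent VRAM, rounded up to a common RAM total."""
    target = 2 * fp16_equivalent_vram_gb(vram_gb_per_card, num_cards)
    for total in COMMON_RAM_TOTALS_GB:
        if total >= target:
            return total
    return target  # larger than any listed configuration

print(suggested_ram_gb(11))      # single 2080ti (11 GB)
print(suggested_ram_gb(11, 2))   # two 1080ti (11 GB each)
```

For a single 2080ti this reproduces the 48 GB figure from the list; for two 1080ti the same rule points to noticeably more RAM, which is worth keeping in mind when budgeting "the rest".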

thank you !

I can definitely spare an extra 1000$ if it is going to be better than doing double 1080ti and upgrading in the future.

Can you give advice on this one please? I have the money now and I need to spend it on equipment, as it comes from an EU project.

Does anyone know how the Titan RTX performs with multi-GPU setups? As I understand it, there’s no blower style version of the Titan RTX like there is for the Titan V/2080 ti/1080 ti.

In terms of performance, it should behave like any other GPU in a multi-GPU setup.

In terms of heat dissipation, you can put two of them in non-adjacent slots and/or go liquid (e.g. nzxt kraken g12 should be compatible, since it is compatible with the 2080ti).

If you have other blower GPUs and want to buy just one Titan, you can put it in the last slot (counting from above): it should have enough room to breathe.

Do not ever forget to check Tim Dettmers’ advice before building a deep learning rig… https://timdettmers.com/

I strongly agree, Tim is another ‘AI Cool Dude’, a rare breed indeed.

PK OZ

What does everyone think of the Super series?

Seems like 2x 2070 super GPUs for a total of 16gb RAM are roughly the same price range as the Ti for 11gb.

Seems enticing, especially considering the faster clock speeds for both memory and core.