chs820

November 2, 2017, 2:16am

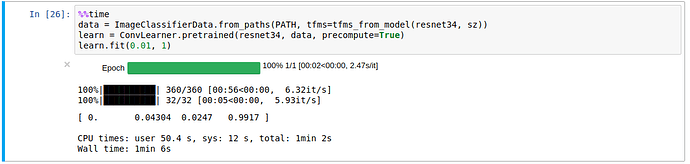

Good evening! I am working through the lesson 1 notebook and just ran the code to train & evaluate dogs vs. cats below:

I know my 1060Ti is not going to match Jeremy's timings on a 1080Ti, but I was wondering whether the 1060Ti is really 10x slower or whether there are optimizations I might have missed.

Thanks!

chs820

November 2, 2017, 2:29am

Sorry! I didn’t realize this was already being discussed in this thread:

> I had the same problem on my DL rig.
>
> The first time I ran the code, it had to download the resnet34 model to the PyTorch models folder. I thought it was taking too long and killed it before it had finished. The failed download then froze the function every time. My fix was to go to the PyTorch models folder (~/.torch/models), remove resnet34, and download it again while getting coffee.
>
> However, like others, I am still confused about the precompute function. It seems that true or false it will run …
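The cache-clearing fix quoted above can also be scripted. This is a minimal sketch in Python, assuming the weights are cached in `~/.torch/models` as described (newer PyTorch releases may use a different cache directory, so check yours first) and that the broken file's name starts with `resnet34`. `remove_cached_weights` is a hypothetical helper, not part of PyTorch or fastai:

```python
from pathlib import Path


def remove_cached_weights(model_dir="~/.torch/models", prefix="resnet34"):
    """Delete cached weight files whose names start with `prefix`.

    Hypothetical helper: removing a truncated download means the next
    run has no cached copy and must fetch the weights again.
    Returns the names of the files that were deleted.
    """
    model_path = Path(model_dir).expanduser()
    removed = []
    if not model_path.is_dir():
        return removed  # nothing has been cached yet
    for path in sorted(model_path.iterdir()):
        # Only touch regular files matching the prefix; other models stay.
        if path.is_file() and path.name.startswith(prefix):
            path.unlink()
            removed.append(path.name)
    return removed
```

Calling `remove_cached_weights()` before re-running the notebook should make the next run download a fresh copy. Because only files matching the prefix are deleted, other cached models are left alone.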

jeremy

November 2, 2017, 2:33am

This is a separate issue: the first time you run it, it has to precompute the activations. If you run it again, it'll be fast.
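To see why only the first run is slow, here is a toy illustration in plain Python. It is not the fastai implementation: `slow_body` is a hypothetical stand-in for the frozen pretrained layers, and activations are cached per image in memory, so only the first pass pays for the forward computation:

```python
import time


def slow_body(image_id):
    """Hypothetical stand-in for the frozen pretrained layers (e.g. resnet34).

    Sleeps to mimic an expensive forward pass, then returns fake activations.
    """
    time.sleep(0.01)
    return [image_id * 0.5, image_id * 2.0]


class ActivationCache:
    """Computes activations once per input and reuses them afterwards."""

    def __init__(self, body):
        self.body = body
        self.cache = {}
        self.computed = 0  # how many times the body actually ran

    def get(self, image_id):
        if image_id not in self.cache:
            self.cache[image_id] = self.body(image_id)
            self.computed += 1
        return self.cache[image_id]


def run_epoch(cache, image_ids):
    """Fetch activations for every image and return the elapsed seconds."""
    start = time.perf_counter()
    for image_id in image_ids:
        cache.get(image_id)
    return time.perf_counter() - start


cache = ActivationCache(slow_body)
images = list(range(20))
first = run_epoch(cache, images)   # calls slow_body 20 times
second = run_epoch(cache, images)  # served entirely from the cache
print(f"first: {first:.3f}s, second: {second:.3f}s, body calls: {cache.computed}")
```

The second pass returns almost immediately because `slow_body` is never called again. The same reasoning explains why re-running the cell is fast once the activations exist.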