Hi, thanks for the wonderful course v3; I have just started. I pulled the trigger and bought a cheap GTX 1050 Ti, which has CUDA compute capability 6.1. It has 4 GB of RAM and was very affordable.

However, I am getting OOM (out of memory) errors on some lessons. I was not able to run the Amazon satellite notebook.

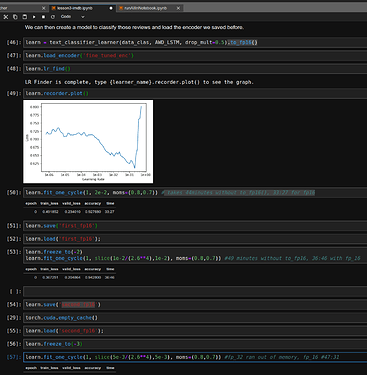

I then got stuck on Lesson 3 (IMDB), again with an out-of-memory error.

Regarding Lesson 3 IMDB, I tried some tricks to get around it. First I reduced my batch size to 8, then 4, then 2. I almost got to the end, but in the last training run, on epoch 6, I got another OOM.
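For reference, the manual batch-size reduction I describe above can be sketched roughly like this. It is only a sketch under assumptions: `fit_with_smaller_batches` and `train_fn` are hypothetical names I made up, not fastai functions, and `fake_train` is a stand-in that simulates an OOM so the snippet runs without a GPU. The one thing it relies on is that PyTorch reports a CUDA OOM as a `RuntimeError` whose message mentions "out of memory".

```python
def fit_with_smaller_batches(train_fn, batch_sizes=(8, 4, 2)):
    """Try each batch size in turn; return the first one that trains without OOM.

    train_fn is a hypothetical callable that builds the data/learner for the
    given batch size and runs training. Returns None if every size fails.
    """
    for bs in batch_sizes:
        try:
            train_fn(bs)
            return bs
        except RuntimeError as err:
            # Only swallow out-of-memory errors; re-raise anything else.
            if "out of memory" not in str(err).lower():
                raise
    return None


def fake_train(bs):
    # Stand-in for a real training call: pretends any batch size above 2
    # runs out of memory, so the example works on a machine without a GPU.
    if bs > 2:
        raise RuntimeError("CUDA out of memory (simulated)")


chosen = fit_with_smaller_batches(fake_train)
print("batch size that fit:", chosen)
```

In a real notebook the failed learner would probably also need to be deleted and the cache cleared (for example with `torch.cuda.empty_cache()`) before retrying, otherwise the memory from the failed attempt may still be held.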

I then tried the to_fp16() trick, which seems to work on my 1050 Ti with 4 GB of RAM. I am so new to this that I don't understand the benefits, or whether other parameters need to be changed to match to_fp16() (newer versions of Torch may have made that unnecessary). It seems to work and I am able to run everything, slightly faster and within 4 GB of RAM. It appears that at one point people didn't believe the to_fp16() trick would work on this card? But it apparently does. I don't know if I am fooling myself or if it just works.

So I have been looking for a better card with more memory, but anything with 12 GB seems to be out of my budget. I did, however, find some super cheap K40s, very old cards, for $130 that have 12 GB. Performance would be very bad, about 1/4 the speed, and the to_fp16() trick would not work, but apparently PyTorch supports this card. $130 seems so affordable that I am very tempted.

But I am also considering the RTX 2070. It is expensive, but it is on sale today for $430. Unfortunately it only has 8 GB, so I am worried I would be stuck in the same boat because I still won't have 12 GB of RAM. But the to_fp16() trick would work. I would have to dig into the fastai/PyTorch code to fully understand what needs to change, but in theory, if fp16 really halves memory use, I would have an effective "16 GB" with the to_fp16() trick, so to speak. The drawback is the $400-500 price, which is a lot for me right now, and I want to fully exhaust all other options first.
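To sanity-check the "effective 16 GB" idea, here is a back-of-the-envelope sketch. The parameter and activation counts are made-up numbers for illustration only, not measurements from the IMDB notebook, and it ignores gradients, optimizer state, and framework overhead. It also assumes, as I understand mixed-precision training usually works, that an fp32 master copy of the weights is kept alongside the fp16 working copy.

```python
BYTES_FP32 = 4  # a 32-bit float takes 4 bytes
BYTES_FP16 = 2  # a 16-bit float takes 2 bytes


def tensor_mib(num_elements, bytes_per_element):
    """Size in MiB of a tensor with the given element count and element width."""
    return num_elements * bytes_per_element / 2**20


# Hypothetical sizes, chosen only to illustrate the arithmetic.
n_params = 25_000_000        # model weights
n_activations = 300_000_000  # activations stored for one batch

# Plain fp32 training: weights and activations both in fp32.
fp32_total = tensor_mib(n_params, BYTES_FP32) + tensor_mib(n_activations, BYTES_FP32)

# Mixed precision (assumed): fp32 master weights + fp16 weights + fp16 activations.
mixed_total = (
    tensor_mib(n_params, BYTES_FP32)
    + tensor_mib(n_params, BYTES_FP16)
    + tensor_mib(n_activations, BYTES_FP16)
)

ratio = mixed_total / fp32_total
print(f"fp32: {fp32_total:.0f} MiB, mixed: {mixed_total:.0f} MiB, ratio: {ratio:.2f}")
```

Under these assumptions the saving comes mostly from the activations, so memory use drops to somewhat more than half rather than exactly half. If that holds, 8 GB with fp16 would behave like something less than a full 16 GB.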

So I am looking for advice:

Should I stick with the GTX 1050 Ti 4 GB and use the to_fp16() trick, which apparently works?

Should I buy the super cheap K40 with 12 GB? It would be slow, but I would be able to run the IMDB lesson. I can switch between cards with PyTorch's GPU selection option, and it would be nice to have two cards.

Should I bite the bullet, get the RTX 2070, and fully figure out the pros and cons of the to_fp16() trick moving forward?
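On running two cards side by side: one way to pick a card, as far as I know, is the `CUDA_VISIBLE_DEVICES` environment variable, set before CUDA is initialised. This is a different route from PyTorch's in-code option (`torch.cuda.set_device`). The helper name `select_gpu` and the index `1` below are hypothetical; which index the 12 GB card would get depends on the machine.

```python
import os


def select_gpu(index):
    """Restrict this process to a single GPU.

    Must run before the first CUDA call (ideally before importing torch),
    because the variables are read when CUDA is initialised.
    """
    # Number the cards by PCI bus position so indices match nvidia-smi.
    os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID"
    os.environ["CUDA_VISIBLE_DEVICES"] = str(index)
    return os.environ["CUDA_VISIBLE_DEVICES"]


# Hypothetical: assume the second card (index 1) is the one with more memory.
visible = select_gpu(1)
print("CUDA_VISIBLE_DEVICES =", visible)

# After this, PyTorch would see only that card and address it as "cuda:0":
# import torch
# device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
```

The same effect can be had from the shell by setting the variable when launching Jupyter, which avoids having to remember to run the cell first.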

The other option is Google Colab. I'm exploring this as well, but I wanted to try local first.

Also, I have found K80s for $300, which isn't bad.

Any advice?