Hello,

I’m writing regarding my other thread: Regression with negative values and tabular_learner

I’ve updated to the bleeding-edge version, but I still have some questions.

If I call

preds, y = learn.get_preds(test)

I get the inference I want on my real test set.

But why does calling

preds, y = learn.get_preds(DatasetType.Test)

return an array filled with 0? (Yes, I know I instantiated it with 0.)

    valid_idx = range(len(train_df)-20000, len(train_df))

    data = (TabularList.from_df(train_df, path='~//python_test//', cat_names=cat_vars, cont_names=cont_vars, procs=procs)
            .split_by_idx(valid_idx)
            .label_from_df(cols=dep_var, label_cls=FloatList, log=False)
            .add_test(test, label=0)
            .databunch())

From the docs: https://docs.fast.ai/data_block.html#Step-2:-Split-the-data-between-the-training-and-the-validation-set

So I have a validation set of 20k rows, right?

And then my add_test set has its labels filled with 0, right?
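On the first point, this is the arithmetic I am relying on for the split size, as a minimal sketch in plain Python. `n_rows` is a hypothetical stand-in for `len(train_df)`, not my real row count:

```python
# Hypothetical stand-in for len(train_df); only the arithmetic matters here.
n_rows = 100_000

# Same expression as in my data block: the last 20000 row positions.
valid_idx = range(n_rows - 20000, n_rows)

print(len(valid_idx))                # 20000
print(valid_idx[0], valid_idx[-1])   # 80000 99999
```

If that is right, `split_by_idx(valid_idx)` should be holding out the last 20000 rows of `train_df` for validation.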

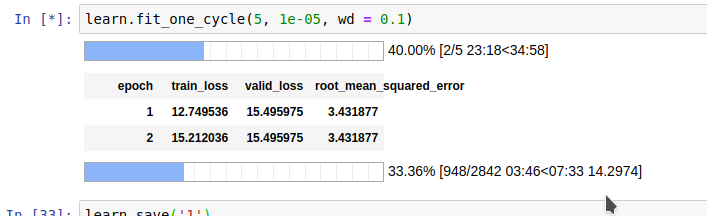

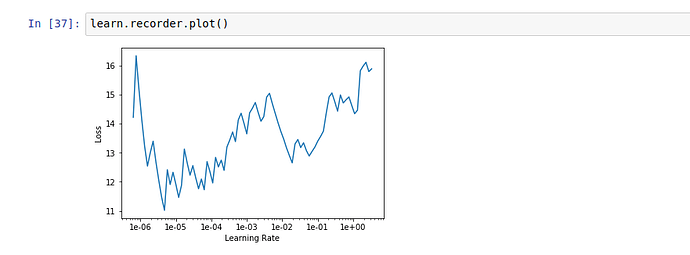

Also, in the bleeding-edge version the root_mean_squared_error metric works, but the LR finder plot is empty.

If you use my custom rmse function, the plot shows up.

Above all, during training I get the same validation loss and RMSE, which is troublesome. Why? Is it calculated against a vector of 0?
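To make that concrete, here is the kind of sanity check I have in mind, as a rough sketch in plain Python. The `rmse` helper and the toy numbers are made up for illustration; this is not fastai code and not my real data:

```python
import math

def rmse(preds, targets):
    """Root mean squared error between two equal-length sequences."""
    if len(preds) != len(targets):
        raise ValueError("preds and targets must have the same length")
    squared_errors = [(p - t) ** 2 for p, t in zip(preds, targets)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))

# Toy values, purely illustrative.
preds = [2.0, -1.0, 3.0, 0.5]
real_targets = [2.5, -1.5, 2.0, 0.0]

# Stand-in for targets that are all 0, like the placeholder label I passed to add_test.
zero_targets = [0.0] * len(preds)

rmse_vs_real = rmse(preds, real_targets)
rmse_vs_zero = rmse(preds, zero_targets)
print(rmse_vs_real, rmse_vs_zero)
```

If the RMSE reported during training matched the second number rather than the first, that would suggest it is being computed against zeros instead of my real validation labels.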

The last question is the most important one for me. Thanks!