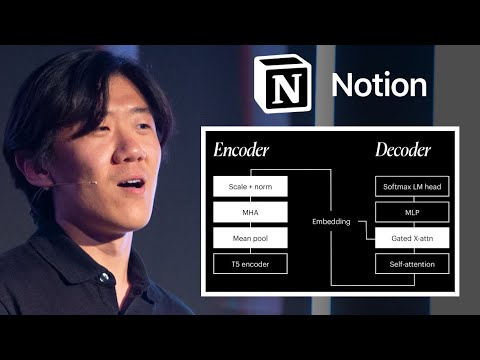

I just watched this YouTube video and was wondering if anyone has ideas on how the person in the video interpolated variations of images between the two input images (and later added and subtracted text embeddings). What does the inference flow / pipeline look like?
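To be concrete about what I mean by "interpolating" and "adding and subtracting", here is a rough sketch of the vector math I'm picturing. This is only my guess, not necessarily what the video does: I'm assuming the images and text are encoded into the same embedding space (CLIP-style), and that the in-between points come from spherical interpolation. The function names `slerp` and `shift_by_text` are made up for illustration, and the tiny 2-D vectors stand in for real embeddings.

```python
import math


def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def slerp(a, b, t):
    """Spherical interpolation between embeddings a and b, with t in [0, 1]."""
    a, b = normalize(a), normalize(b)
    dot = max(-1.0, min(1.0, sum(x * y for x, y in zip(a, b))))
    omega = math.acos(dot)  # angle between the two unit vectors
    s = math.sin(omega)
    if s < 1e-6:
        # Vectors are (anti)parallel: fall back to plain linear interpolation.
        return [(1 - t) * x + t * y for x, y in zip(a, b)]
    wa = math.sin((1 - t) * omega) / s
    wb = math.sin(t * omega) / s
    return [wa * x + wb * y for x, y in zip(a, b)]


def shift_by_text(image_emb, add_text_emb, sub_text_emb, scale=1.0):
    """Move an image embedding toward one text concept and away from another."""
    moved = [
        i + scale * (p - m)
        for i, p, m in zip(image_emb, add_text_emb, sub_text_emb)
    ]
    return normalize(moved)


# Toy stand-ins for two image embeddings (assumed values, not real data).
emb_a = [1.0, 0.0]
emb_b = [0.0, 1.0]
steps = [slerp(emb_a, emb_b, t / 4) for t in range(5)]
```

Each vector in `steps` would then have to be turned into an image by some generator that accepts an embedding as its conditioning input, which is the part I can't figure out.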

How do you go from embeddings back to an image, though?