Hi all,

In the ML course (2017),

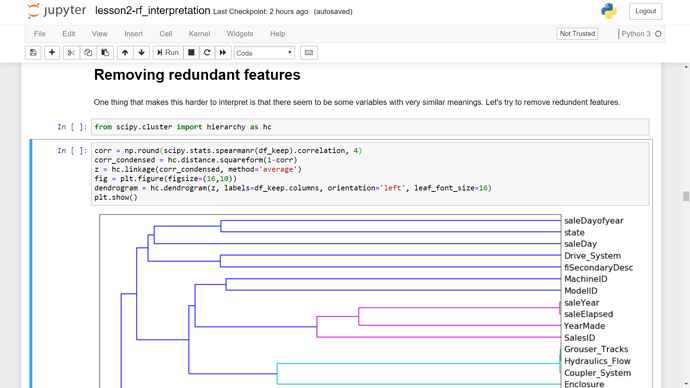

in lesson 3 (rf_interpretation),

I tried plotting the hierarchical clustering without first filtering by feature importance (finding the most effective independent features).

I mean, instead of keeping just the 30 most important columns (first image)

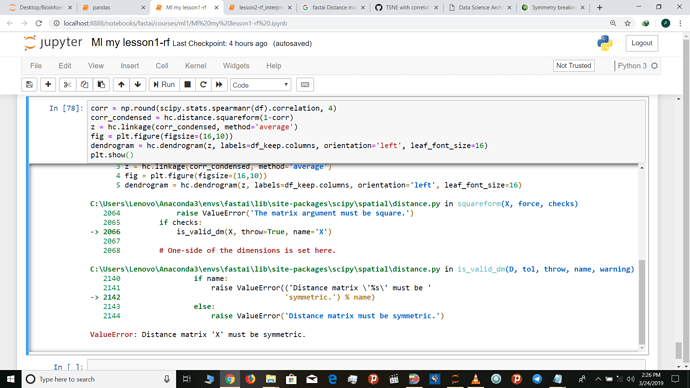

I used all columns (second image).

Then I got this error: “Distance matrix ‘X’ must be symmetric”.

Why is that?

I’m not sure, but I think the error is raised because one or several columns have some problem,

or because there are too many columns.

@sgugger

@PierreO

@init_27

Could you please answer this question?

Full stack trace:

https://github.com/mahdirezaey/as/blob/master/forums.ipynb

Can you share the entire stacktrace?

This is my guess of what is happening. I’m not sure though.

I see a lot of “invalid value encountered” messages in the code block that raised the error. There is a lot of non-numeric data in your data frame. You could try encoding it as categorical data.
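One way to narrow this down is sketched below. It is not a confirmed diagnosis of the notebook above. It assumes the “invalid value encountered” messages come from columns that hold a single distinct value, since a correlation with such a column is undefined and shows up as NaN. The toy frame and the column name `const` are made up for illustration. The second part shows why NaN matters for a symmetry check: NaN never compares equal to itself, so an element-wise comparison of a matrix with its transpose fails wherever a NaN sits.

```python
import numpy as np
import pandas as pd

# Toy data (hypothetical): "const" holds a single value, so its correlation
# with any other column is undefined.
df = pd.DataFrame({
    "a": [1.0, 2.0, 3.0, 4.0],
    "b": [4.0, 3.0, 1.0, 2.0],
    "const": [0.0, 0.0, 0.0, 0.0],
})

# Columns with at most one distinct value are the first thing to check.
constant_cols = [c for c in df.columns if df[c].nunique(dropna=False) <= 1]
print("constant columns:", constant_cols)

# A matrix containing NaN fails an element-wise symmetry test,
# because NaN != NaN.
m = np.array([[1.0, np.nan],
              [np.nan, 1.0]])
looks_symmetric = bool((m == m.T).all())
print("passes element-wise symmetry test:", looks_symmetric)
```

If `constant_cols` is non-empty on the real data frame, dropping those columns before computing the correlation is worth trying.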

@BBloggsbott, I think proc_df will do that automatically:

turning columns into categorical and

treating NaN as 0,

filling missing numeric values with the mean.

I have updated the notebook (it is one-hot encoded now).

Could you please check this link again: https://github.com/mahdirezaey/as/blob/master/forums.ipynb

I still get that error.

corr = np.round(scipy.stats.spearmanr(df_trn).correlation, 4)

Based on the docs, scipy.stats.spearmanr returns a correlation matrix. You’ve used .correlation here. This might be because you use a different version.

P.S. I haven’t done the fastai course or used the package before, so I’m just pointing out possible errors.
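For reference, here is a minimal sketch of the clustering step around the quoted `spearmanr` line, with two defensive additions that are my assumptions and not part of the course notebook: constant columns are dropped first, and the distance matrix is forced to be exactly symmetric with a zero diagonal before it is condensed. The data frame here is random toy data, and the `const` column is hypothetical. `no_plot=True` is used so the example runs without a plotting backend.

```python
import numpy as np
import pandas as pd
import scipy.stats
from scipy.cluster import hierarchy as hc
from scipy.spatial.distance import squareform

# Toy stand-in for df_trn (hypothetical data).
rng = np.random.default_rng(0)
df_trn = pd.DataFrame(rng.normal(size=(50, 4)), columns=list("abcd"))
df_trn["const"] = 1.0  # a column with a single value

# Assumption: drop columns with one distinct value before correlating.
keep = [c for c in df_trn.columns if df_trn[c].nunique() > 1]
df_keep = df_trn[keep]

# Same call as in the thread, applied to the filtered frame.
corr = np.round(scipy.stats.spearmanr(df_keep).correlation, 4)
if np.isnan(corr).any():
    raise ValueError("correlation matrix still contains NaN")

# Turn correlation into a distance and make it exactly symmetric.
dist = 1 - corr
dist = (dist + dist.T) / 2
np.fill_diagonal(dist, 0.0)

# squareform validates symmetry and the zero diagonal by default.
corr_condensed = squareform(dist)
z = hc.linkage(corr_condensed, method="average")
dendro = hc.dendrogram(z, labels=keep, no_plot=True)
print(dendro["ivl"])  # leaf labels in dendrogram order
```

To draw the plot, drop `no_plot=True` and pass the usual display options such as `orientation="left"`.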

Do you see the same error if you set labels=None? I am not sure what’s going on. I have faced the same error in my case.