Hi!


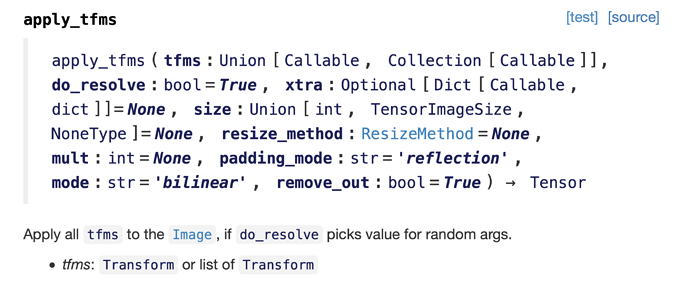

Could anyone clarify how exactly augmentation transforms (`aug_transforms`) are applied to the images in a batch in fastai?

My understanding is that, for each item (image) in a batch, one augmentation transform is picked at random and applied to that image, so with a batch size of 64 we end up sending 64 augmented images to the model to train on.
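To make the question concrete, here is a toy sketch in plain Python of the scheme described above (one transform chosen at random per item). It is not fastai's implementation: the "images" are short lists of numbers, and `flip`, `brighten`, `darken` and `shift` are hypothetical stand-ins for real augmentations.

```python
import random

# Toy "images": each is a short list of pixel intensities in [0, 1].
# These transforms are hypothetical stand-ins, not fastai's.
def flip(img):
    return img[::-1]

def brighten(img):
    return [min(1.0, x + 0.1) for x in img]

def darken(img):
    return [max(0.0, x - 0.1) for x in img]

def shift(img):
    return img[1:] + img[:1]

TRANSFORMS = [flip, brighten, darken, shift]

def augment_batch_one_each(batch, transforms, rng):
    """Apply exactly one randomly chosen transform to every item in the batch."""
    return [rng.choice(transforms)(img) for img in batch]

rng = random.Random(0)
batch = [[rng.random() for _ in range(8)] for _ in range(64)]
augmented = augment_batch_one_each(batch, TRANSFORMS, rng)
print(len(augmented))  # 64: one augmented version per input image
```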

Btw, if so, does that mean the model never sees the original images (i.e., it sees them with probability zero), only their augmented versions?
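One way to reason about the "never sees the originals" question is to compare two toy models: the one-random-transform-per-item scheme above, and a hypothetical alternative where each transform is applied independently with its own probability. Both functions and the 0.75 probabilities are assumptions for illustration only. The calculation also counts any applied transform as changing the image, even though a small random magnitude may barely alter it.

```python
import math

def p_untouched_one_choice(n_transforms, n_identity=0):
    """Chance an item stays original when exactly one of n_transforms is
    picked uniformly at random and n_identity of them are no-ops."""
    return n_identity / n_transforms

def p_untouched_independent(probs):
    """Chance an item stays original when each transform i is applied
    independently with probability probs[i] (hypothetical model)."""
    return math.prod(1 - p for p in probs)

print(p_untouched_one_choice(4))               # 0.0: the original is never seen
print(p_untouched_independent([0.75, 0.75]))   # 0.0625: untouched ~6% of the time
```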

More specifically, is it possible to configure the augmentation process so that, for each item in a batch, each augmentation from our `aug_transforms` set is applied a certain (fixed) number of times, and if so, how? For example, assuming a batch size of 64, 10 augmentations per image (let's call it the augmentation rate) and 4 transforms in our `aug_transforms`, the model would effectively see 64 × 10 × 4 = 2,560 augmented images per batch during training.
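The proposed scheme could be sketched like this in plain Python, again with toy list "images" and hypothetical random transforms rather than fastai's. Each transform draws a random magnitude, so the `rate` copies produced per transform generally differ from one another.

```python
import random

# Hypothetical transforms with random magnitudes (not fastai's).
def random_brightness(img, rng):
    delta = rng.uniform(-0.2, 0.2)
    return [min(1.0, max(0.0, x + delta)) for x in img]

def random_shift(img, rng):
    k = rng.randrange(len(img))
    return img[k:] + img[:k]

def random_flip(img, rng):
    # Flips half the time, so it may leave the image unchanged
    return img[::-1] if rng.random() < 0.5 else list(img)

def random_noise(img, rng):
    return [min(1.0, max(0.0, x + rng.gauss(0, 0.05))) for x in img]

def expand_batch(batch, transforms, rate, rng):
    """Apply every transform `rate` times to every item:
    len(batch) * rate * len(transforms) augmented images in total."""
    return [t(img, rng) for img in batch for t in transforms for _ in range(rate)]

rng = random.Random(42)
batch = [[rng.random() for _ in range(8)] for _ in range(64)]
transforms = [random_brightness, random_shift, random_flip, random_noise]
expanded = expand_batch(batch, transforms, rate=10, rng=rng)
print(len(expanded))  # 64 * 10 * 4 = 2560
```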

The idea is that, with a small training set, one could use a rather low batch size (e.g. 2) so that the model weights are updated more often per epoch, while each batch still contains a significant number of images (2 × 10 × 4 = 80, assuming an augmentation rate of 10 and 4 transforms) and is therefore representative enough of the overall population/distribution.
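The trade-off in the paragraph above comes down to simple arithmetic; this sketch assumes a hypothetical training set of 100 images. Whether the last, incomplete batch is kept depends on the data loader's settings, so the `drop_last` flag here is an assumption to check against your own setup.

```python
import math

def updates_per_epoch(n_images, batch_size, drop_last=False):
    # With drop_last, an incomplete final batch is skipped
    return n_images // batch_size if drop_last else math.ceil(n_images / batch_size)

def images_per_batch(batch_size, rate, n_transforms):
    # Augmented images seen per weight update under the proposed scheme
    return batch_size * rate * n_transforms

n_images = 100  # hypothetical small training set
for bs in (2, 64):
    print(bs, updates_per_epoch(n_images, bs), images_per_batch(bs, 10, 4))
# 2 50 80
# 64 2 2560
```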

Finally, what is the best way to figure out something like this on my own (reading the source code, the fastai documentation, tutorials?) rather than having people on the forum read through all of this?
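One starting point, independent of fastai, is Python's built-in `inspect` module, which can print a callable's signature and source. The sketch below uses `json.dumps` as a stand-in so it runs without fastai installed. With fastai available, the same helper could be pointed at `aug_transforms` (imported from `fastai.vision.all`), and in Jupyter/IPython appending `??` to a name (e.g. `aug_transforms??`) also shows its source.

```python
import inspect
import json

def show_api(obj, max_lines=15):
    """Print the signature and the first few source lines of a Python callable."""
    print(f"{obj.__module__}.{obj.__qualname__}{inspect.signature(obj)}")
    try:
        src = inspect.getsource(obj)
    except (OSError, TypeError):
        # Builtins and C extensions have no retrievable Python source
        print("  (source not available)")
        return None
    print("\n".join(src.splitlines()[:max_lines]))
    return src

# Stand-in example; with fastai installed one might instead run:
#   from fastai.vision.all import aug_transforms
#   show_api(aug_transforms)
src = show_api(json.dumps)
```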

Thanks!