I’m open to making norm type configurable. I’ll look into it.

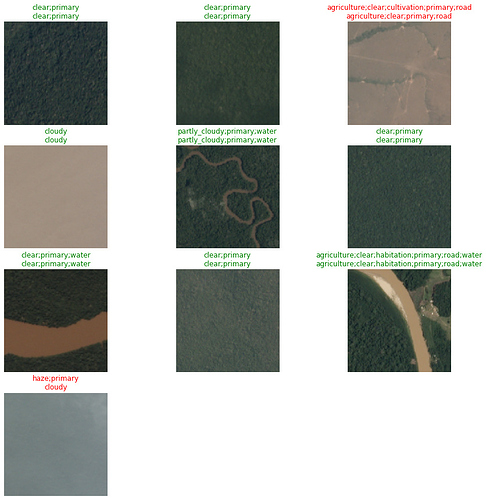

Just found this super cool little API change! I was doing a show_results on the planets dataset and:

Correct predictions are highlighted in green and incorrect ones in red! Such a subtle change, but it’s fantastic!

Also, a separate question @sgugger: is there a way to use MultiCategory with a get_y such as RegexLabeller? Essentially, I am trying to use a sigmoid output with the PETs dataset, and currently (if I am reading how to do multi-label right) it looks like I need to convert everything into a csv first. (I can understand the reasoning behind keeping the csv mandatory for such a task, so that you don’t do it accidentally.)

You don’t need to use a csv. MultiCategory expects labels to be in a list, so just add a transform that turns your label l into [l] and you should be fine.

Awesome! Easy enough to do. Thanks! For those wondering what that looks like, here it is in DataBlock form:

    def multi_l(l): return [l]

    pets_multi = DataBlock(blocks=(ImageBlock, MultiCategoryBlock),
                           get_items=get_image_files,
                           splitter=RandomSplitter(),
                           get_y=[RegexLabeller(pat = r'/([^/]+)_\d+.jpg$'), multi_l])
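To make the two-step get_y concrete, here is a minimal fastai-free sketch of what that pipeline does to a single filename: the regex pulls out the class name, then the wrapper puts it in a list. The `regex_label` helper and the example path are made up for illustration; it only mimics the labelling step and is not RegexLabeller itself.

```python
import re

# Same pattern as in the DataBlock above
PAT = r'/([^/]+)_\d+.jpg$'

def regex_label(fname, pat=PAT):
    "Hypothetical stand-in for the regex labelling step: return the first captured group."
    m = re.search(pat, str(fname))
    if m is None: raise ValueError(f"No label found in {fname!r}")
    return m.group(1)

def multi_l(l): return [l]

def get_y(fname):
    "Apply the two steps in order, as the get_y list is meant to."
    return multi_l(regex_label(fname))

label = get_y('/data/pets/images/great_pyrenees_173.jpg')  # hypothetical path
print(label)
```

In plain Python, iterating over a bare string yields single characters, which is presumably why the label has to be wrapped in a list before a multi-label step sees it.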

This is entirely custom; I just want some input on how I did it and how it could be adapted into a show function. I’m doing multiple keypoints, and when I call show_results I get a tensor size mismatch (I believe show_results on a TensorPoint currently expects a single point). Here is how I’m doing it manually for now:

    x, y = dbunch.one_batch()
    with torch.no_grad():
        res = learn.model(x)
    pt = res[0].view(-1, 2)
    a, b = dbunch.decode_batch((x[0], pt.unsqueeze(0)))[0]
    ctx = a.show(figsize=(9,9))
    b.show(ctx=ctx)

How would I go about adapting this for multiple keypoints? Or is there something I’m missing, and the regular show_results can do the job?

For reference, I get a size mismatch error when I call the regular show_results.

It’s hard to know what the problem is with no information about how you built your DataBunch and no stack trace. In general, the way to customize show_batch is to define a type-dispatched version of the show_batch function, like in vision.data.
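For anyone unfamiliar with the pattern, here is a rough illustration of type dispatch using the standard library’s functools.singledispatch. This is not fastai’s mechanism or the real show_batch signature; the `Points` class and `describe` function are invented just to show how one function name can pick an implementation from the type of its argument.

```python
from functools import singledispatch

class Points(list):
    "Hypothetical container for several (x, y) keypoints."

@singledispatch
def describe(x):
    # Fallback used when no more specific version is registered
    return f"generic item: {x!r}"

@describe.register
def _(x: Points):
    # Specialised version, chosen whenever the argument is a Points
    return f"{len(x)} keypoints"

print(describe(3))
print(describe(Points([(0.1, 0.2), (0.5, 0.5)])))
```

The idea carries over: a version registered for your own target type would be picked up whenever a batch of that type is shown.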

Thanks! I’ll look into it! I’m building it like this:

    def img2kpts(f): return path/f'{str(f)}.cat'

    def get_points(coords:array):
        kpts = []
        for i in range(0, len(coords), 2):
            kpts.append([coords[i], coords[i+1]])
        return tensor(kpts)

    def get_y(f:Path):
        pts = np.genfromtxt(img2kpts(f))[1:]
        return get_points(pts)

    def get_ip(img:PILImage, pnts:array): return TensorPoint.create(pnts, img_size=img.size)

    dblock = DataBlock(blocks=(ImageBlock, PointsBlock),
                       get_items=get_image_files,
                       splitter=RandomSplitter(),
                       get_y=lambda o: get_y(o.name))

And here is that runtime error log:

---------------------------------------------------------------------------

RuntimeError Traceback (most recent call last)

<ipython-input-340-c3b657dcc9ae> in <module>()

----> 1 learn.show_results()

21 frames

/usr/local/lib/python3.6/dist-packages/fastai2/learner.py in show_results(self, ds_idx, dl, max_n, **kwargs)

327 b = dl.one_batch()

328 _,_,preds = self.get_preds(dl=[b], with_decoded=True)

--> 329 self.dbunch.show_results(b, preds, max_n=max_n, **kwargs)

330

331 def show_training_loop(self):

/usr/local/lib/python3.6/dist-packages/fastai2/data/core.py in show_results(self, b, out, max_n, ctxs, show, **kwargs)

82 x,y,its = self.show_batch(b, max_n=max_n, show=False)

83 b_out = b[:self.n_inp] + (tuple(out) if is_listy(out) else (out,))

---> 84 x1,y1,outs = self.show_batch(b_out, max_n=max_n, show=False)

85 res = (x,x1,None,None) if its is None else (x, y, its, outs.itemgot(slice(self.n_inp,None)))

86 if not show: return res

/usr/local/lib/python3.6/dist-packages/fastai2/data/core.py in show_batch(self, b, max_n, ctxs, show, **kwargs)

76 def show_batch(self, b=None, max_n=9, ctxs=None, show=True, **kwargs):

77 if b is None: b = self.one_batch()

---> 78 if not show: return self._pre_show_batch(b, max_n=max_n)

79 show_batch(*self._pre_show_batch(b, max_n=max_n), ctxs=ctxs, max_n=max_n, **kwargs)

80

/usr/local/lib/python3.6/dist-packages/fastai2/data/core.py in _pre_show_batch(self, b, max_n)

70 b = self.decode(b)

71 if hasattr(b, 'show'): return b,None,None

---> 72 its = self._decode_batch(b, max_n, full=False)

73 if not is_listy(b): b,its = [b],L((o,) for o in its)

74 return detuplify(b[:self.n_inp]),detuplify(b[self.n_inp:]),its

/usr/local/lib/python3.6/dist-packages/fastai2/data/core.py in _decode_batch(self, b, max_n, full)

64 f = self.after_item.decode

65 f = compose(f, partial(getattr(self.dataset,'decode',noop), full = full))

---> 66 return L(batch_to_samples(b, max_n=max_n)).map(f)

67

68 def _pre_show_batch(self, b, max_n=9):

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in map(self, f, *args, **kwargs)

360 else f.format if isinstance(f,str)

361 else f.__getitem__)

--> 362 return self._new(map(g, self))

363

364 def filter(self, f, negate=False, **kwargs):

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in _new(self, items, *args, **kwargs)

313 @property

314 def _xtra(self): return None

--> 315 def _new(self, items, *args, **kwargs): return type(self)(items, *args, use_list=None, **kwargs)

316 def __getitem__(self, idx): return self._get(idx) if is_indexer(idx) else L(self._get(idx), use_list=None)

317 def copy(self): return self._new(self.items.copy())

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in __call__(cls, x, *args, **kwargs)

39 return x

40

---> 41 res = super().__call__(*((x,) + args), **kwargs)

42 res._newchk = 0

43 return res

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in __init__(self, items, use_list, match, *rest)

304 if items is None: items = []

305 if (use_list is not None) or not _is_array(items):

--> 306 items = list(items) if use_list else _listify(items)

307 if match is not None:

308 if is_coll(match): match = len(match)

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in _listify(o)

240 if isinstance(o, list): return o

241 if isinstance(o, str) or _is_array(o): return [o]

--> 242 if is_iter(o): return list(o)

243 return [o]

244

/usr/local/lib/python3.6/dist-packages/fastcore/foundation.py in __call__(self, *args, **kwargs)

206 if isinstance(v,_Arg): kwargs[k] = args.pop(v.i)

207 fargs = [args[x.i] if isinstance(x, _Arg) else x for x in self.pargs] + args[self.maxi+1:]

--> 208 return self.fn(*fargs, **kwargs)

209

210 #Cell

/usr/local/lib/python3.6/dist-packages/fastcore/utils.py in _inner(x, *args, **kwargs)

351 if order is not None: funcs = funcs.sorted(order)

352 def _inner(x, *args, **kwargs):

--> 353 for f in L(funcs): x = f(x, *args, **kwargs)

354 return x

355 return _inner

/usr/local/lib/python3.6/dist-packages/fastcore/transform.py in decode(self, o, full)

181

182 def decode (self, o, full=True):

--> 183 if full: return compose_tfms(o, tfms=self.fs, is_enc=False, reverse=True, split_idx=self.split_idx)

184 #Not full means we decode up to the point the item knows how to show itself.

185 for f in reversed(self.fs):

/usr/local/lib/python3.6/dist-packages/fastcore/transform.py in compose_tfms(x, tfms, is_enc, reverse, **kwargs)

121 for f in tfms:

122 if not is_enc: f = f.decode

--> 123 x = f(x, **kwargs)

124 return x

125

/usr/local/lib/python3.6/dist-packages/fastcore/transform.py in decode(self, x, **kwargs)

60 def use_as_item(self): return ifnone(self.as_item_force, self.as_item)

61 def __call__(self, x, **kwargs): return self._call('encodes', x, **kwargs)

---> 62 def decode (self, x, **kwargs): return self._call('decodes', x, **kwargs)

63 def setup(self, items=None): return self.setups(items)

64 def __repr__(self): return f'{self.__class__.__name__}: {self.use_as_item} {self.encodes} {self.decodes}'

/usr/local/lib/python3.6/dist-packages/fastcore/transform.py in _call(self, fn, x, split_idx, **kwargs)

68 f = getattr(self, fn)

69 if self.use_as_item or not is_listy(x): return self._do_call(f, x, **kwargs)

---> 70 res = tuple(self._do_call(f, x_, **kwargs) for x_ in x)

71 return retain_type(res, x)

72

/usr/local/lib/python3.6/dist-packages/fastcore/transform.py in <genexpr>(.0)

68 f = getattr(self, fn)

69 if self.use_as_item or not is_listy(x): return self._do_call(f, x, **kwargs)

---> 70 res = tuple(self._do_call(f, x_, **kwargs) for x_ in x)

71 return retain_type(res, x)

72

/usr/local/lib/python3.6/dist-packages/fastcore/transform.py in _do_call(self, f, x, **kwargs)

72

73 def _do_call(self, f, x, **kwargs):

---> 74 return x if f is None else retain_type(f(x, **kwargs), x, f.returns_none(x))

75

76 add_docs(Transform, decode="Delegate to `decodes` to undo transform", setup="Delegate to `setups` to set up transform")

/usr/local/lib/python3.6/dist-packages/fastcore/dispatch.py in __call__(self, *args, **kwargs)

96 if not f: return args[0]

97 if self.inst is not None: f = MethodType(f, self.inst)

---> 98 return f(*args, **kwargs)

99

100 def __get__(self, inst, owner):

/usr/local/lib/python3.6/dist-packages/fastai2/vision/core.py in decodes(self, x)

211

212 def encodes(self, x:TensorPoint): return _scale_pnts(x, self._get_sz(x), self.do_scale, self.y_first)

--> 213 def decodes(self, x:TensorPoint): return _unscale_pnts(x, self._get_sz(x))

214

215 #Cell

/usr/local/lib/python3.6/dist-packages/fastai2/vision/core.py in _unscale_pnts(y, sz)

186 return TensorPoint(res, img_size=sz)

187

--> 188 def _unscale_pnts(y, sz): return TensorPoint((y+1) * tensor(sz).float()/2, img_size=sz)

189

190 #Cell

/usr/local/lib/python3.6/dist-packages/fastai2/torch_core.py in _f(self, *args, **kwargs)

256 def _f(self, *args, **kwargs):

257 cls = self.__class__

--> 258 res = getattr(super(TensorBase, self), fn)(*args, **kwargs)

259 return retain_type(res, self)

260 return _f

RuntimeError: The size of tensor a (18) must match the size of tensor b (2) at non-singleton dimension 0

Oh, this error comes from the fact your model doesn’t output predictions that are bs * n_points * 2 but are flattened.
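To illustrate the shape issue in plain Python (no torch, so the numbers are easy to follow): the decode step scales each point by the image size, which only lines up if the coordinates come in (x, y) pairs. The helper names and the image size below are invented for the example; with a real tensor the equivalent reshape would be something like `preds.view(bs, -1, 2)`.

```python
def unflatten_points(flat, n_coords=2):
    "Group a flat [x0, y0, x1, y1, ...] list into [[x0, y0], [x1, y1], ...]."
    if len(flat) % n_coords:
        raise ValueError(f"{len(flat)} values cannot be split into groups of {n_coords}")
    return [list(flat[i:i + n_coords]) for i in range(0, len(flat), n_coords)]

def unscale_points(points, img_size):
    "Map coordinates from [-1, 1] to pixels, mirroring (y+1) * sz/2 in the traceback."
    w, h = img_size
    return [[(x + 1) * w / 2, (y + 1) * h / 2] for x, y in points]

flat_pred = [0.0] * 18            # one sample: 9 keypoints flattened to 18 values
points = unflatten_points(flat_pred)
pixels = unscale_points(points, img_size=(160, 120))  # assumed (width, height)
print(len(points), pixels[0])
```

Applying the scaling directly to the 18 flat values is what trips up the size-2 image size in the error above.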

I see. I am creating my model via cnn_learner, which is where that behavior comes from.

The output size is [bs, n_points*2].

What should that tensor size look like instead?

Ah yes, that’s incompatible with cnn_learner. I guess we need to fix the decode in PointScaler then. Will do tomorrow.

Thanks!!!

I believe I’ve found a bug with verify_images. No matter what, it reports that it cannot identify the image file. I manually grabbed a URL from the file and looked at it, and it was perfectly fine, yet verify_images flags it as invalid! @pnvijay also noticed this behavior.

Here is the relevant code:

    for i, n in enumerate(classes):
        print(n)
        path_f = Path(files[i])
        download_images(path_f, path/folders[i], max_pics=50)

    for n in classes:
        print(n)
        verify_images(path/n, delete=True, max_size=500)

I’m looking into it as well now. The failure is in PIL’s Image.open:

---------------------------------------------------------------------------

OSError Traceback (most recent call last)

<ipython-input-49-9585efa78fe5> in <module>()

----> 1 img = Image.open(path_bb.ls()[0])

/usr/local/lib/python3.6/dist-packages/PIL/Image.py in open(fp, mode)

2816 for message in accept_warnings:

2817 warnings.warn(message)

-> 2818 raise IOError("cannot identify image file %r" % (filename if filename else fp))

2819

2820

OSError: cannot identify image file '/content/data/wolves/wolves/00000015.jpg'

If you tag me, please include the whole stack trace

Getting warmer! @sgugger, I finally found the issue. When I try to download a URL with show_progress set to False, I get the following traceback:

---------------------------------------------------------------------------

AttributeError Traceback (most recent call last)

<ipython-input-126-65db74e53af5> in <module>()

1 download_url(urls[0], Path(f"{0}{suffix[0]}"), overwrite=True,

----> 2 show_progress=False, timeout=4)

/usr/local/lib/python3.6/dist-packages/fastai2/data/external.py in download_url(url, dest, overwrite, pbar, show_progress, chunk_size, timeout, retries)

147 pbar = progress_bar(range(file_size), leave=False, parent=pbar)

148 try:

--> 149 pbar.update(0)

150 for chunk in u.iter_content(chunk_size=chunk_size):

151 nbytes += len(chunk)

AttributeError: 'NoneType' object has no attribute 'update'

Whereas show_progress=True works and downloads a non-corrupted image.

This is where the issue is! With that setting I went through the rest and it worked fine.

My code:

    def _download_image_inner(dest, inp, timeout=4):
        i,url = inp
        # Take the file extension from the URL, defaulting to .jpg
        suffix = re.findall(r'\.\w+?(?=(?:\?|$))', url)
        suffix = suffix[0] if len(suffix)>0 else '.jpg'
        try: download_url(url, dest/f"{i:08d}{suffix}", overwrite=True, timeout=timeout)
        # Report the failure (the bare f-string here was a no-op)
        except Exception as e: print(f"Couldn't download {url}.")

    def download_images(url_file, dest, max_pics=1000, n_workers=8, timeout=4):
        "Download images listed in text file `url_file` to path `dest`, at most `max_pics`"
        urls = url_file.read().strip().split("\n")[:max_pics]
        dest = Path(dest)
        dest.mkdir(exist_ok=True)
        parallel(partial(_download_image_inner, dest, timeout=timeout), list(enumerate(urls)), n_workers=n_workers)

Ah yes, it misses a test. Should be fixed now.
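For readers following along, the failure mode in the traceback is that pbar stays None when show_progress is False, yet update is still called on it. A guard along these lines avoids that; this is only a sketch with made-up names (`copy_chunks`, `CountingBar`), not the actual change that went into download_url.

```python
class CountingBar:
    "Hypothetical progress bar that just remembers the last value it was given."
    def __init__(self): self.last = None
    def update(self, n): self.last = n

def copy_chunks(chunks, pbar=None, show_progress=True):
    "Consume byte chunks, touching the progress bar only when one is in use."
    use_bar = show_progress and pbar is not None
    nbytes = 0
    if use_bar: pbar.update(0)
    for chunk in chunks:
        nbytes += len(chunk)
        if use_bar: pbar.update(nbytes)
    return nbytes

# No bar and show_progress=False: the combination that crashed above
print(copy_chunks([b'abc', b'de'], pbar=None, show_progress=False))
```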

Thanks!

One other little tidbit: with multiple keypoints I cannot just use a batch transform. I must include a resize in item_tfms, or else I get a tensor size mismatch. My temporary workaround is an item_tfms with Resize() set to my target size.

That said, this can be pretty low on the priority list.

I imagine this issue actually has to do with how multiple keypoints are handled from the model’s perspective, where the keypoints may not line up with the image. If so, let me work on it a bit. If you believe otherwise:

I.e., this will not work:

    dbunch = dblock.databunch(newPath, path=newPath, bs=32,batch_tfms=[*aug_transforms(size=(120, 160)), Normalize.from_stats(*imagenet_stats)])

This will work:

    dbunch = dblock.databunch(newPath, path=newPath, bs=32,item_tfms=Resize(120,160), batch_tfms=[*aug_transforms(size=(120, 160)), Normalize.from_stats(*imagenet_stats)])

This is only necessary if your images are not all of the same size (and it is not specific to points: you can only batch tensors of compatible shapes).
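As a toy illustration of why same-size items matter: collating a batch only works when every item has the same shape. The sketch below uses nested lists and invented helper names instead of tensors; the per-item crop plays the role of a Resize in item_tfms, applied before anything is batched.

```python
def shape(img):
    "Return (height, width) of an image stored as a list of rows."
    return (len(img), len(img[0]) if img else 0)

def stack(items):
    "Collate items into a batch, refusing mismatched shapes."
    shapes = {shape(o) for o in items}
    if len(shapes) > 1:
        raise ValueError(f"Cannot batch items with shapes {sorted(shapes)}")
    return list(items)

def crop_to(img, size):
    "Crude per-item 'resize': keep the top-left size[0] x size[1] corner."
    h, w = size
    return [row[:w] for row in img[:h]]

small = [[0] * 4 for _ in range(3)]   # 3x4 image
large = [[0] * 6 for _ in range(5)]   # 5x6 image

try:
    stack([small, large])             # different shapes: fails
except ValueError as e:
    print(e)

batch = stack([crop_to(o, (3, 4)) for o in (small, large)])  # same shape: works
print(len(batch), shape(batch[0]), shape(batch[1]))
```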

So if they’re not, and we declare a size in batch_tfms, do we still need one in item_tfms? (Or a separate resize transform?)